Looking Outwards

Eunoia collects the artist’s brainwave data with an EEG sensor and then uses that data to vibrate five bowls of water. Using Processing and MaxMSP, each bowl receives the frequencies of one band of her brain activity (Alpha, Beta, Delta, Gamma, Theta), and her eye movement is also captured. The piece makes visible data that the average person usually finds difficult to grasp.

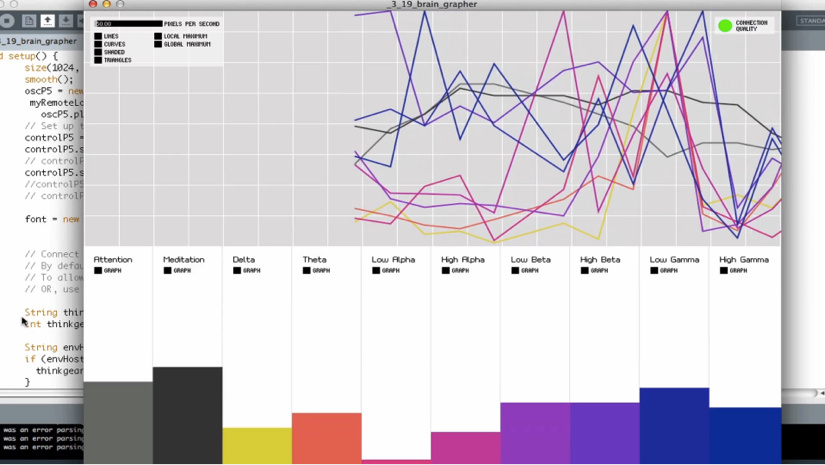

This is the data she is using, shown as a graph, and it seems incomprehensible.

Elements by Britzpetermann

Put simply, Elements is an interactive walkway. One of four elements builds up wherever the visitor places their hands. Because the piece is so simple, the experience of Elements relies heavily on its visuals, and the graphics aren’t as natural-looking as they could be. Looking at Elements, you are very aware that the images are computationally generated. It is very difficult to re-create something as magnificent and beautiful as real fire and sand dunes without seeming lame next to the real thing.

Like Elements, Equilibrium is disturbed when touched and settles into a calmer state when left alone, except that in this project the visuals are stellar. It is very complex but soothing at the same time, and all in very good taste. Interestingly, I read on Memo Akten’s website that the wild landscapes of Madagascar served as inspiration for this project, which puts the piece in a whole new frame. Equilibrium creates its own platform by becoming something incomparable to anything in nature while still reflecting natural behavior.