Face Seismograph

Soliciting participants on Facebook – my original scheme for the final project

I planned to print screenshots out and frame them like so

As is often the case in art, my project to capture the things that make us smile turned out to have been implemented a year before by Brooklyn artist Kyle McDonald. The embarrassing part of this is that I – unknowingly – used Kyle’s library to make my project.

In any case, this initial attempt/failure emboldened me to try something more nuanced with faces. I wanted to consider a continuum of expressions rather than a binary smile-on/smile-off.

Face Seismograph

Face Seismograph is a tool for recording and graphing states of excitement over time. It was written in openFrameworks using Kyle McDonald’s ofxFaceTracker addon.

Excited?

Excited!

The seismograph measures excitement by tracking the degree to which one smiles or moves their eyebrows from a resting state.
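To make the idea concrete, here is a rough sketch of how such a score could be computed. It is not the project's actual code (which was written in openFrameworks); the `FaceReading` fields, the `smile_range` and `brow_range` constants, and the equal weighting of smile and eyebrows are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class FaceReading:
    """One frame of hypothetical tracker output, in arbitrary tracker units."""
    mouth_width: float
    eyebrow_height: float


def excitement(reading, resting, smile_range=4.0, brow_range=2.0):
    """Return a 0..1 score based on deviation from the resting face.

    smile_range and brow_range are assumed calibration constants: the
    deviation at which each feature counts as fully "excited".
    """
    # A smile only counts when the mouth is wider than at rest.
    smile = max(0.0, reading.mouth_width - resting.mouth_width) / smile_range
    # Eyebrows count in either direction (raised or furrowed).
    brow = abs(reading.eyebrow_height - resting.eyebrow_height) / brow_range
    # Clamp each component to 1.0, then average them with equal weight.
    return (min(smile, 1.0) + min(brow, 1.0)) / 2.0


resting = FaceReading(mouth_width=14.0, eyebrow_height=8.0)
half_smile = FaceReading(mouth_width=16.0, eyebrow_height=8.0)
score = excitement(half_smile, resting)
```

Calling this once per video frame and appending the result to a list would give the kind of time series that the seismograph draws.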

One limitation of this approach is that in practice, internal states of excitement or arousal may not have corresponding facial expressions.

Genuinely excited

Genuinely excited


Depressed

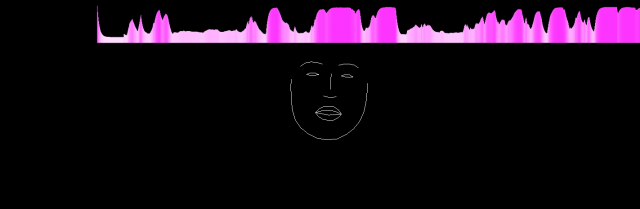

I staged a casual conversation between myself and a friend. While we chatted about life, two instances of Face Seismograph approximated and recorded the intensity of our excitement. Viewing the history of our facial expressions, I began to notice surprising rhythms of expression.

To present this conversation, I play each recording on a separate iMac. The two recordings are synchronized via OSC. A viewer can scrub through the video on both computers simultaneously.
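For anyone curious what the synchronization might look like on the wire, below is a minimal sketch of an OSC scrub message built by hand with the Python standard library. The installation itself used openFrameworks, so this is only an illustration: the `/scrub` address, the single float argument (playback position in seconds), and the function names are assumptions, not the project's actual protocol.

```python
import socket
import struct


def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_scrub(position_seconds, address="/scrub"):
    """Build an OSC message with one big-endian float32 argument."""
    return (
        _osc_string(address)
        + _osc_string(",f")  # type tag string: a single float argument
        + struct.pack(">f", position_seconds)
    )


def decode_scrub(packet):
    """Parse a message produced by encode_scrub; returns (address, position)."""
    address = packet[: packet.index(b"\x00")].decode("ascii")
    (position,) = struct.unpack(">f", packet[-4:])
    return address, position


def send_scrub(position_seconds, host, port):
    """Send the scrub position to the other computer over UDP."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sock.sendto(encode_scrub(position_seconds), (host, port))
    finally:
        sock.close()
```

In this sketch, whichever computer the viewer is scrubbing would call `send_scrub` with the new position, and the other would decode the packet and seek its recording to the same time.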

In a future iteration of this project, I’d like to highlight the comparison of excitement signatures with greater clarity. Also, I need to label my axes.

Would be really cool if you could include a sound component

like the name – face seismograph

maybe interesting to measure other bio data in real time and map at the same time –

it’s very difficult to follow both seismographs at once in sync—can’t focus your eye in both places. did you consider overlaying them? would also work well if you color coded faces to match

if you’re trying to look at expressions during conversations, I think the design for that should be different from the design for one that you watch at the top of your computer screen—as a mirror

there’s a tension between the nature of this project as an installation, and as a visualization.

As an installation, it wants to be minimalistic. As a visualization, it wants to better support comparisons — and have things like labeled axes, tick marks, multiple parallel timelines.

I recommend having the sound from the conversation, perhaps filtered so you can’t quite make out the words.

data looks like landscape, could be used for making things, more interaction, dynamically created world

“different patterns”: it would be cool if the patterns could be exported to PDF, etc. (I mean the waves that appear at the top)

aside from a vague sense of interest, this doesn’t make me *feel* anything or provoke a lot of thought

I would like to see this combined with your earlier app that hears words and displays them on the screen. maybe show which words appeared when the people were most interested or least interested. Maybe you could find a pattern like, if someone says “I” too much, the other person becomes less interested.