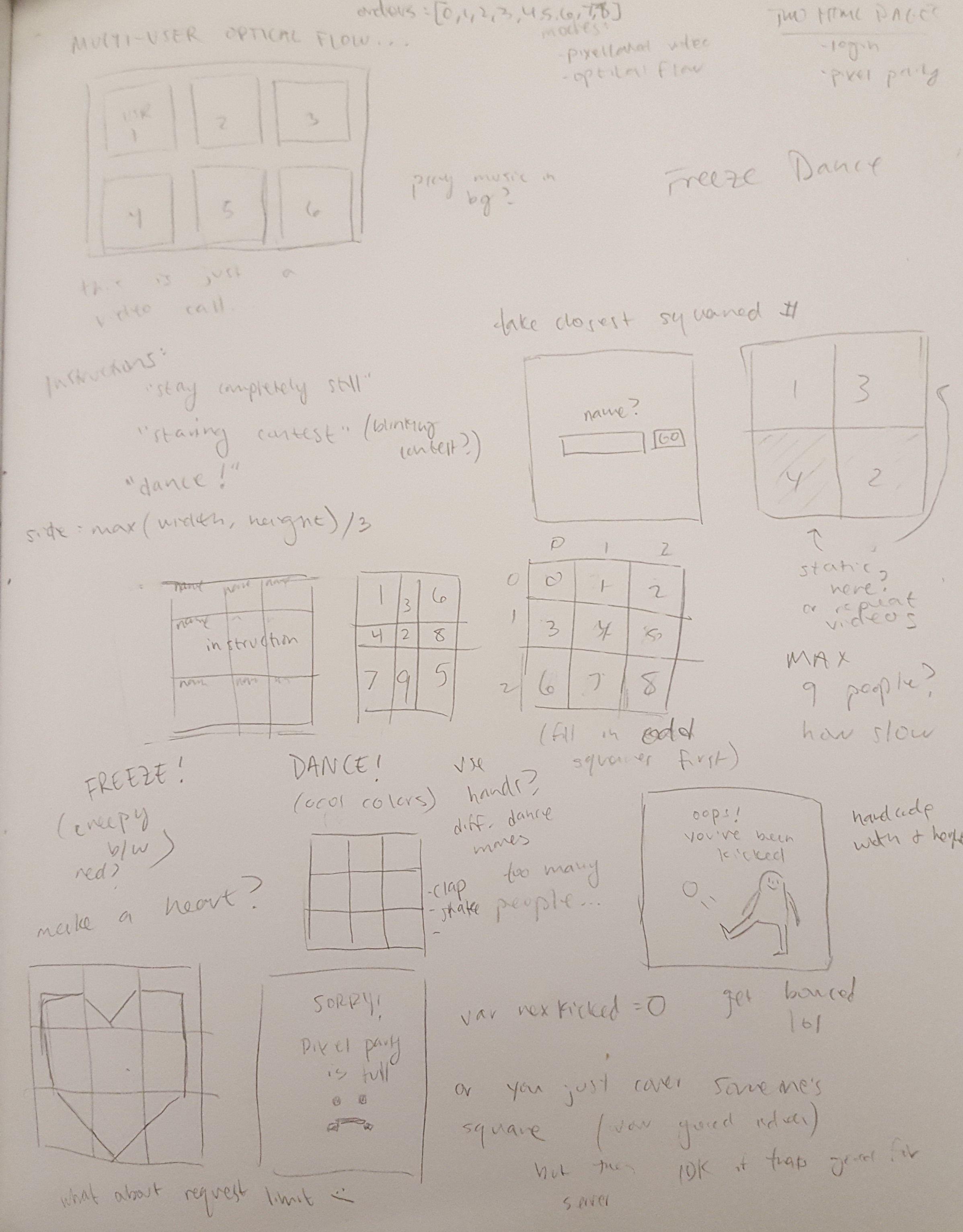

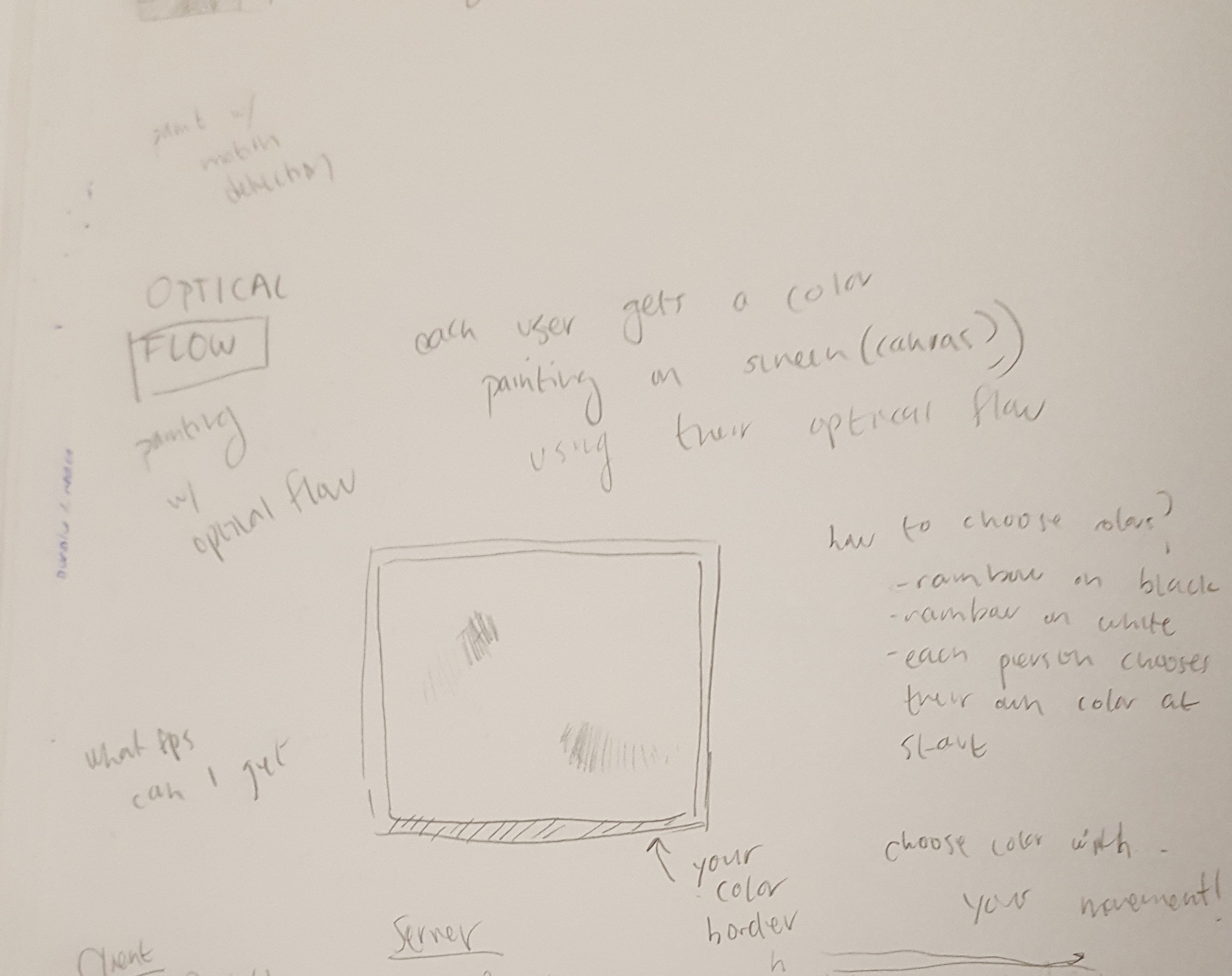

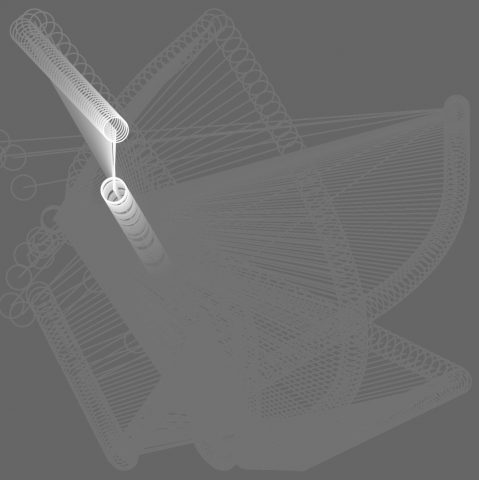

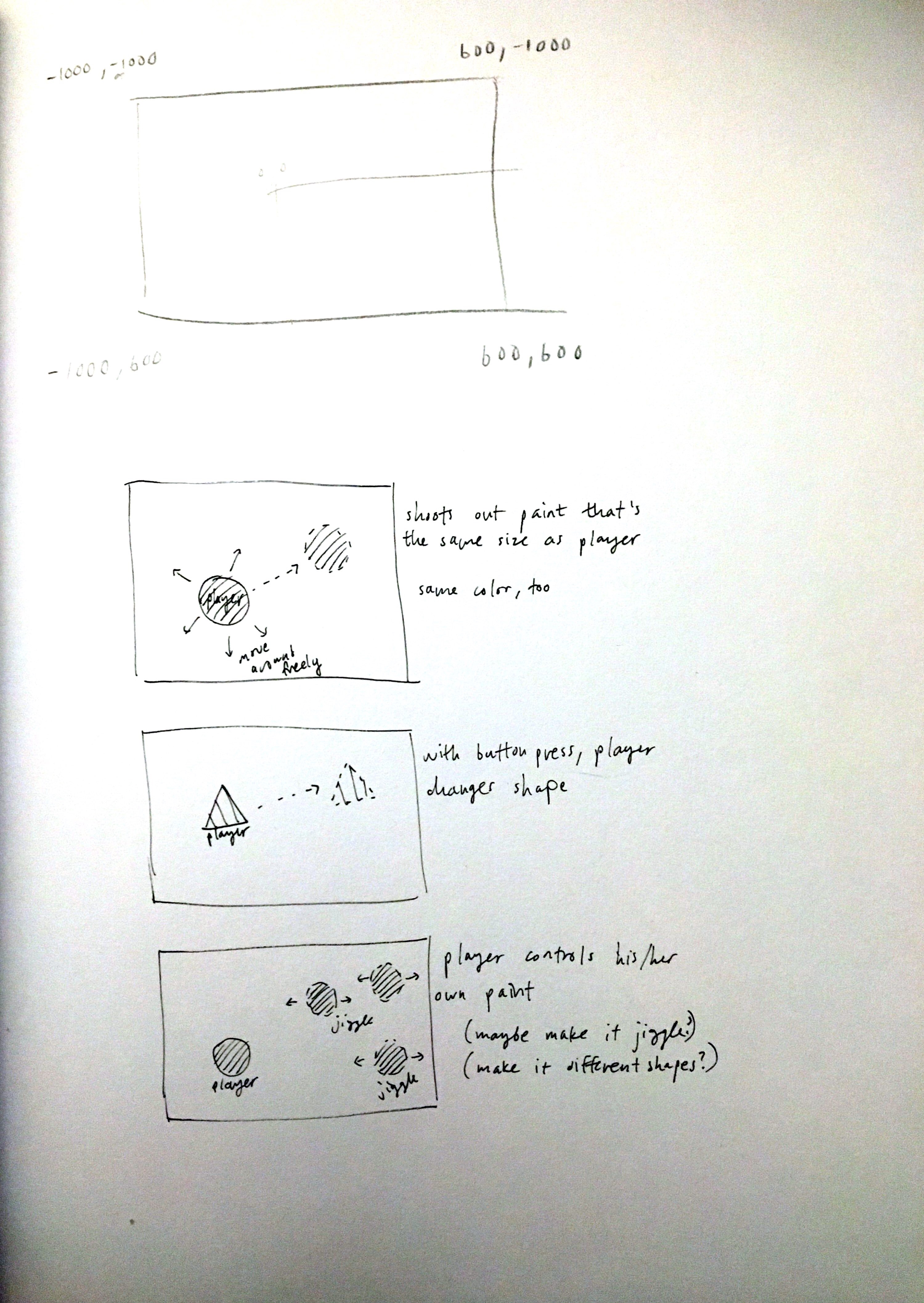

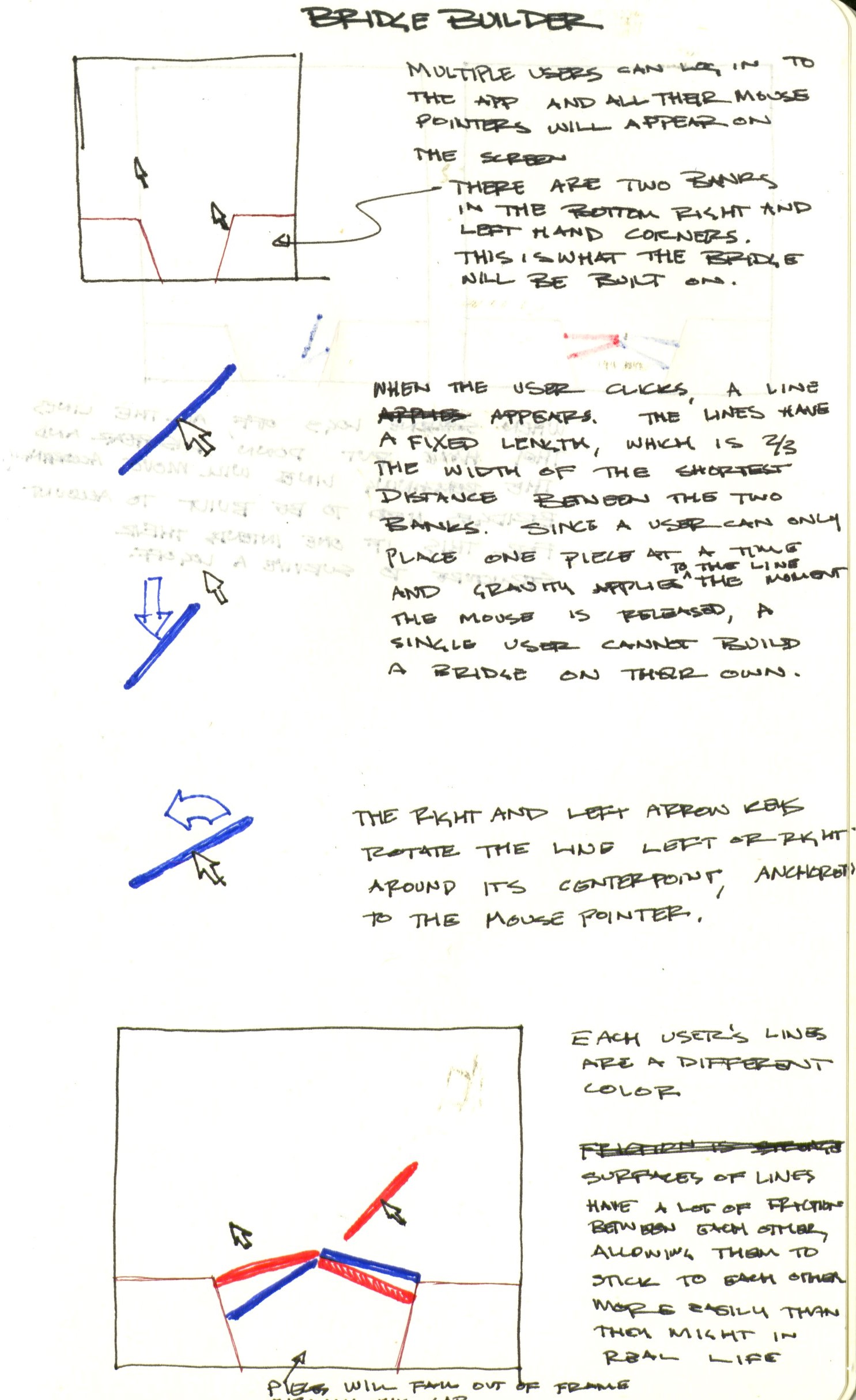

This project was intended to be a collaborative bridge builder. When the user presses and holds the mouse, a line appears, centered on the user's mouse, that is two-thirds the width of the gap between the two ground banks. When the mouse is released, physics begins applying to the line, and it falls until something obstructs it. Since a user can only place one line at a time and no line spans the full width of the gap, a single user cannot build a bridge alone. Building a bridge requires multiple users collaborating. When a user logs off, though, all the lines they have set down disappear. Thus, if a user wants a bridge that will survive after all the other users log off, they have to lay their lines in such a way that the lines support themselves without the other users' lines.
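The placement and log-off rules above can be written down as a small data model. This is only a sketch under assumptions: the `Line` shape and the names `makeLine`, `removeLinesOf`, and `spansGap` are hypothetical and are not taken from the attached app.

```typescript
// Hypothetical data model for the rules described above.
interface Line {
  owner: string;  // id of the user who placed the line
  cx: number;     // x of the line's centre (the mouse position)
  cy: number;     // y of the line's centre
  length: number; // two-thirds of the gap width
}

// Build a line centred on the mouse, two-thirds as long as the gap.
function makeLine(owner: string, mouseX: number, mouseY: number, gapWidth: number): Line {
  return { owner: owner, cx: mouseX, cy: mouseY, length: (2 * gapWidth) / 3 };
}

// When a user logs off, drop every line that user placed.
function removeLinesOf(lines: Line[], owner: string): Line[] {
  return lines.filter((line) => line.owner !== owner);
}

// True only if one line could bridge the gap by itself.
function spansGap(line: Line, gapWidth: number): boolean {
  return line.length >= gapWidth;
}
```

Because every line is shorter than the gap, `spansGap` is false for any line made by `makeLine`, which is what forces users to collaborate.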

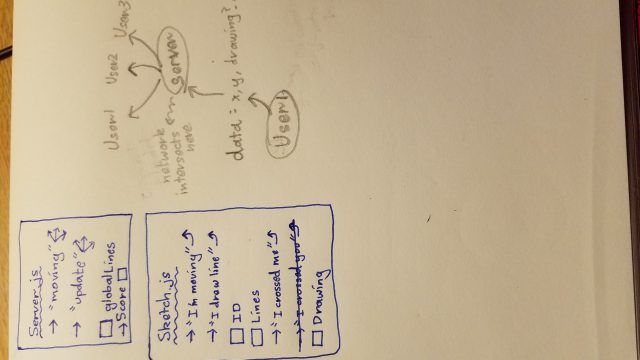

Unfortunately, I was unable to get the application to work. I attempted to use glitch.js to let multiple users interact with the same scene, and p2 as the physics engine. Having trouble with the interactivity and physics, I attempted to simplify the project by having the users stack blocks instead of building bridges. While this simplified the interactivity and graphics a little bit, it still involved figuring out how to use glitch and p2 together. Because glitch favors server-side code, the p2 physics engine has to run on the server rather than in the browser, and I was unable to get it to work properly there. To get this to work, I would have had to take the mouse data from each user in the browser and send it to the server, which would then create the rigid bodies for the lines, calculate their interactions, and send all of that data back to the browser. In addition to being unable to get the code to compile with the p2 library, I was unable to figure out how to code the transfer of all this data back and forth.
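The round trip described above (mouse data in, rigid bodies and simulation on the server, state back out) might look roughly like the sketch below. It makes several assumptions: the `ClientMessage` and `BodyState` shapes and the `ServerWorld` class are hypothetical, the network transport is left out entirely, and the `step` method is a deliberately crude stand-in (straight-line falling onto flat ground, with screen-style coordinates where y grows downward) for what p2 would actually compute.

```typescript
// Messages a browser would send to the server (hypothetical shapes).
type ClientMessage =
  | { kind: "release"; userId: string; x: number; y: number }
  | { kind: "logoff"; userId: string };

// Per-body state the server would send back to every browser.
interface BodyState {
  owner: string;
  x: number;
  y: number;  // grows downward, like screen coordinates
  vy: number; // vertical velocity
}

class ServerWorld {
  private bodies: BodyState[] = [];
  private groundY: number;
  private gravity: number;

  constructor(groundY: number, gravity: number) {
    this.groundY = groundY;
    this.gravity = gravity;
  }

  // Apply one message received from a browser.
  handle(msg: ClientMessage): void {
    if (msg.kind === "release") {
      // Mouse released: create a body at the mouse position.
      this.bodies.push({ owner: msg.userId, x: msg.x, y: msg.y, vy: 0 });
    } else {
      // User logged off: remove everything that user placed.
      this.bodies = this.bodies.filter((b) => b.owner !== msg.userId);
    }
  }

  // Placeholder physics: bodies fall until they reach the ground.
  // A real engine would replace this and resolve body-to-body collisions.
  step(dt: number): void {
    for (const b of this.bodies) {
      b.vy += this.gravity * dt;
      b.y = Math.min(b.y + b.vy * dt, this.groundY);
      if (b.y === this.groundY) {
        b.vy = 0;
      }
    }
  }

  // Copy of the current state, ready to broadcast to all browsers.
  snapshot(): BodyState[] {
    return this.bodies.map((b) => ({ ...b }));
  }
}
```

In this arrangement the browsers only send input and draw whatever `snapshot` returns, so every user sees the same scene. A real version would call `step` on a timer and broadcast each snapshot over whatever connection the server keeps open to the browsers.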

Although it does not compile, I have attached the app: