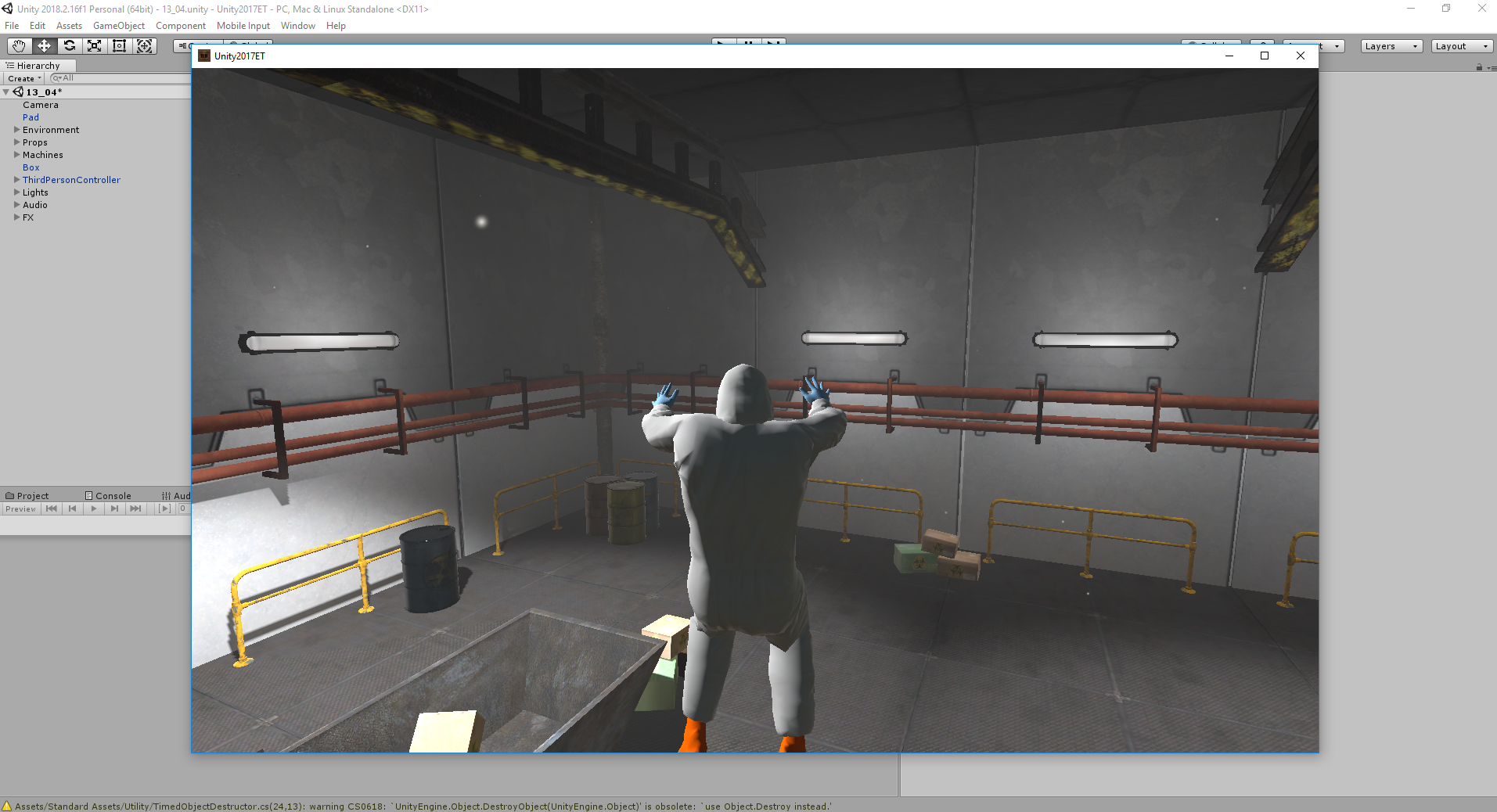

//starter code for loading BVH from https://github.com/mrdoob/three.js/blob/master/examples/webgl_loader_bvh.html

var clock = new THREE.Clock();

var camera, controls, scene, renderer;

var mixer, skeletonHelper, boneContainer;

var head, lhand, rhand, torso, rfoot, lfoot;

var miscParts = [];

var limbs = [

new THREE.BoxGeometry( 10, 10, 10 ),

new THREE.SphereGeometry( 10, 20, 15 ),

new THREE.ConeGeometry( 10, 10, 30 ),

new THREE.CylinderGeometry( 10, 10, 10, 5 ),

new THREE.TorusGeometry( 7, 3, 10, 30 ),

new THREE.TorusKnotGeometry( 7, 3, 10, 30 ),

new THREE.DodecahedronGeometry(7)

]

var bodies = [

new THREE.BoxGeometry( 30, 60, 30 ),

new THREE.SphereGeometry( 30, 20, 15 ),

new THREE.ConeGeometry( 30, 60, 30 ),

new THREE.CylinderGeometry( 20, 30, 50, 5 ),

new THREE.TorusGeometry( 20, 10, 10, 30 ),

new THREE.TorusKnotGeometry( 20, 10, 10, 30 ),

new THREE.DodecahedronGeometry(20)

]

var colorArray = [new THREE.Color(0xffaaff), new THREE.Color(0xffaaff), new THREE.Color(0xffaaff), new THREE.Color(0xffaaff), new THREE.Color(0xffaaff)];

var uniforms = {u_resolution: {type: "v2", value: new THREE.Vector2()},

u_colors: {type: "v3v", value: colorArray}};

var materials = [

new THREE.ShaderMaterial( {

uniforms: uniforms,

vertexShader: document.getElementById("vertex").textContent,

fragmentShader: document.getElementById("stripeFragment").textContent,

}),

new THREE.ShaderMaterial( {

uniforms: uniforms,

vertexShader: document.getElementById("vertex").textContent,

fragmentShader: document.getElementById("gradientFragment").textContent,

}),

new THREE.ShaderMaterial( {

uniforms: uniforms,

vertexShader: document.getElementById("vertex").textContent,

fragmentShader: document.getElementById("plainFragment").textContent

}),

new THREE.ShaderMaterial( {

uniforms: uniforms,

vertexShader: document.getElementById("vertex").textContent,

fragmentShader: document.getElementById("plainFragment").textContent,

wireframe: true

})

]

init();

animate();

var bvhs = ["02_04", "02_05", "02_06", "02_07", "02_08", "02_09", "02_10", "pirouette"]

uniforms.u_resolution.value.x = renderer.domElement.width;

uniforms.u_resolution.value.y = renderer.domElement.height;

var loader = new THREE.BVHLoader();

loader.load( "models/" + random(bvhs) + ".bvh", createSkeleton);

function createSkeleton(result){

skeletonHelper = new THREE.SkeletonHelper( result.skeleton.bones[ 0 ] );

skeletonHelper.skeleton = result.skeleton; // allow animation mixer to bind to SkeletonHelper directly

boneContainer = new THREE.Group();

boneContainer.add( result.skeleton.bones[ 0 ] );

var geometry = new THREE.BoxGeometry( 10, 10, 10 );

head = new THREE.Mesh( random(limbs), materials[0] );

lhand = new THREE.Mesh( random(limbs), materials[0] );

rhand = new THREE.Mesh( random(limbs), materials[0] );

lfoot = new THREE.Mesh( random(limbs), materials[0] );

rfoot = new THREE.Mesh( random(limbs), materials[0] );

torso = new THREE.Mesh( random(bodies), materials[0] );

// torso.scale.set(Math.random() * 1.5, Math.random() * 1.5, Math.random() * 1.5);

skeletonHelper.skeleton.bones[4].add(head);

skeletonHelper.skeleton.bones[12].add(rhand);

skeletonHelper.skeleton.bones[31].add(lhand);

skeletonHelper.skeleton.bones[50].add(rfoot);

skeletonHelper.skeleton.bones[55].add(lfoot);

skeletonHelper.skeleton.bones[1].add(torso);

for(var i=9; i<14; i++){

var part = new THREE.Mesh( new THREE.BoxGeometry( Math.random() * 10, Math.random() * 5, Math.random() * 5 ), materials[0] );

miscParts.push(part);

skeletonHelper.skeleton.bones[i].add(part);

}

for(var i=28; i<31; i++) {

var part = new THREE.Mesh( new THREE.BoxGeometry( Math.random() * 10, Math.random() * 5, Math.random() * 5 ), materials[0] );

miscParts.push(part);

skeletonHelper.skeleton.bones[i].add(part);

}

for(var i=47; i<56; i++) {

var part = new THREE.Mesh( new THREE.BoxGeometry( Math.random() * 10, Math.random() * 5, Math.random() * 5 ), materials[0] );

miscParts.push(part);

skeletonHelper.skeleton.bones[i].add(part);

}

scene.add( skeletonHelper );

scene.add( boneContainer );

skeletonHelper.material = new THREE.MeshBasicMaterial({

color: "white",

transparent: true, // boolean, not the string "true"

opacity: 0.0}); // number, not the string "0.0"; hides the helper lines

mixer = new THREE.AnimationMixer( skeletonHelper );

mixer.clipAction( result.clip ).setEffectiveWeight( 1.0 ).play();

changeName();

}

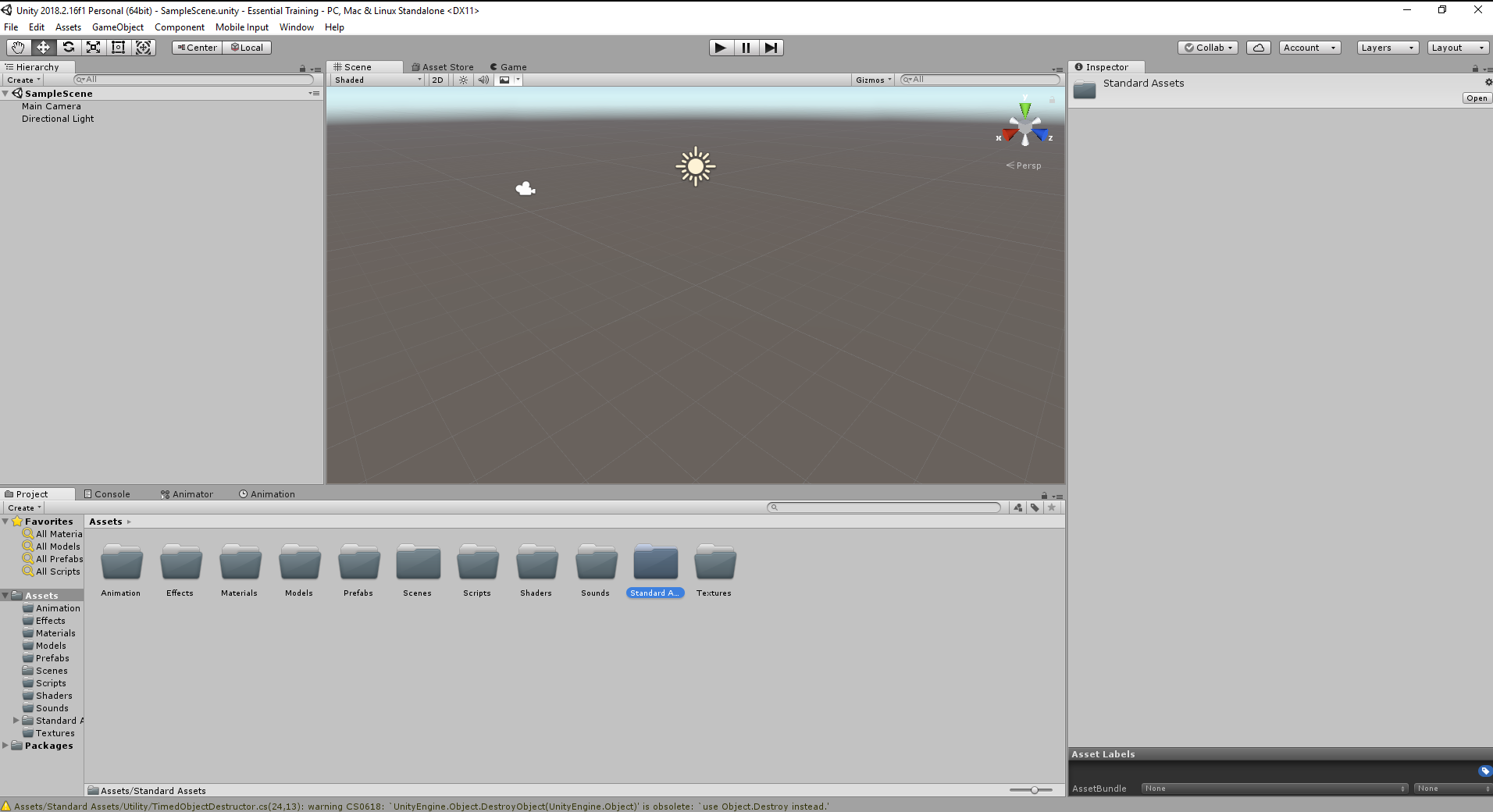

function init() {

camera = new THREE.PerspectiveCamera( 60, window.innerWidth / window.innerHeight, .1, 1000 );

camera.position.set( 0, 200, 400 );

scene = new THREE.Scene();

scene.add( new THREE.GridHelper( 400, 10 ) );

scene.background = new THREE.Color(0xdddddd);

renderer = new THREE.WebGLRenderer( { antialias: true } );

renderer.setPixelRatio( window.devicePixelRatio );

renderer.setSize( window.innerWidth, window.innerHeight );

document.body.appendChild( renderer.domElement );

controls = new THREE.OrbitControls( camera, renderer.domElement );

controls.minDistance = 300;

controls.maxDistance = 700;

window.addEventListener( 'resize', onWindowResize, false );

}

function onWindowResize() {

camera.aspect = window.innerWidth / window.innerHeight;

camera.updateProjectionMatrix();

renderer.setSize( window.innerWidth, window.innerHeight );

}

function animate() {

requestAnimationFrame( animate );

var delta = clock.getDelta();

if ( mixer ) mixer.update( delta );

renderer.render( scene, camera );

}

function changeBody(seed) {

console.log(seed);

Math.seedrandom(seed);

color = random(colors);

for(var i = 0; i < 5; i++){

colorArray[i] = new THREE.Color(color[i]);

}

for(var i = 0; i < materials.length; i++){

materials[i].clone(); // result is discarded; I don't know why, but it's the only way to make the randomness match class.html

}

lhand.geometry = random(limbs);

lhand.material = random(materials);

rhand.geometry = random(limbs);

rhand.material = random(materials);

torso.geometry = random(bodies);

torso.material = random(materials);

lfoot.geometry = random(limbs);

lfoot.material = random(materials);

rfoot.geometry = random(limbs);

rfoot.material = random(materials);

head.geometry = random(limbs);

head.material = random(materials);

head.scale.set(2, 2,2);

for(var i=0; i<miscParts.length; i++){

miscParts[i].geometry = new THREE.BoxGeometry( Math.random() * 15, Math.random() * 15, Math.random() * 10 );

miscParts[i].material = random(materials);

}

}

function changeName() {

changeBody(document.getElementById("nameInput").value);

}

function random(arr) {

return arr[Math.floor(Math.random() * arr.length)];

}

function changeBvh(){

scene.remove(skeletonHelper);

scene.remove(boneContainer);

loader.load( "models/"+ random(bvhs) +".bvh", createSkeleton);

}
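
The `changeBody` function above seeds the random generator with the entered name, so the same name should always produce the same figure. Below is a minimal sketch of that idea on its own, without three.js. It assumes a small stand-in generator (`hashString` plus a mulberry32-style `makeRandom`) in place of the seedrandom library, and the names `pick` and `describeBody` and the shape and shader labels are hypothetical, chosen only for illustration.

```javascript
// Hypothetical helper: hash a string into a 32-bit seed (FNV-1a).
// The sketch above uses Math.seedrandom(seed) instead; this is a stand-in.
function hashString(str) {
  let h = 2166136261;
  for (let i = 0; i < str.length; i++) {
    h ^= str.charCodeAt(i);
    h = Math.imul(h, 16777619);
  }
  return h >>> 0;
}

// Hypothetical helper: mulberry32-style PRNG returning floats in [0, 1).
function makeRandom(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), a | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Same shape as random(arr) above, but with an explicit generator.
function pick(arr, rand) {
  return arr[Math.floor(rand() * arr.length)];
}

// Choose a shape and shader for each body part from a name.
// Labels are illustrative placeholders for the geometries and materials above.
function describeBody(name) {
  const rand = makeRandom(hashString(name));
  const shapes = ["box", "sphere", "cone", "cylinder", "torus", "torusKnot", "dodecahedron"];
  const shaders = ["stripe", "gradient", "plain", "wireframe"];
  const parts = ["lhand", "rhand", "torso", "lfoot", "rfoot", "head"];
  const body = {};
  for (const part of parts) {
    body[part] = { shape: pick(shapes, rand), shader: pick(shaders, rand) };
  }
  return body;
}

console.log(describeBody("ada"));
```

Because every choice draws from the same seeded sequence, the number and order of draws matters: adding or removing a single random call before a pick shifts every pick after it, which may be why the otherwise unused `clone()` call is needed to stay in sync with class.html.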

Daniel Rozin has created many mechanical "mirrors" using video cameras, motion sensors, and motors to display people's reflections. I had seen the popular
