This set of Deliverables (#5) has three parts, with different deadlines.

- Looking Outwards #03 (Due 10/5)

- Browsing the Three.js Examples (Due 10/5, but with no deliverable)

- The Augmented Body (Due 10/12)

1. Looking Outwards #03 (Due 10/5)

For this Looking Outwards report (as previously discussed), you are asked to identify and discuss a project which presents a specific form of interactivity that you find interesting. Full details for this deliverable can be found here.

2. Browsing the Three.js Examples (Due 10/5)

There are many different creative coding toolkits -- far more than can be learned in a single semester. Still, it's extremely helpful to be aware of what's out there. For this task, you are asked to spend 15 minutes browsing the example projects for Three.js, a code library by Ricardo Cabello which is widely used around the world for 'spectacular' 3D graphics in the browser. I recommend you check out at least a dozen examples, preferably more. There is no deliverable, but please complete this Part by October 5.

3. The Augmented Body (Due 10/12)

For this project, you are asked to write software which creatively interprets (or responds to) the actions of the body, as observed by a camera.

You are asked to develop a computational treatment for motion-capture data captured from a person's body. You may create an ANIMATION (by using pre-recorded motion capture data), or you may create an INTERACTION (by using real-time motion capture information). Template code is provided for both scenarios, below.

Ten Creative Opportunities

The following ten suggestions, which are by no means comprehensive, are intended to prompt you to appreciate the breadth of the conceptual space you may explore. In all cases, be prepared to justify your decisions.

- You may work in real-time (interactive), or off-line (animation). You may choose to develop a piece of interactive real-time software (as in Setsuyakurotaki, by Zach Lieberman + Rhizomatiks, shown below). Or you may choose to develop a piece of custom animation software, which interprets the motion capture file as an input to a rendering process (as in Universal Everything's Walking City).

- You may use more than one body. Your software doesn't have to be limited to just one body. Instead, it could visualize the relationship (or create a relationship) between two or more bodies (as in Scott Snibbe's Boundary Functions or this sketch by Zach Lieberman). It could visualize or respond to a duet, trio or even a crowd of people. It could visualize the interactions of multiple people's bodies, even across the network. (For example, one of Char's templates transmits shared skeletons, using PoseNet in a networked Glitch application.)

- You may focus on just part of the body. Your software doesn't need to respond to the entire body; it could focus on interpreting the movements of a single part of the body (as in Theo Watson & Emily Gobeille's prototype for Puppet Parade, which responds to a single arm). Some of the provided templates (PoseNet, BVH) provide information about the full body, while others (FaceOSC, clmTracker) provide information about the face.

- You may focus on how an environment is affected by a body. Your software doesn't have to re-skin or visualize the body. Instead, you can develop an environment that is affected by the movements of the body (as in Theo & Emily's Weather Worlds).

- You may position your 'camera' anywhere -- including a first-person POV, or with a (user-driven) VR POV. Just because your performance was recorded from a sensor "in front" of you, this does not mean your mocap data must be viewed from the same point of view. Consider displaying your figure in the round, from above, below, or even from the POV of the body itself. (Check out the camera() function in Processing, or the PerspectiveCamera object in three.js, for more ideas.)

- You may work in 3D or 2D. Although BVH mocap data represents three-dimensional coordinates, you don't have to make a 3D scene; for example, you could use your data to control an assemblage of 2D shapes. You could even use your body to control two-dimensional typography. (Helpful Processing commands like screenX() and screenY(), or the Vector3.project() method in three.js, allow you to easily compute the 2D screen coordinates of perspective-projected 3D points.)

- You may control the behavior of something non-human. Just because your data was captured from a human, doesn't mean you must control a human. Consider using your data to puppeteer an animal, monster, plant, or even a non-living object (as in this research on "animating non-humanoid characters with human motion data" from Disney Research).

- You may record motion capture data yourself, or you can use data from an online source. If you're recording the data yourself, feel free to record a friend who is a performer -- perhaps a musician, actor, or athlete. Alternatively, feel free to use data from an online archive or commercial vendor, such as mocapdata.com, ACCAD, CMU Graphics Lab, etc.

- You may make software which is analytic or expressive. You are asked to make a piece of software which interprets the actions of the human body. While some of your peers may choose to develop a character animation or interactive software mirror, you might instead elect to create "information visualization" software that presents an ergonometric analysis of the body's joints over time. Your software could present comparisons of different people making similar movements, or could track the accelerations of movements by a violinist. You could create quantitative comparisons of people's different gaits.

- You may use sound. Feel free to play sound which is synchronized with your motion capture files. This might be the performer's speech, or music to which they are dancing, etc. (Check out the Processing Sound Library or p5.js Sound Library.)
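For the analytic option above, the basic measurements are simple vector arithmetic. The following is a minimal sketch, not part of the provided templates: the function names (`jointAngle`, `speeds`, `accelerations`) are hypothetical, and it assumes each joint position is a plain `{x, y, z}` object sampled at a constant frame interval.

```javascript
// Hypothetical analysis helpers (not from the course templates).
// Assumption: every position is a plain object {x, y, z}.

// Angle in degrees at joint b, between the segments b->a and b->c
// (for example: shoulder, elbow, wrist gives the elbow angle).
function jointAngle(a, b, c) {
  const u = { x: a.x - b.x, y: a.y - b.y, z: a.z - b.z };
  const v = { x: c.x - b.x, y: c.y - b.y, z: c.z - b.z };
  const lenU = Math.hypot(u.x, u.y, u.z);
  const lenV = Math.hypot(v.x, v.y, v.z);
  if (lenU === 0 || lenV === 0) return NaN; // degenerate: joints coincide
  const dot = u.x * v.x + u.y * v.y + u.z * v.z;
  // Clamp to [-1, 1] so rounding error cannot push acos out of range.
  const cosine = Math.min(1, Math.max(-1, dot / (lenU * lenV)));
  return (Math.acos(cosine) * 180) / Math.PI;
}

// Speed of one joint between consecutive frames (first difference).
// frameTime is the time between frames, in seconds.
function speeds(positions, frameTime) {
  const out = [];
  for (let i = 1; i < positions.length; i++) {
    const p = positions[i - 1];
    const q = positions[i];
    out.push(Math.hypot(q.x - p.x, q.y - p.y, q.z - p.z) / frameTime);
  }
  return out;
}

// Magnitude of acceleration at each interior frame (second difference).
function accelerations(positions, frameTime) {
  const out = [];
  for (let i = 1; i < positions.length - 1; i++) {
    const p = positions[i - 1];
    const q = positions[i];
    const r = positions[i + 1];
    const ax = r.x - 2 * q.x + p.x;
    const ay = r.y - 2 * q.y + p.y;
    const az = r.z - 2 * q.z + p.z;
    out.push(Math.hypot(ax, ay, az) / (frameTime * frameTime));
  }
  return out;
}
```

Finite differences amplify sensor noise, so it is usually worth smoothing the positions before computing accelerations from them.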

Technical Options & Resources

Template Code for Offline Mocap

Code templates for loading and displaying motion capture files in the BVH format have been provided for you in three different programming environments: Processing (Java), openFrameworks v.0.98 (C++), and three.js (a JavaScript library for high-quality OpenGL 3D graphics in the browser). You can find these templates collected together here: https://github.com/CreativeInquiry/BVH-Examples
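To get a feel for what those templates are reading, here is a minimal sketch of a BVH parser in plain JavaScript. It is not the code used in the repository above; `parseBVH` and the `_End` naming for end sites are hypothetical, and the sketch assumes the common layout in which each keyword and each brace sits on its own line. It extracts the joint hierarchy and the raw per-frame channel values, but does not compute world-space joint positions, which requires composing each joint's rotations in its channel order.

```javascript
// Hypothetical helper: parseBVH is NOT part of the course templates.
// Assumes one keyword (or one brace) per line, as most exporters write.
function parseBVH(text) {
  const lines = text
    .split(/\r?\n/)
    .map((l) => l.trim())
    .filter((l) => l.length > 0);
  const joints = []; // every joint, in file order
  const stack = []; // joints whose "{" has not been closed yet
  let pending = null; // joint just declared, waiting for its "{"
  let i = 0;

  // HIERARCHY section: read until the MOTION keyword.
  for (; i < lines.length && lines[i] !== 'MOTION'; i++) {
    const tok = lines[i].split(/\s+/);
    const parent = stack.length > 0 ? stack[stack.length - 1] : null;
    const parentName = parent ? parent.name : null;
    if (tok[0] === 'ROOT' || tok[0] === 'JOINT') {
      pending = { name: tok[1], parent: parentName, offset: [0, 0, 0], channels: [] };
      joints.push(pending);
    } else if (tok[0] === 'End') {
      // "End Site" carries no name in the file; this label is made up here.
      pending = { name: (parentName || 'Root') + '_End', parent: parentName, offset: [0, 0, 0], channels: [] };
      joints.push(pending);
    } else if (tok[0] === '{') {
      stack.push(pending);
    } else if (tok[0] === '}') {
      stack.pop();
    } else if (tok[0] === 'OFFSET' && parent) {
      parent.offset = tok.slice(1, 4).map(Number);
    } else if (tok[0] === 'CHANNELS' && parent) {
      parent.channels = tok.slice(2); // skip the keyword and the count
    }
  }
  if (i >= lines.length) throw new Error('No MOTION section found');

  // MOTION section: "Frames: N", "Frame Time: t", then N rows of numbers.
  const frameCount = parseInt(lines[i + 1].split(':')[1], 10);
  const frameTime = parseFloat(lines[i + 2].split(':')[1]);
  const frames = lines
    .slice(i + 3, i + 3 + frameCount)
    .map((l) => l.split(/\s+/).map(Number));
  return { joints, frameCount, frameTime, frames };
}
```

Each row in `frames` lists channel values in joint order, so the values for a given joint start at the index obtained by summing the `channels.length` of all joints that precede it.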

We have purchased a copy of Brekel Pro Body v2 for you to use to record motion capture files, and we have installed it on a PC ("Veggie Dumpling") in the STUDIO; it can record Kinect v2 motion capture data into BVH and other formats. Make an arrangement with Golan or Char to make a recording. If you use pre-recorded mocap data, then ideally, the visual treatment should be designed for specific motion-capture data, and the motion-capture data should be intentionally selected (or performed) for your specific visual treatment.

As an alternative, you can develop a creative software augmentation to a pre-recorded video, using this template combining p5.js, ml5.js, and PoseNet. An example is shown below.

Processing BVH player template:

p5.js with ml5.js PoseNet on pre-recorded video, template code. (zipped template here.)

To run this, start a local server with Python 3, using the terminal commands below; then open the URL, http://0.0.0.0:8000/ (or http://localhost:8000/ if that address does not load in your browser).

> cd PoseNet_video

> python -m http.server

For Python 2.7, use this command instead:

> cd PoseNet_video

> python -m SimpleHTTPServer 8000

(If those commands don't work, see this web page for more options.)

Template Code for Realtime Mocap

- ml5.js with PoseNet + WebCam

- ml5.js with PoseNet + WebCam at Editor.p5js.org

- ml5.js with PoseNet + WebCam at Glitch

- ml5.js with PoseNet + WebCam + Networking at Glitch

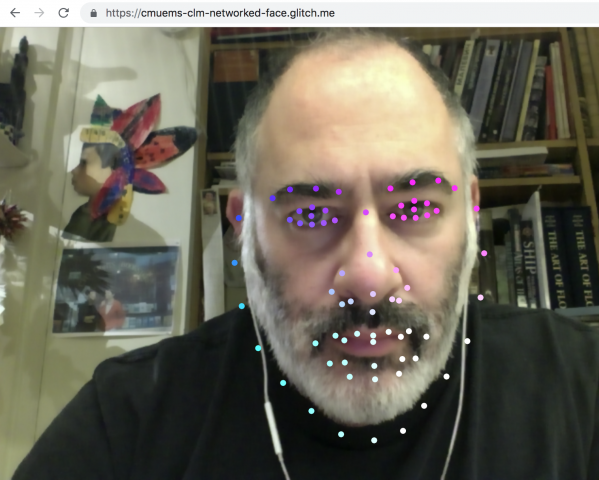

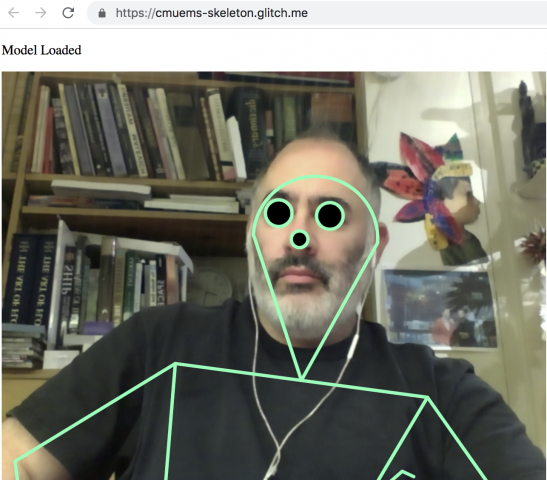

- p5.js with clmTracker at Glitch

- p5.js with clmTracker at Editor.p5js.org

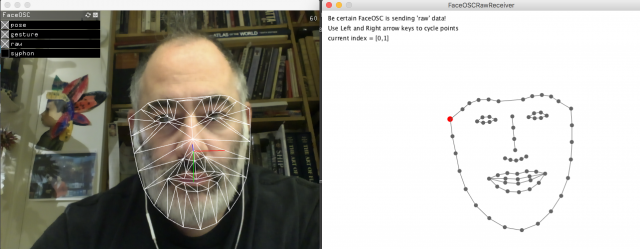

- Processing with FaceOSC
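Real-time trackers such as PoseNet tend to produce jittery points, and low-confidence keypoints can jump around the frame. The following is a minimal sketch of one common remedy, exponential smoothing with a confidence threshold. It is not taken from the templates above: `smoothPose` is a hypothetical helper, and it assumes keypoints shaped like `{ part, score, position: { x, y } }`, which is how ml5.js PoseNet results are commonly structured; check the objects your template actually logs before relying on these field names.

```javascript
// Hypothetical helper (not part of the templates).
// Assumption: each keypoint looks like
//   { part: 'nose', score: 0.9, position: { x: 100, y: 50 } }
//
// previous : object mapping part name -> last smoothed {x, y}
// keypoints: array of keypoints for the current video frame
// alpha    : 0..1, higher follows the raw data more closely
// minScore : keypoints below this confidence are ignored
function smoothPose(previous, keypoints, alpha = 0.3, minScore = 0.2) {
  const next = { ...previous }; // untouched parts keep their old position
  for (const kp of keypoints) {
    if (kp.score < minScore) continue; // keep the last trusted position
    const old = previous[kp.part];
    next[kp.part] = old
      ? {
          // Move a fraction alpha of the way toward the new reading.
          x: old.x + alpha * (kp.position.x - old.x),
          y: old.y + alpha * (kp.position.y - old.y),
        }
      : { x: kp.position.x, y: kp.position.y }; // first sighting: no history
  }
  return next;
}
```

In a sketch, you would keep one `smoothed` object per tracked person, update it with `smoothed = smoothPose(smoothed, keypoints)` each time new pose results arrive, and draw from `smoothed` rather than from the raw keypoints. Lower `alpha` values give steadier but laggier motion.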

Processing with FaceOSC template:

p5.js with Glitch + clmTracker template:

p5.js with Glitch + ml5.js PoseNet webcam template:

Summary of Deliverables

Here is the checklist of expected components for this assignment.

- Sketch first! Draw some ideas. Study people moving.

- Develop a program that creatively interprets, or responds to, a human body.

- Create a blog post on this site to document your project and contain the media below.

- Title your blog post, nickname-Body, and give your blog post the WordPress Category, 05-Body.

- Write a narrative of 150-200 words describing your development process, and evaluating your results. Discuss the relationship between your specific motion file, and your treatment. Which came first?

- Embed a screen-grabbed video of your software running.

- Embed an animated GIF (or two) of your software.

- Embed a still image of your software.

- Embed some photos or scans of your notebook sketches.

- Embed your code attractively in the post.

- Test your blog post to make sure that all of the above embedded media appear correctly.

Remember, if you're having a problem, ask for help!

Some more fun things to consider:

Face Pinball: Use your face to play our new pinball-inspired game!

-- Nexus Studios (@nexusstories) July 30, 2018