Assignment 07

DUE: TUESDAY OCTOBER 15

This assignment has 2 parts:

- A simulation of a living creature or ecosystem;

- An augmented projection in which a simulation relates to a real place.

Assignment-07-Life

Software makes possible the simulation, rather than mere representation, of the world around us. In this project you will model a creature or organic system by developing an interplay of simulated forces.

In this assignment, you will use simulation to create a creature or system of creatures.

- For example, you might make a swarm of bees that respond to the cursor and seek flowers.

- Or, you might make a blobby octopus that uses spring forces to create stretchy, bouncy tentacles.

- Or, you might model a spider’s web as a mesh of connected springs, subject to forces of gravity, wind, and the jiggling of insects that get stuck to it.
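The stretchy tentacles and web strands above are usually built from Hooke's-law springs. The sketch below is a minimal, library-free illustration in plain Java (Processing's Java mode runs the same logic). A single mass hangs from a fixed anchor. Gravity pulls it down and a damped spring pulls it back, and the motion is advanced with semi-implicit Euler steps. The class names, constants, and time step are illustrative assumptions, not values from any library.

```java
class SpringDemo {
    public static void main(String[] args) {
        // Rest length 100, stiffness 2, 2% damping per step, mass 1,
        // starting at rest length (so gravity stretches it from there).
        Spring1D s = new Spring1D(100, 2.0, 0.02, 1.0, 100);
        for (int i = 0; i < 2000; i++) {
            s.step(9.8, 0.1);
        }
        // Damping lets the bob settle where the spring balances gravity:
        // k * stretch = m * g, so stretch = 9.8 / 2.0 = 4.9 (y near 104.9).
        System.out.printf("settled at y = %.2f%n", s.y);
    }
}

// A single damped spring: anchor fixed at y = 0, bob hanging below it.
// Units are arbitrary; y grows downward, as in Processing's screen space.
class Spring1D {
    double restLength; // length at which the spring exerts no force
    double k;          // stiffness (Hooke's constant)
    double damping;    // fraction of velocity removed each step
    double mass;
    double y;          // bob position (distance below the anchor)
    double vy;         // bob velocity

    Spring1D(double restLength, double k, double damping, double mass, double y) {
        this.restLength = restLength;
        this.k = k;
        this.damping = damping;
        this.mass = mass;
        this.y = y;
    }

    // Advance the simulation by dt using semi-implicit Euler integration.
    void step(double gravity, double dt) {
        double stretch = y - restLength;        // positive when stretched
        double springForce = -k * stretch;      // Hooke's law: F = -k * x
        double force = springForce + mass * gravity;
        double ay = force / mass;               // Newton: a = F / m
        vy = (vy + ay * dt) * (1.0 - damping);  // update velocity first...
        y += vy * dt;                           // ...then position
    }
}
```

A tentacle or web is many of these masses chained together, with each spring pulling on the two masses at its ends.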

For inspiration, have a look at some of these creatures:

- A-Volve (Christa Sommerer & Laurent Mignonneau)

- Nokia Friends (Toxi + Universal Everything)

- Processing Monsters (Lukas Vojir et al.)

- Singlecell.org and Doublecell.org (Various)

- Creatures (Lee Byron, CMU ’08)

- A Confidence of Vertices (Brandon Morse)

- Sniff interactive dog (Karolina Sobecka & Jim George)

- Evolved Virtual Creatures (Karl Sims, 1994)

- Steering Behaviors (Craig Reynolds)

- Birds! (Robert Hodgin / Flight404)
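Reynolds' steering behaviors and the bee swarm suggested earlier rest on one small idea. An agent computes a desired velocity toward a target, then steers by the difference between that and its current velocity, capped at a maximum force. Below is a minimal plain-Java sketch of Reynolds' "seek" behavior, in the form popularized by Shiffman's Nature of Code. The class names, speeds, and force limit are illustrative assumptions, and the agent is treated as having unit mass with one time step per call. In a Processing sketch, the target could be the cursor (`mouseX`, `mouseY`) or a flower's position.

```java
class SeekDemo {
    public static void main(String[] args) {
        Seeker bee = new Seeker(0, 0, 4.0, 0.2);
        Vec flower = new Vec(100, 50);
        for (int frame = 0; frame < 60; frame++) {
            bee.seek(flower);
        }
        System.out.printf("bee at (%.1f, %.1f)%n", bee.position.x, bee.position.y);
    }
}

// Minimal immutable 2D vector.
class Vec {
    final double x, y;
    Vec(double x, double y) { this.x = x; this.y = y; }
    double mag() { return Math.hypot(x, y); }
    // Return a copy scaled down to length max if it is longer than max.
    Vec limit(double max) {
        double m = mag();
        return (m > max) ? new Vec(x / m * max, y / m * max) : new Vec(x, y);
    }
}

// An agent that steers toward a target (Reynolds-style seek).
class Seeker {
    Vec position, velocity;
    final double maxSpeed, maxForce;

    Seeker(double x, double y, double maxSpeed, double maxForce) {
        position = new Vec(x, y);
        velocity = new Vec(0, 0);
        this.maxSpeed = maxSpeed;
        this.maxForce = maxForce;
    }

    void seek(Vec target) {
        double dx = target.x - position.x;
        double dy = target.y - position.y;
        double d = Math.hypot(dx, dy);
        if (d == 0) return; // already there; no direction to steer in
        // Desired velocity: full speed straight at the target.
        Vec desired = new Vec(dx / d * maxSpeed, dy / d * maxSpeed);
        // Steering force = desired - current velocity, capped at maxForce.
        Vec steer = new Vec(desired.x - velocity.x, desired.y - velocity.y).limit(maxForce);
        // Unit mass: the force is applied directly as acceleration.
        velocity = new Vec(velocity.x + steer.x, velocity.y + steer.y).limit(maxSpeed);
        position = new Vec(position.x + velocity.x, position.y + velocity.y);
    }
}
```

Because the steering force is capped, the agent accelerates gradually and curves toward a moving target instead of snapping onto it. That lag is what makes it read as a creature.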

For this project you are asked to create a creature, living thing, or ecology that generates form and/or behavior algorithmically and, ideally (this is strongly encouraged), through some form of simulation. Your job, Dr. Frankenstein, is to create new life: an interactive and sensate creature, a dynamic flock or swarm, an artificial cell culture, a novel plant, etc.

Please consider the potential for your software to operate as a cultural artifact. Could it attain special relevance by generating things that address a real human need or interest?

Now, for this assignment:

- Sketch first.

- Create a system as described. (Optionally: Does it relate to the cursor? Does it respond to keypresses?)

- Using ScreenFlick or other screen-capturing software, record 20-60 seconds of video documenting your project. Upload this to YouTube.

- If your project doesn’t require any special libraries, upload it to OpenProcessing.

- In a blog post, embed your YouTube video.

- Also in the blog post, embed your OpenProcessing applet.

- Also, in the blog post, include some scans of your sketches, and a 100-word discussion of your project.

- Label your blog post with the category Assignment-07-Life.

Assignment-07-AugmentedProjection

In this assignment, your challenge is to create an augmented projection that relates to a specific location.

First, please familiarize yourself with contemporary idiomatic uses of augmented projections, such as the examples below. Keep in mind that some of these are sophisticated projects made by very experienced practitioners. You have to start somewhere.

- Pablo Valbuena, Augmented Sculpture series (an early example)

- Christopher Baker, Architectural Integration Tests (nice explanation)

- Motionographer, Building Projection Roundup

- YesYesNo, Night Lights

- Todo, Artificial Dummies

- Julapy, Pachinko at Sydney Festival

- Benjamin Gaulon (Recyclism), DePong

- Sh.Pixel, Physical Projection Project

- Andreas Gysin + Sidi Vanetti, Piastrelle, Barre, Casse

- Hellicar & Lewis, Hello Wall

- Michael Guidetti, augmented paintings

- Re (Projector self-projection)

- Eric J. Forman, Perceptio Lucis

- See also works by Krzysztof Wodiczko

- Bradley G. Munkowitz (GMUNK) et al., Box

- AntiVJ, Omicron

In your mind, contrast the simple and clever approach of Gysin+Vanetti’s Casse with the high-budget, visual-effects-heavy approach of AntiVJ’s Omicron.

Conceptual Overview of this Assignment

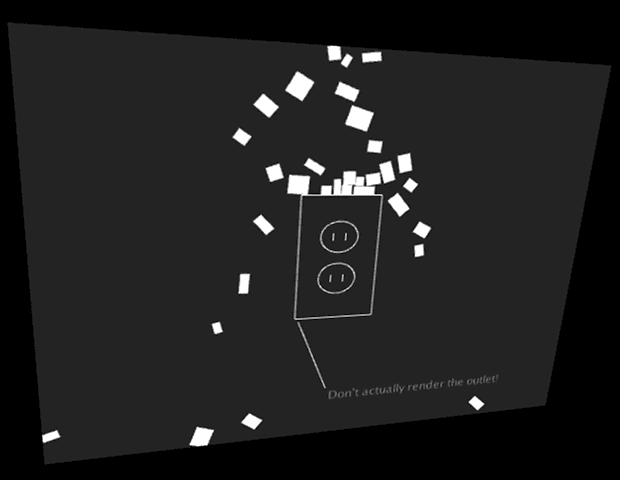

You will make a small poetic gesture projected on a wall. Look around and find a wall with some modest features that catch your eye, such as a power outlet, doorknob, water spigot, or elevator buttons. Sketch some poetic ideas that could relate to those features. Now simplify your idea. Now simplify it again.

A note about the scale of this project.

You are asked to create the equivalent of a rhyming couplet, not an epic opera. Not even a limerick or a haiku. Just get some virtual objects responding to some wall features. Simplify, simplify, simplify.

Technical Overview

Using the Box2D physics library, you will create an augmented projection that relates to a specific location. To ensure good registration, you will projection-map it onto that location with the Keystone library.

Keystone is a video projection mapping library for Processing. It allows you to warp your Processing sketches onto any flat surface by using corner-pin keystoning, regardless of your projector’s position and orientation. In other words, it warps your graphics in order to compensate for the perspectival distortion that occurs when your projector is off-axis. To get started:
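Corner-pin keystoning amounts to a projective transform (a homography). The transform sends the four corners of your offscreen buffer to the four corner points you drag into place during calibration, and it bends everything in between consistently with perspective. The plain-Java sketch below illustrates that math with the standard closed-form square-to-quadrilateral mapping. It is not Keystone's code, and Keystone may compute its warp differently (for example, on a subdivided mesh). The class and method names are hypothetical.

```java
class CornerPinDemo {
    public static void main(String[] args) {
        // Hypothetical calibrated corners (arbitrary units): a trapezoid,
        // listed top-left, top-right, bottom-right, bottom-left.
        CornerPin pin = new CornerPin(0, 0, 4, 0, 3, 2, 1, 2);
        double[] center = pin.map(0.5, 0.5);
        // The buffer's center lands where the quad's diagonals cross, (2, 4/3),
        // not at the average of the four corners, (2, 1).
        System.out.println(center[0] + ", " + center[1]);
    }
}

// Maps normalized coordinates (u, v) in [0,1] x [0,1] onto a quadrilateral:
//   x = (a*u + b*v + c) / (g*u + h*v + 1)
//   y = (d*u + e*v + f) / (g*u + h*v + 1)
class CornerPin {
    final double a, b, c, d, e, f, g, h;

    // Corners are the images of (0,0), (1,0), (1,1), (0,1), in that order.
    CornerPin(double x0, double y0, double x1, double y1,
              double x2, double y2, double x3, double y3) {
        double sx = x0 - x1 + x2 - x3;
        double sy = y0 - y1 + y2 - y3;
        if (sx == 0 && sy == 0) {
            // Parallelogram: the mapping is affine (no perspective terms).
            a = x1 - x0; b = x2 - x1; c = x0;
            d = y1 - y0; e = y2 - y1; f = y0;
            g = 0; h = 0;
        } else {
            double dx1 = x1 - x2, dx2 = x3 - x2;
            double dy1 = y1 - y2, dy2 = y3 - y2;
            double den = dx1 * dy2 - dx2 * dy1;
            g = (sx * dy2 - dx2 * sy) / den;
            h = (dx1 * sy - sx * dy1) / den;
            a = x1 - x0 + g * x1; b = x3 - x0 + h * x3; c = x0;
            d = y1 - y0 + g * y1; e = y3 - y0 + h * y3; f = y0;
        }
    }

    // Map a point given in normalized buffer coordinates.
    double[] map(double u, double v) {
        double w = g * u + h * v + 1;
        return new double[] { (a * u + b * v + c) / w, (d * u + e * v + f) / w };
    }

    // Map a pixel (px, py) of an offscreen buffer that is bufW x bufH pixels.
    double[] mapPixel(double px, double py, double bufW, double bufH) {
        return map(px / bufW, py / bufH);
    }
}
```

The shared denominator varies across the surface, so equal steps in the buffer become unequal steps on the wall. This non-uniform spacing lets a pre-warped image look correct when the projector is off-axis.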

- Download Keystone from here: http://keystonep5.sourceforge.net/

- Install the Keystone library in the libraries folder in your Processing documents directory.

- Run the CornerPin example demo that comes with Keystone. Press ‘c’ to enter calibration mode, etc. (Examine the keyPressed() method in that example for more details.)

Box2D is a 2D rigid-body physics engine: it simulates how 2D shapes move under forces and collide with one another. You will be using the version of that library built for Processing, called PBox2D.

- Download the PBox2D library from here: http://www.shiffman.net/p5/libraries/pbox2d/pbox2d.zip

- Install the PBox2D library in the libraries folder in your Processing documents directory.

- For essential viewing: Browse and run all of the examples that come with PBox2D. Perhaps one of them will suit your concept well. Perhaps one of the examples will inspire an even better idea.

- To actually understand what you are doing, read Chapter 5 of Nature of Code, about Physics Libraries: http://natureofcode.com/book/chapter-5-physics-libraries/

- You will also be greatly helped if you watch this video of Dan Shiffman explaining how to use PBox2D: https://vimeo.com/60601613
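Chapter 5 explains why the example code later in this assignment calls `scalarPixelsToWorld`, `coordPixelsToWorld`, and `getBodyPixelCoord`. PBox2D's world uses its own units with y pointing up, while Processing draws in pixels with y pointing down. That is also why `setGravity(0, -10)` makes things fall. The plain-Java sketch below mimics those conversions under two illustrative assumptions: 10 pixels per world unit, and the world origin at the window's center. These may not match PBox2D's actual defaults, and the `WorldScale` class is hypothetical; its methods are only named after PBox2D's helpers.

```java
class WorldScaleDemo {
    public static void main(String[] args) {
        WorldScale s = new WorldScale(10, 640, 480);
        // A body 1 unit below the origin in world space (negative y)...
        double[] px = s.coordWorldToPixels(0, -1);
        // ...is drawn 10 pixels below the window center: (320, 250).
        System.out.println(px[0] + ", " + px[1]);
    }
}

// Illustrative pixel <-> world conversions in the style of PBox2D's helpers.
// ASSUMPTIONS: a fixed pixels-per-unit scale, world origin at the window
// center, world y pointing up (Processing's screen y points down).
class WorldScale {
    final double pixelsPerUnit;
    final double originX, originY; // pixel position of the world origin

    WorldScale(double pixelsPerUnit, double windowW, double windowH) {
        this.pixelsPerUnit = pixelsPerUnit;
        this.originX = windowW / 2;
        this.originY = windowH / 2;
    }

    // A length (e.g. a half-width) converted from pixels to world units.
    double scalarPixelsToWorld(double px) { return px / pixelsPerUnit; }

    // A point converted from pixel coordinates to world coordinates.
    double[] coordPixelsToWorld(double px, double py) {
        return new double[] {
            (px - originX) / pixelsPerUnit,
            (originY - py) / pixelsPerUnit // flip y: down the screen = lower world y
        };
    }

    // The inverse mapping, used when drawing a body on screen.
    double[] coordWorldToPixels(double wx, double wy) {
        return new double[] {
            originX + wx * pixelsPerUnit,
            originY - wy * pixelsPerUnit
        };
    }
}
```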

Now, to execute the project:

- Find a location with some features to project on or around. Boring is better!

- Measure the wall features to obtain their accurate proportions and relative sizes. For example, I measured a wall outlet to be 70 × 115 mm. When I rendered it using graphics, I made a rectangle that was 70 × 115 pixels. Get it?

- Design a simulation that relates to the wall features.

- Borrow a projector, an extension cord, and (if necessary) a magic arm with which to mount the projector. Also, make sure you have a video camera of some kind (your mobile phone is fine) to document the results.

- With your laptop, project your simulation onto the wall. Calibrate your projection using Keystone, so that everything lines up.

- Record some video. If necessary or appropriate, provide a little narration over the video to explain what’s going on. Upload your video to YouTube.

- In a blog post:

- Embed your YouTube Video.

- Describe your project, the problems you solved, and what you learned doing it.

- Discuss your inspirations (include sketches) and aspirations: how might the project develop further?

- Label your blog post with the category Assignment-07-Projection.
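The measuring step comes down to choosing one scale factor and using it for every feature. Drawing a 70 × 115 mm outlet as a 70 × 115 pixel rectangle means 1 pixel per millimeter. If the patch of wall you want to cover is wider or narrower than your offscreen buffer, a different scale works just as well, as long as every feature shares it; Keystone's calibration then stretches the buffer to the real wall. The `WallLayout` helper below is a hypothetical plain-Java sketch of that arithmetic, not part of Keystone or PBox2D.

```java
class WallLayoutDemo {
    public static void main(String[] args) {
        // 480 mm of wall across a 480-pixel buffer: 1 pixel per millimeter,
        // so the 70 x 115 mm outlet becomes a 70 x 115 pixel rectangle.
        WallLayout oneToOne = new WallLayout(480, 480);
        double[] outlet = oneToOne.sizePx(70, 115);
        System.out.println(outlet[0] + " x " + outlet[1]);

        // Covering 960 mm of wall with the same buffer halves every size.
        WallLayout wide = new WallLayout(960, 480);
        double[] small = wide.sizePx(70, 115);
        System.out.println(small[0] + " x " + small[1]);
    }
}

// Hypothetical helper: converts wall measurements (millimeters) into
// offscreen-buffer pixels using one uniform scale for every feature.
class WallLayout {
    final double pixelsPerMm;

    // Fit a wall region regionWidthMm wide into a buffer bufferWidthPx wide.
    WallLayout(double regionWidthMm, double bufferWidthPx) {
        this.pixelsPerMm = bufferWidthPx / regionWidthMm;
    }

    // Size of a feature in pixels: {width, height}.
    double[] sizePx(double widthMm, double heightMm) {
        return new double[] { widthMm * pixelsPerMm, heightMm * pixelsPerMm };
    }

    // Pixel position of a feature's center, given its offset (mm)
    // from the top-left corner of the measured wall region.
    double[] centerPx(double offsetXmm, double offsetYmm) {
        return new double[] { offsetXmm * pixelsPerMm, offsetYmm * pixelsPerMm };
    }
}
```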

Here’s some example code to get you started.

```java
// This project renders PBox2D objects in a Keystone-warped world.

//-------------------------------------
// Adapted from the Keystone CornerPin demo
import deadpixel.keystone.*;

Keystone ks;
PGraphics offscreen;
CornerPinSurface surface;
int offscreenW = 480;
int offscreenH = 360;

//-------------------------------------
// Adapted from Dan Shiffman's basic example of falling rectangles
import pbox2d.*;
import org.jbox2d.collision.shapes.*;
import org.jbox2d.common.*;
import org.jbox2d.dynamics.*;

// A reference to our box2d world
PBox2D box2d;

// Objects in our world
ArrayList<Box> boxes;
WallOutlet myOutlet;

//============================================================
void setup() {
  size(640, 480, P3D);

  //--------------------------------
  // Initialize the Keystone offscreen buffer etc.
  ks = new Keystone(this);
  surface = ks.createCornerPinSurface(offscreenW, offscreenH, 20);
  offscreen = createGraphics(offscreenW, offscreenH, P3D);

  //--------------------------------
  // Initialize box2d physics and create a world with gravity.
  // (World y points up, so a negative y gravity pulls things down the screen.)
  box2d = new PBox2D(this);
  box2d.createWorld();
  box2d.setGravity(0, -10);

  boxes = new ArrayList<Box>();
  // A 70x115-pixel outlet centered in the offscreen buffer
  myOutlet = new WallOutlet(70, 115, offscreenW/2, offscreenH/2);
}

void draw() {
  addNewBoxes();
  deleteOldBoxes();
  updateTheSimulation();

  // Draw the scene, offscreen
  offscreen.beginDraw();
  offscreen.background(50);
  displayTheSimulation();
  offscreen.endDraw();

  // Render the perspective-warped scene
  background(0);
  surface.render(offscreen);
}

//-------------------------------------
void addNewBoxes() {
  // Boxes fall from the top every so often (on roughly 10% of frames)
  if (random(1) < 0.1) {
    Box p = new Box(offscreenW/2 + random(-20, 20), -20);
    boxes.add(p);
  }
}

//-------------------------------------
void deleteOldBoxes() {
  // Delete boxes that have left the screen.
  // (They must be removed from both the box2d world and our list.
  // Iterating backwards keeps removals from skipping the next item.)
  for (int i = boxes.size()-1; i >= 0; i--) {
    Box b = boxes.get(i);
    if (b.done()) {
      boxes.remove(i);
    }
  }
}

//-------------------------------------
void updateTheSimulation() {
  // We must always step through time!
  box2d.step();
}

//-------------------------------------
void displayTheSimulation() {
  // Display all the objects
  for (Box b : boxes) {
    b.display();
  }
  myOutlet.display();
}

//-------------------------------------
void keyPressed() {
  // Same keys as Keystone's CornerPin example:
  // 'c' toggles calibration, 's' saves the layout, 'l' loads it
  switch (key) {
  case 'c':
    ks.toggleCalibration();
    break;
  case 's':
    ks.save();
    break;
  case 'l':
    ks.load();
    break;
  }
}

//============================================================
// Class for a rectangular box
class Box {
  // We need to keep track of a Body, and a width and height
  Body body;
  float w;
  float h;

  Box(float x, float y) {
    w = random(6, 20);
    h = random(6, 20);
    // Add the box to the box2d world
    makeBody(new Vec2(x, y), w, h);
  }

  // This function removes the box from the box2d world
  void killBody() {
    box2d.destroyBody(body);
  }

  void applyForce(Vec2 v) {
    body.applyForce(v, body.getWorldCenter());
  }

  boolean done() {
    // Is the box ready for deletion?
    // Let's find the screen position of the box
    Vec2 pos = box2d.getBodyPixelCoord(body);
    // Is it off the bottom of the offscreen buffer (the part that gets projected)?
    if (pos.y > offscreenH + w*h) {
      killBody();
      return true;
    }
    return false;
  }

  // Drawing the box.
  // Notice how we do all the drawing in Keystone's offscreen buffer!
  void display() {
    // We look at each body and get its screen position
    Vec2 pos = box2d.getBodyPixelCoord(body);
    // Get its angle of rotation
    float a = body.getAngle();
    offscreen.rectMode(CENTER);
    offscreen.pushMatrix();
    offscreen.translate(pos.x, pos.y);
    offscreen.rotate(-a);  // negated because the y axis is flipped
    offscreen.fill(255);
    offscreen.noStroke();
    offscreen.rect(0, 0, w, h);
    offscreen.popMatrix();
  }

  // This function adds the rectangle to the box2d world
  void makeBody(Vec2 center, float w_, float h_) {
    // Define a polygon (this is what we use for a rectangle)
    PolygonShape sd = new PolygonShape();
    float box2dW = box2d.scalarPixelsToWorld(w_/2);
    float box2dH = box2d.scalarPixelsToWorld(h_/2);
    sd.setAsBox(box2dW, box2dH);

    // Define a fixture
    FixtureDef fd = new FixtureDef();
    fd.shape = sd;
    // Parameters that affect physics, fun to mess with
    fd.density = 1;
    fd.friction = 0.3;
    fd.restitution = 0.5;

    // Define the body and make it from the shape
    BodyDef bd = new BodyDef();
    bd.type = BodyType.DYNAMIC;
    bd.position.set(box2d.coordPixelsToWorld(center));
    body = box2d.createBody(bd);
    body.createFixture(fd);

    // Give it some initial velocity: a random sideways drift plus a
    // slight upward toss (world y points up), and a random spin
    body.setLinearVelocity(new Vec2(random(-1, 1), 1));
    body.setAngularVelocity(random(-5, 5));
  }
}

//============================================================
class WallOutlet {
  // We need to keep track of a Body, and a width and height
  Body body;
  float w;
  float h;

  WallOutlet(float w_, float h_, float x, float y) {
    w = w_;
    h = h_;

    // Define a polygon (this is what we use for a rectangle)
    PolygonShape sd = new PolygonShape();
    float box2dW = box2d.scalarPixelsToWorld(w/2);
    float box2dH = box2d.scalarPixelsToWorld(h/2);
    sd.setAsBox(box2dW, box2dH);

    // Define a fixture
    FixtureDef fd = new FixtureDef();
    fd.shape = sd;

    // Define a static body (it never moves) and make it from the shape
    BodyDef bd = new BodyDef();
    bd.type = BodyType.STATIC;
    bd.position = box2d.coordPixelsToWorld(x, y);
    body = box2d.createBody(bd);
    body.createFixture(fd);
  }

  // Draw the WallOutlet.
  // Notice how we do all the drawing in Keystone's offscreen buffer!
  // In the real projection, leave the outlet undrawn: the physical one is
  // already on the wall. It is outlined here only for testing on screen.
  void display() {
    Vec2 pos = box2d.getBodyPixelCoord(body);
    offscreen.rectMode(CENTER);
    offscreen.noFill();
    offscreen.stroke(255);
    offscreen.rect(pos.x, pos.y, w, h);

    // Two sockets, each with two prong slots
    offscreen.ellipse(pos.x, pos.y-h*0.17, w*0.47, h*0.25);
    offscreen.ellipse(pos.x, pos.y+h*0.17, w*0.47, h*0.25);
    offscreen.line(pos.x-w*0.08, pos.y-h*0.20, pos.x-w*0.08, pos.y-h*0.14);
    offscreen.line(pos.x+w*0.08, pos.y-h*0.20, pos.x+w*0.08, pos.y-h*0.14);
    offscreen.line(pos.x-w*0.08, pos.y+h*0.20, pos.x-w*0.08, pos.y+h*0.14);
    offscreen.line(pos.x+w*0.08, pos.y+h*0.20, pos.x+w*0.08, pos.y+h*0.14);

    // A reminder label, with a leader line pointing at the outlet
    offscreen.fill(180);
    offscreen.text("Don't actually render the outlet!", pos.x, pos.y + h*1.25);
    offscreen.line(pos.x - 3, pos.y + h*1.25 - 12, pos.x - w/2 + 3, pos.y + h/2 + 3);
  }
}
```