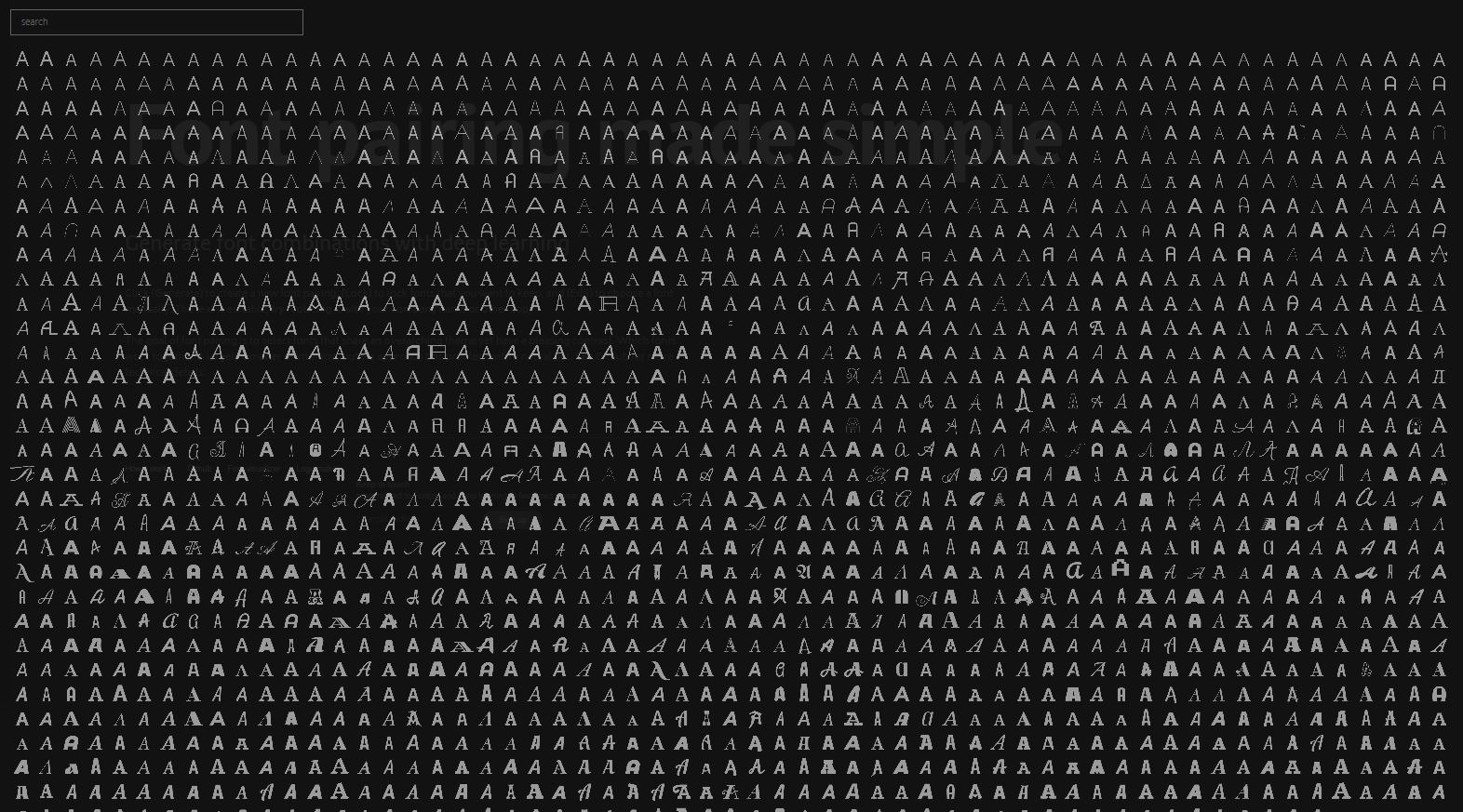

Okay, where was this when I lived and breathed graphic design? The project I’ve chosen to highlight is Fontjoy, by Jack Qiao, which generates font pairings. Its aim is to pick pairs of fonts that have distinct aesthetics but share enough qualities to work together as a pair. I don’t quite understand how it all works, but the fact that there’s a neural net out there that has fonts ranked on a scale from most to least similar warms my heart, if only because someone has made the thing I’ve wished existed every time I go to select fonts for a document.

Use the tool here.

Daniel Ambrosi, Infinite Dreams (2020).
