Béatrice Lartigue

Béatrice Lartigue is a designer and artist whose work explores interactivity and the relationship between space and time. Her interest in this area stems from her childhood love of comic books, where she first began to see each panel as a visual representation of space and time.

She is also a member of Lab 212, a collective of friends who studied Interactive Design together at the School of Visual Art les Gobelins in Paris. The interdisciplinary art collective works on pushing the boundaries of what can be defined as a visualization in our daily lives.

I am attracted to Lartigue’s elegant interactions and sophisticated visualizations, especially in her work with light and sound. Her style of dark surroundings filled with crisp blue light is also very similar to the aesthetic I have been working towards for some time.

Lartigue is also passionate about the real-time visualization of sound and music. In Portée/, she worked with her colleagues from Lab 212 to create a minimalist musical interaction: when a visitor plucks a string, the corresponding note plays on the connected piano. This work reminds me of a previous project I discovered this semester, particularly in its theme of the necessity of collaboration. As with 21 Balançoires, one could simply swing (or, in the case of Portée/, pluck) alone and create a beautiful note, but the true magic occurs when many people come together to participate. To create the art, you need others around you. Whether they are strangers or friends is irrelevant, because in that moment you are all simply notes, coming together to create a melody.

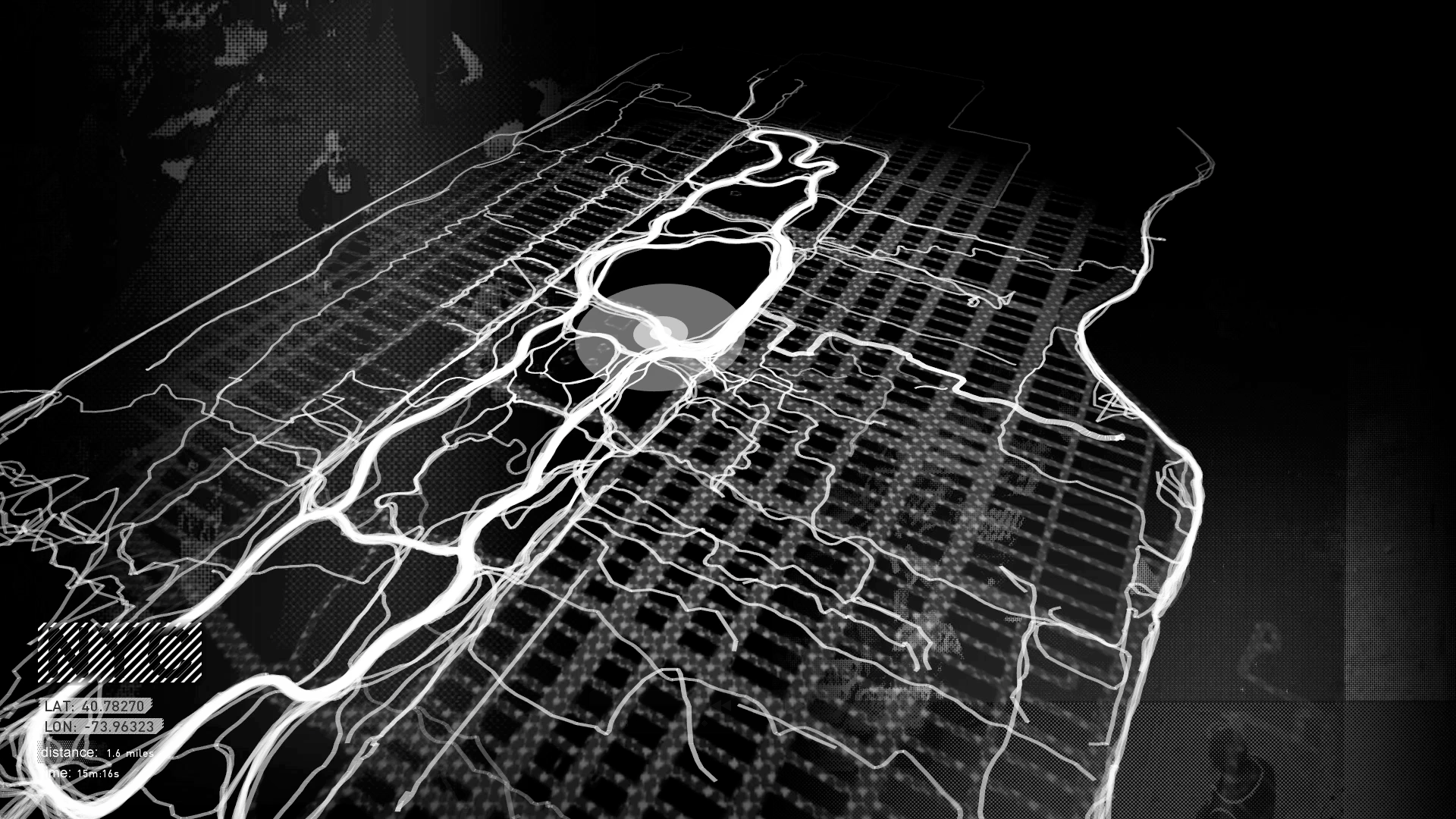

I also adore her work as VR art director on Notes on Blindness: Into Darkness. In this interpretation of John Hull’s audio-diary cassettes, the user can see only what they can hear. Nothing is visible until sound touches it, which is exactly how Hull describes the world around him. His description of rain is breathtaking: he explains that only when it rains can he truly perceive a whole environment, rather than pieces here and there. He wishes that it could rain indoors, so that he could see his home the way he can see trees, pavement, and gutters. Lartigue’s work makes the user feel deep empathy for Hull and his world, which depends entirely on sound. After watching the original film Notes on Blindness, I felt that the film fell short by comparison, and that the VR experience is much more elegant in its visualization and storytelling.

While reading All the Light We Cannot See by Anthony Doerr (read: my favourite book), I was completely invested in his description of how a little blind girl “saw” the world around her in the 1940s. I feel that Doerr and Lartigue both do an exceptional job of describing the world of someone who once had their vision but has since lost it. In Doerr’s novel, the girl’s story is told in parallel with that of a little boy with normal vision. The two children’s paths cross only briefly, but they have a significant impact on each other. I think the kind of VR experience Lartigue created would be a fascinating way to tell Doerr’s story. In particular, I would be interested to see how the boy’s world would differ from the girl’s, and what would happen when they meet.