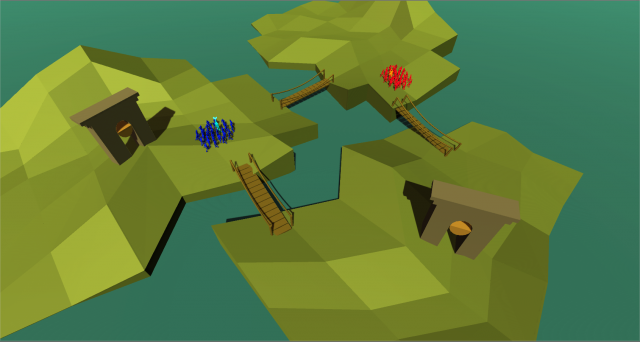

Together Again

Click here to play. Click here to see the GitHub repo.

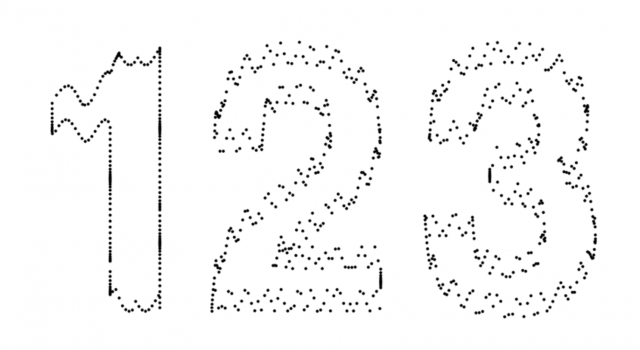

Do you ever wish you could turn back time? Fix what you’ve broken, see those you’ve lost? Together Again is a simple game with a unique mechanic: the goal is to reunite the square and the circle, to make them one and the same. Use your mouse to tap or bounce the falling square towards the circle. The faster you hit the square, the harder the hit and the faster the square moves. As the levels progress, gravity becomes stronger, and it gets harder and harder to be together again. So your wish has come true: hold SPACE to travel back in time, fix your mistakes, plan out your actions through trial and error, and hopefully succeed. Your past attempts show as faded versions in the background, and the more you fail, the more crowded and distracting the screen becomes and the harder it gets to succeed. There is no game over. There is just time.
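The rewind mechanic described above can be sketched independently of p5.js as a per-attempt history buffer. This is only a minimal sketch under stated assumptions, not the game's actual code: the `Attempt` class and its `record`, `rewind`, and `current` methods are hypothetical names, and it assumes one recorded position per frame.

```javascript
// Hypothetical sketch: each attempt records one position per frame so it can
// be replayed backwards. Names are illustrative, not taken from the game.
class Attempt {
  constructor(x, y) {
    this.history = [[x, y]]; // one [x, y] entry per recorded frame
    this.index = 0;          // frame currently being shown
    this.active = true;      // false once the attempt has become a ghost
  }

  // Append a new frame while playing forward.
  record(x, y) {
    if (!this.active) return;
    this.history.push([x, y]);
    this.index = this.history.length - 1;
  }

  // Step one frame back in time; the attempt becomes a faded ghost.
  rewind() {
    this.active = false;
    this.index = Math.max(this.index - 1, 0);
  }

  // Position at the frame currently shown.
  current() {
    return this.history[this.index];
  }
}

// Play two frames forward, then hold "rewind" for one frame.
const first = new Attempt(0, 0);
first.record(1, 2);
first.record(2, 5);
first.rewind();

// A new attempt starts from wherever the ghost was rewound to.
const [gx, gy] = first.current();
const second = new Attempt(gx, gy);
console.log(first.active, second.current());
```

Keeping every past attempt in a list and drawing the inactive ones in a faded color would give the crowded background of ghosts that the description mentions.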

This game is related to my previous project, hizlik-Book (Year One), in that it is about an unexpected and unbelievable event, a breakup, that occurred while the book was being printed. However, as with the book, the connection between my projects and my personal life is left ambiguous and often goes unnoticed. Specifically, the square represents a male figure, the circle represents a female figure, and green is her favorite color.

The game was created in p5.js, with the code provided below.

var c; // canvas

var cwidth = window.innerWidth;

var cheight = window.innerHeight;

var nervous; var biko; // fonts

var gravity = 0.3;

var mouseBuffer = -3;

var bounce = -0.6;

var p2mouse = [];

var boxSize = 50;

var gameState = "menu";

var vizState = "static";

var transitionVal = 0;

var level = 1;

var boxState = "forward";

var offscreen = false;

var offscreenCounter = 0;

var keyWasDown = false;

var gameCounter = 0;

var currBox = null;

var currCirc = null;

var ghosts = [];

function setup() {

c = createCanvas(cwidth, cheight);

background(255);

frameRate(30);

noCursor();

nervous = loadFont("Nervous.ttf");

biko = loadFont("Biko_Regular.otf");

}

window.onresize = function() {

cwidth = window.innerWidth;

cheight = window.innerHeight;

c.size(cwidth, cheight);

}

function draw() {

background(255);

// splash menu

if(gameState == "menu") {

noStroke();

fill(121,151,73)

textFont(nervous);

textSize(min(cwidth,cheight)*.1);

textAlign(CENTER,CENTER);

text("Together Again", cwidth/2, cheight/2);

fill(218,225,213);

textFont(biko);

textSize(min(cwidth,cheight)*.03);

text("hold SPACE to turn back time", cwidth/2, cheight-cheight/5);

if(keyIsDown(32)) { vizState = "transition"; }

if(keyIsDown(68)) { gravity = 1; }

if(vizState == "transition") {

transitionVal += 10;

fill(255,255,255,transitionVal);

rect(0,0,cwidth,cheight);

if(transitionVal>255) {

gameState = "game";

}

}

}

// actual game

if(gameState == "game") {

if(currBox == null) currBox = new Box(null);

if(currCirc == null) currCirc = new Circle();

if(vizState == "transition") {

currBox.draw();

currCirc.draw();

transitionVal -= 10;

fill(255,255,255,transitionVal);

rect(0,0,cwidth,cheight);

if(transitionVal < 0 && !keyIsDown(32)) {

vizState = "static";

}

}

else {

// check if space is being pressed

if(keyIsDown(32)) {

boxState = "rewind"

if(!keyWasDown) {

ghosts.push(currBox);

keyWasDown = true;

}

}

else if(keyWasDown) {

keyWasDown = false;

boxState = "forward";

var prevCount = 1;

var prev = null;

while(prev == null) {

// console.log(ghosts.length-prevCount);

prev = ghosts[ghosts.length-prevCount].getCurrPos();

prevCount++;

if(prevCount > ghosts.length) {

for(var i=0; i 255) {

console.log("new level");

level++;

gravity = constrain(gravity+.2, 0, 3);

currBox = new Box([random(cwidth-boxSize), random(cheight/2), 0, 0]);

// currBox = new Box(null);

currCirc = new Circle();

ghosts = [];

vizState = "transition";

boxState = "forward"

transitionVal = 255;

}

else if(transitionVal-150 > 150) {

currBox.draw();

currCirc.draw();

fill(255,255,255,transitionVal-150);

rect(0,0,cwidth,cheight);

}

else {

currBox.draw();

currCirc.draw();

}

}

else if(boxState == "rewind") {

gameCounter--;

if(gameCounter>0) {

for(var i=0; i= 0) { this.vy += constrain(vm, -40, 40); }

else { this.vy *= bounce; }

this.pos[1] = mouseY+mouseBuffer;

}

// ========== UPDATE HORIZONTAL ========== //

var hpos = map(mouseX, this.pos[0], this.pos[0]+boxSize, -1, 1);

this.vx += 10 * bounce * hpos;

}

// update horizontal bounce

if (collision != null && (collision[2]=="left" || collision[2]=="right")) {

var vm = (mouseX - pmouseX); // velocity of the mouse in x direction

this.vx += constrain(vm, -40, 40);

if(collision[2] == "left") {

if(this.vx > 0) { this.pos[0] = mouseX; }

else { this.vx *= bounce; }

this.pos[0] = mouseX;

}

if(collision[2] == "right") {

if(this.vx < 0) { this.pos[0] = mouseX-boxSize; }

else { this.vx *= bounce; }

this.pos[0] = mouseX-boxSize;

}

}

// update position

if(this.vx > 20*gravity || this.vx < -20*gravity) { this.vx *= 0.85; }

if(this.vy > 35*gravity || this.vy < -35*gravity) { this.vy *= 0.85; }

this.vy = constrain(this.vy + gravity, -30, 50);

this.pos[0] += this.vx;

this.pos[1] += this.vy;

//debug

if(this.pos[1]-boxSize>cheight || this.pos[0]+boxSize<0 || this.pos[0]>cwidth) {

offscreen = true;

}

this.history.push([this.pos[0], this.pos[1], this.vx, this.vy]);

this.currIndex++;

}

this.draw = function() {

noStroke();

fill(57,67,7);

if(!this.active)

fill(239,240,235);

if(this.currIndex >= 0 && this.currIndex < this.history.length) {

if(boxState == "reunited") {

this.corner = constrain(this.corner+1, 0, 18);

var r = map(this.corner, 0, 18, 57, 121);

var g = map(this.corner, 0, 18, 67, 151);

var b = map(this.corner, 0, 18, 7, 73);

fill(r,g,b);

}

rect(this.history[this.currIndex][0], this.history[this.currIndex][1], boxSize, boxSize, this.corner);

}

}

this.getMouseCollisionPoint = function() {

var top = new Line(this.pos[0],this.pos[1],this.pos[0]+boxSize,this.pos[1])

var left = new Line(this.pos[0],this.pos[1],this.pos[0],this.pos[1]+boxSize)

var bottom = new Line(this.pos[0],this.pos[1]+boxSize,this.pos[0]+boxSize,this.pos[1]+boxSize)

var right = new Line(this.pos[0]+boxSize,this.pos[1],this.pos[0]+boxSize,this.pos[1]+boxSize)

var mouse = new Line(mouseX, mouseY+mouseBuffer, pmouseX, pmouseY+mouseBuffer);

var coords = null;

if(pmouseX <= mouseX) {

var result = getMouseCollision(mouse, left);

if(result != null) {

result.push("left");

return result;

}

}

if(pmouseX >= mouseX) {

var result = getMouseCollision(mouse, right);

if(result != null) {

result.push("right");

return result;

}

}

if(pmouseY <= mouseY) {

var result = getMouseCollision(mouse, top);

if(result != null) {

result.push("top");

return result;

}

}

if(pmouseY >= mouseY){

var result = getMouseCollision(mouse, bottom);

if(result != null) {

result.push("bottom");

return result;

}

}

if(this.vx < 0 &&

mouseX >= this.pos[0] &&

pmouseX < this.pos[0]-this.vx &&

mouseY+mouseBuffer >= this.pos[1] &&

mouseY+mouseBuffer <= this.pos[1]+boxSize) {

return [mouseX, mouseY+mouseBuffer, "left"];

}

if(this.vx > 0 &&

mouseX <= this.pos[0]+boxSize &&

pmouseX > this.pos[0]+boxSize-this.vx &&

mouseY+mouseBuffer >= this.pos[1] &&

mouseY+mouseBuffer <= this.pos[1]+boxSize) {

return [mouseX, mouseY+mouseBuffer, "right"];

}

if(this.vy < 0 &&

mouseY+mouseBuffer >= this.pos[1] &&

pmouseY+mouseBuffer < this.pos[1]+this.vy &&

mouseX >= this.pos[0] &&

mouseX <= this.pos[0]+boxSize) {

return [mouseX, mouseY+mouseBuffer, "top"];

}

if(this.vy > 0 &&

mouseY+mouseBuffer <= this.pos[1]+boxSize &&

pmouseY+mouseBuffer >= this.pos[1]+boxSize-this.vy &&

mouseX >= this.pos[0] &&

mouseX <= this.pos[0]+boxSize) {

return [mouseX, mouseY+mouseBuffer, "bottom"];

}

return null;

}

this.rewind = function() {

this.currIndex--;

this.active = false;

}

this.init(startVals);

}
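The per-frame position update above (velocity damping, gravity, and a clamp on vertical speed) can be tried outside p5.js. The following is a rough sketch rather than the game's own code: `stepBody`, `nextGravity`, and the plain `{x, y, vx, vy}` object are hypothetical, and `clamp` stands in for p5's `constrain`. The constants are copied from the listing.

```javascript
// Stand-in for p5's constrain(n, low, high): clamp n into [low, high].
function clamp(n, low, high) {
  return Math.min(Math.max(n, low), high);
}

// Advance a body by one frame. `body` is a hypothetical plain object
// {x, y, vx, vy}; a new object is returned and the input is not mutated.
function stepBody(body, gravity) {
  let { x, y, vx, vy } = body;
  // Damp velocities that exceed a gravity-scaled threshold.
  if (Math.abs(vx) > 20 * gravity) vx *= 0.85;
  if (Math.abs(vy) > 35 * gravity) vy *= 0.85;
  // Apply gravity, then clamp the vertical speed.
  vy = clamp(vy + gravity, -30, 50);
  return { x: x + vx, y: y + vy, vx, vy };
}

// Gravity grows by 0.2 per level and is capped at 3.
function nextGravity(gravity) {
  return clamp(gravity + 0.2, 0, 3);
}

// Let a body fall from rest for three frames at the starting gravity.
let body = { x: 100, y: 0, vx: 0, vy: 0 };
for (let frame = 0; frame < 3; frame++) {
  body = stepBody(body, 0.3);
}
console.log(body);
```

Because the damping thresholds scale with gravity, later levels allow higher speeds before damping kicks in, which is one reason the square becomes harder to control.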

function Circle() {

this.pos = [];

this.ring = 6;

this.corner = 25; //18 mid-point

this.init = function() {

this.pos = [random(cwidth), random(cheight)];

}

this.pulse = function() {

this.ring-= .2;

if(this.ring<0.5 && boxState != "reunited") {

this.ring = 6;

}

else if(this.ring<0 && boxState == "reunited") {

this.ring = 0;

}

}

this.draw = function() {

this.pulse();

fill(176, 196, 134);

strokeWeight(this.ring);

stroke(239,240,235);

// ellipse(this.pos[0], this.pos[1], boxSize, boxSize);

if(boxState == "reunited") {

noStroke();

this.corner = constrain(this.corner-1, 18, 25);

var r = map(this.corner, 18, 25, 121, 176);

var g = map(this.corner, 18, 25, 151, 196);

var b = map(this.corner, 18, 25, 73, 134); // start from the blue of the resting fill (176, 196, 134)

fill(r,g,b);

}

rect(this.pos[0]-boxSize/2, this.pos[1]-boxSize/2, boxSize, boxSize, this.corner)

}

this.init();

}

function lostMessage() {

noStroke();

fill(218,225,213);

textFont(nervous);

textSize(min(cwidth,cheight)*.08);

text("lost your way", cwidth/2, cheight/2);

textFont(biko);

var sec = "seconds";

textSize(min(cwidth,cheight)*.05);

if(round(offscreenCounter/30)==1) sec = "second";

text("for "+round(offscreenCounter/30)+" "+sec, cwidth/2, cheight/2+cheight/10);

textSize(min(cwidth,cheight)*.03);

text("hold SPACE to turn back time", cwidth/2, cheight-cheight/5);

}

function getMouseCollision(a, b) {

var coord = null;

var de = ((b.y2-b.y1)*(a.x2-a.x1))-((b.x2-b.x1)*(a.y2-a.y1));

var ua = (((b.x2-b.x1)*(a.y1-b.y1))-((b.y2-b.y1)*(a.x1-b.x1))) / de;

var ub = (((a.x2-a.x1)*(a.y1-b.y1))-((a.y2-a.y1)*(a.x1-b.x1))) / de;

if((ua > 0) && (ua < 1) && (ub > 0) && (ub < 1)) {

var x = a.x1 + (ua * (a.x2-a.x1));

var y = a.y1 + (ua * (a.y2-a.y1));

coord = [x, y];

}

return coord;

}
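The segment test in `getMouseCollision` can be exercised without p5.js. The sketch below uses the same formula on plain `{x1, y1, x2, y2}` objects; the name `segmentIntersection` and the explicit early return for parallel segments are assumptions added for illustration, not part of the game.

```javascript
// Intersection of segments a and b, each a plain object {x1, y1, x2, y2}.
// Returns [x, y], or null when the segments do not cross.
function segmentIntersection(a, b) {
  const de = (b.y2 - b.y1) * (a.x2 - a.x1) - (b.x2 - b.x1) * (a.y2 - a.y1);
  if (de === 0) return null; // parallel or zero-length: no single crossing point
  const ua = ((b.x2 - b.x1) * (a.y1 - b.y1) - (b.y2 - b.y1) * (a.x1 - b.x1)) / de;
  const ub = ((a.x2 - a.x1) * (a.y1 - b.y1) - (a.y2 - a.y1) * (a.x1 - b.x1)) / de;
  // ua and ub are the fractional positions of the crossing along a and b.
  if (ua > 0 && ua < 1 && ub > 0 && ub < 1) {
    return [a.x1 + ua * (a.x2 - a.x1), a.y1 + ua * (a.y2 - a.y1)];
  }
  return null;
}

// Mouse moving right across the left edge of a 50px box placed at (100, 100).
const mousePath = { x1: 90, y1: 120, x2: 110, y2: 120 };
const leftEdge = { x1: 100, y1: 100, x2: 100, y2: 150 };
console.log(segmentIntersection(mousePath, leftEdge));
```

Testing the path between the previous and current mouse positions, rather than the current position alone, is what lets a fast mouse movement register a hit even if it jumps over the edge between two frames.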

function Line(x1, y1, x2, y2) {

this.x1 = x1;

this.y1 = y1;

this.x2 = x2;

this.y2 = y2;

}

function areReunited(box, circle) {

var distX = Math.abs(circle.pos[0] - box.pos[0] - boxSize / 2);

var distY = Math.abs(circle.pos[1] - box.pos[1] - boxSize / 2);

if (distX > (boxSize / 2 + boxSize/2)) {

return false;

}

if (distY > (boxSize / 2 + boxSize/2)) {

return false;

}

if (distX <= (boxSize / 2)) {

return true;

}

if (distY <= (boxSize / 2)) {

return true;

}

var dx = distX - boxSize / 2;

var dy = distY - boxSize / 2;

return (dx * dx + dy * dy <= (boxSize/2) * (boxSize/2)); // corner case: compare against the squared radius

}
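For reference, a generic circle-versus-square overlap test of the kind `areReunited` performs might look like the following. It is a standalone sketch with hypothetical names (`circleOverlapsSquare` and its parameters), and it assumes the circle has radius `r` and the square is axis-aligned with its top-left corner at `(sx, sy)`.

```javascript
// Does a circle (center cx, cy, radius r) overlap an axis-aligned square
// whose top-left corner is (sx, sy) and whose side length is `size`?
function circleOverlapsSquare(cx, cy, r, sx, sy, size) {
  const half = size / 2;
  // Distance between the two centers, per axis.
  const distX = Math.abs(cx - (sx + half));
  const distY = Math.abs(cy - (sy + half));
  if (distX > half + r || distY > half + r) return false; // too far apart
  if (distX <= half || distY <= half) return true;        // overlaps a side
  // Otherwise only a corner can touch: compare against the squared radius.
  const dx = distX - half;
  const dy = distY - half;
  return dx * dx + dy * dy <= r * r;
}

// Circle of radius 25 against a 50px square at the origin.
console.log(circleOverlapsSquare(75, 25, 25, 0, 0, 50)); // touching the right side
console.log(circleOverlapsSquare(70, 70, 25, 0, 0, 50)); // diagonal, near a corner
```

The two early returns handle the common cases cheaply; the squared-distance comparison is only needed when the circle sits diagonally off one of the square's corners.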

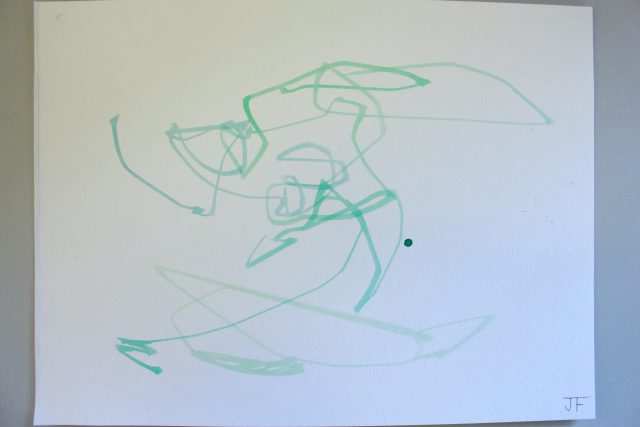

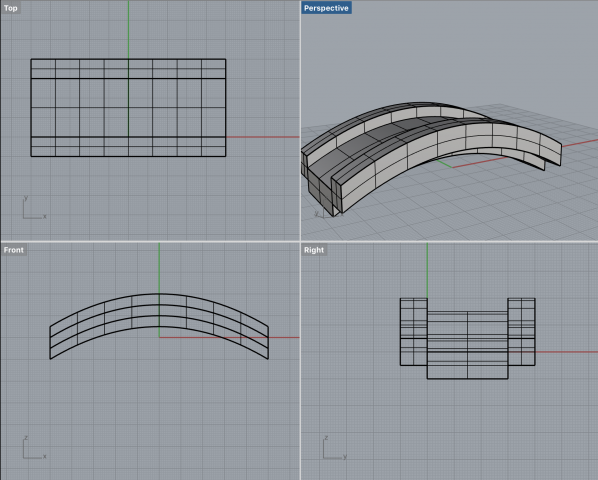

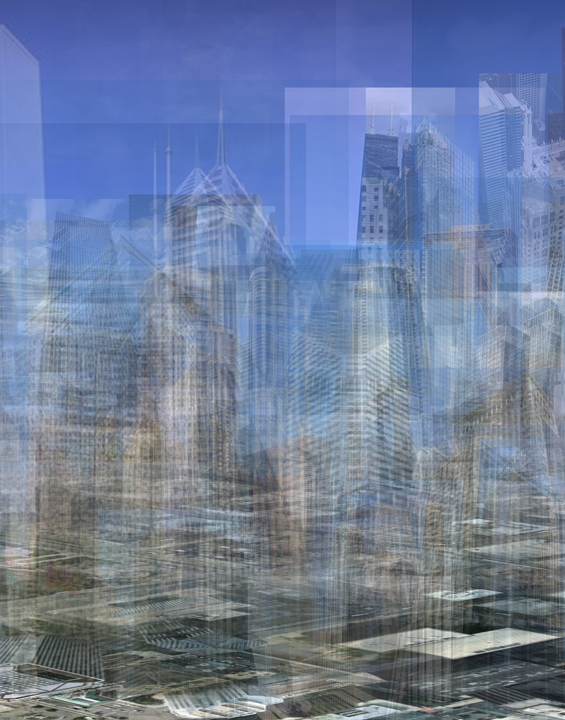

Inside Social Soul

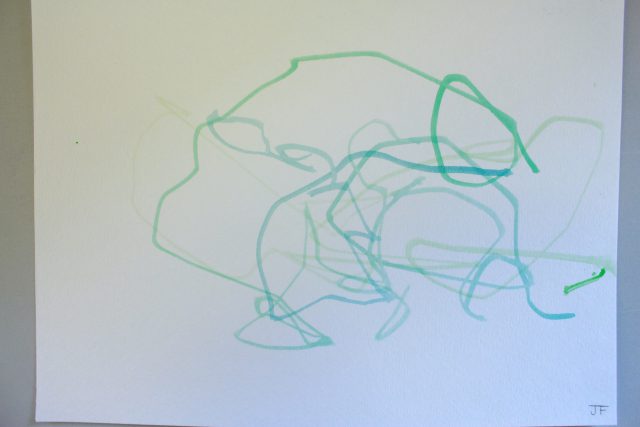

Inside Social Soul (Perspective 2)

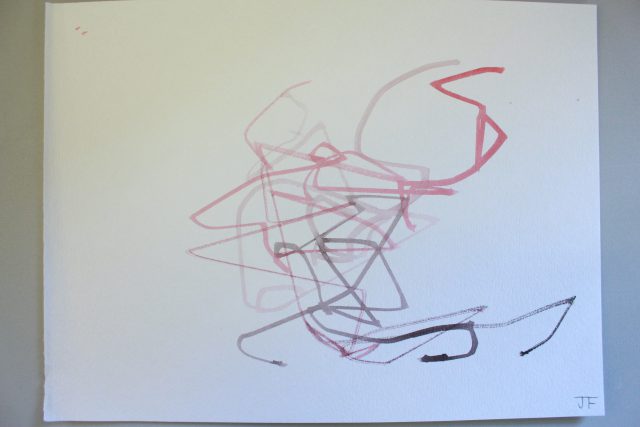

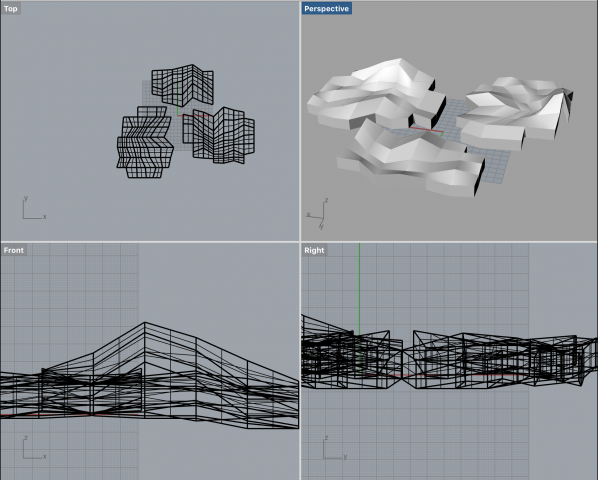

The Loop Suite

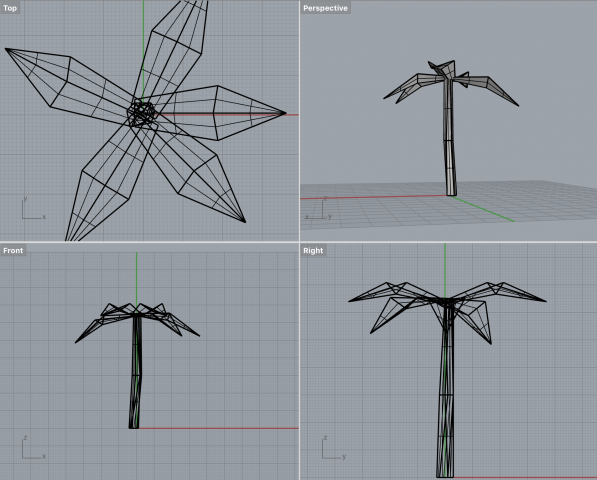

Kids with Santa

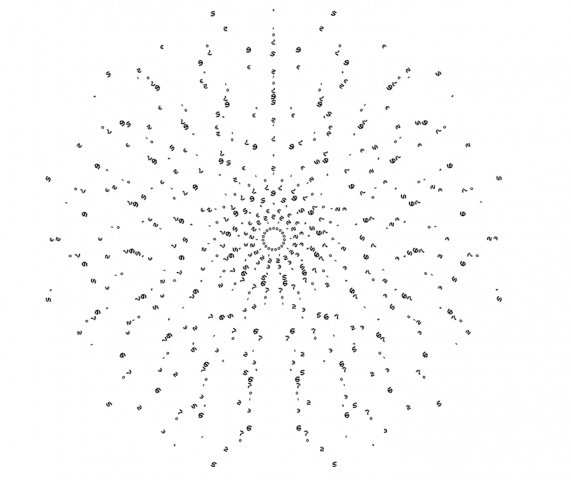

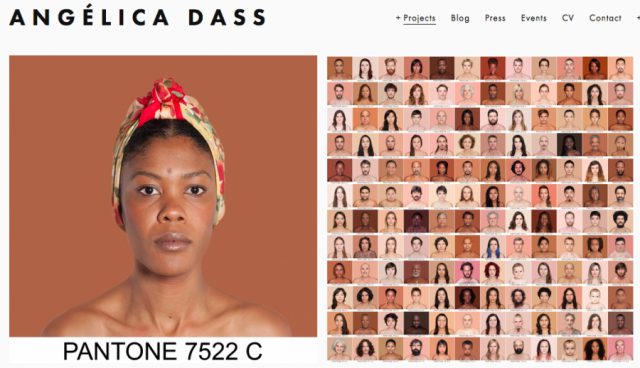

A visual study from the artist on coherence, plausibility, and shape.

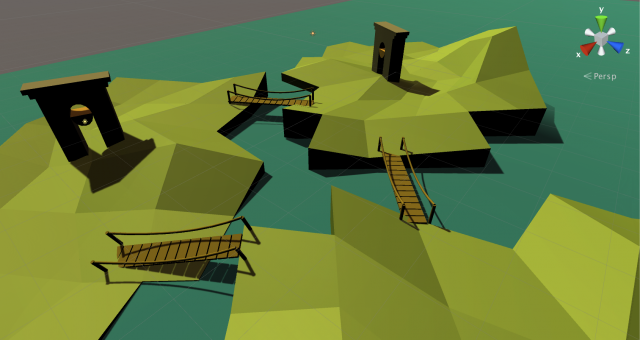

Countries represented by their wellness components.

The episode SpongeHinge. Not the monument Stonehinge. Honest mistake.
