This assignment has three parts: some readings, a Looking Outwards, and a software project. Please note that these deliverables have different due dates:

- Part A. Reading-Response #08: Two Readings about Things, due Monday 11/14

- Part B. Looking Outwards #08: On Physical Computing, due Monday 11/14

- Part C. Software for a Skeleton (Computation + Mocap), due Friday 11/11

- Ten Creative Opportunities

- Technical Options & Resources

- Summary of Deliverables

Part A. Reading-Response #08: Two Readings about Things

This is intended as a very brief reading/response assignment, whose purpose is to introduce some vocabulary and perspective on “critical making” and the “internet of things”. You are asked to read two very brief statements.

Due Monday, November 14.

Please read the following one-page excerpt from Bruce Sterling’s “Epic Struggle for the Internet of Things”:

Please (also) read the one-page “Critical Engineering Manifesto” (2011) by Julian Oliver, Gordan Savičić, and Danja Vasiliev. Now,

- Select one of the tenets of the manifesto that you find interesting.

- In a brief blog post of 100-150 words, re-explain it in your own words, and explain what you found interesting about it. If possible, provide an example, real or hypothetical, which illustrates the proposition.

- Label your blog post with the Category, ManifestoReading, and title it nickname-manifesto.

Part B. Looking Outwards #08: Physical Computing

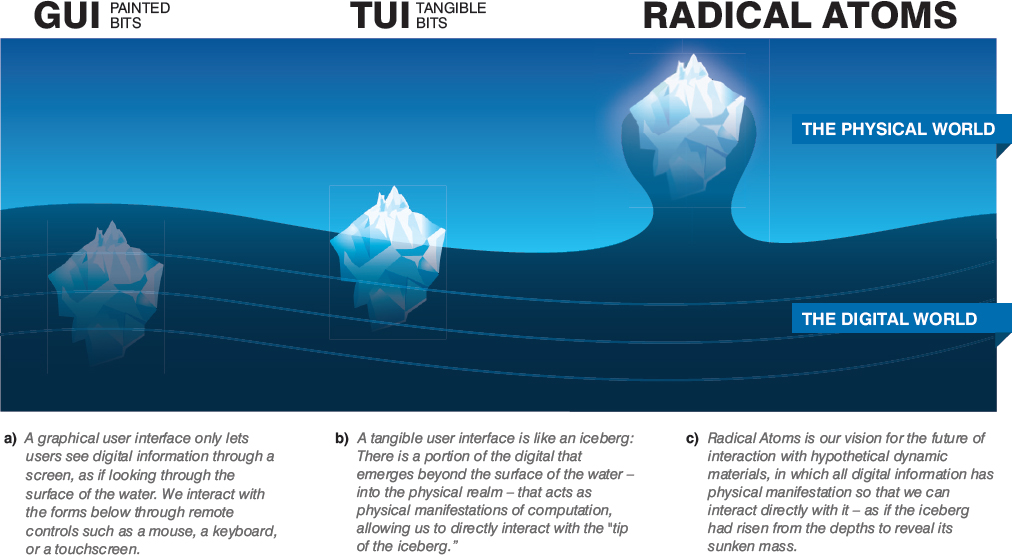

This Looking Outwards assignment is concerned with physical computing and tangible interaction design. As part of this Looking Outwards, you are strongly encouraged to attend the public lecture of Hiroshi Ishii on Thursday, November 10 at 5pm in McConomy Auditorium. (Chinese food will be served afterwards in the STUDIO.)

Due Monday, November 14.

Here are some links you are welcome to explore for your Looking Outwards assignment:

Physical computing projects:

Arduino (specific) projects:

Please categorize your Looking Outwards with the WordPress Category, LookingOutwards08, and title your blog post nickname-lookingoutwards08.

Part C. Software for a Skeleton

For this project, you are asked to write software which creatively interprets, or responds to, the actions of the body.

You will develop a computational treatment for motion-capture data. Ideally, both your treatment, and your motion-capture data, will be ‘tightly coupled’ to each other: The treatment will be designed for specific motion-capture data, and the motion-capture data will be intentionally selected or performed for your specific treatment.

Code templates for Processing, three.js and openFrameworks are here.

Due Friday, November 11.

Ten Creative Opportunities

It’s important to emphasize that you have a multitude of creative options — well beyond, or alternative to, the initial concept of a “decorated skeleton”. The following ten suggestions, which are by no means comprehensive, are intended to prompt you to appreciate the breadth of the conceptual space you may explore. In all cases, be prepared to justify your decisions.

- You may work in real-time (interactive), or off-line (animation). You may choose to develop a piece of interactive real-time software, which treats the mocap file as a proxy for data from a live user (as in Setsuyakurotaki, by Zach Lieberman + Rhizomatiks, shown above in use by live DJs). Or you may choose to develop a piece of custom animation software, which interprets the mocap file as an input to a lengthy rendering process (as in Universal Everything’s Walking City, or Method Studios’ AICP Sponsor Reel).

- You may use more than one body. Your software doesn’t have to be limited to just one body. Instead, it could visualize the relationship (or create a relationship) between two or more bodies (as in Scott Snibbe’s Boundary Functions). It could visualize or respond to a duet, trio or crowd of people.

- You may focus on just part of the body. Your software doesn’t need to respond to the entire body; it could focus on interpreting just a single part of the body (as in Theo Watson & Emily Gobeille’s prototype for Puppet Parade, which responds to a single arm).

- You may focus on how an environment is affected by the body. Your software doesn’t have to re-skin or visualize the body. Instead, you can develop an environment that is affected by the movements of the body (as in Theo & Emily’s Weather Worlds).

- You may position your ‘camera’ anywhere, including a first-person POV or a (user-driven) VR POV. Just because your performance was recorded from a sensor “in front” of you, this does not mean your mocap data must be viewed from the same point of view. Consider displaying your figure in the round, from above, below, or even from the POV of the body itself. (Check out the camera() function in Processing, or the PerspectiveCamera object in three.js, for more ideas. If you’re using three.js, you could also try a WebVR build for Google Cardboard.)

- You may work in 3D or 2D. Although your mocap data represents three-dimensional coordinates, you don’t have to make a 3D scene; for example, you could use your mocap to control an assemblage of 2D shapes. You could even use your body to control two-dimensional typography. (Helpful Processing commands like screenX() and screenY(), or Vector3.project() in three.js, allow you to easily compute the 2D screen coordinates of a perspective-projected 3D point.)

- You may control the behavior of something non-human. Just because your data was captured from a human, doesn’t mean you must control a human. Consider using your mocap data to puppeteer an animal, monster, plant, or even a non-living object (as in this research on “animating non-humanoid characters with human motion data” from Disney Research).

- You may record mocap data yourself, or you can use data from an online source. If you’re recording the data yourself, feel free to record a friend who is a performer — perhaps a musician, actor, or athlete. Alternatively, feel free to use data from an online archive or commercial vendor. You may also combine these different sources; for example, you could combine your own awkward performance, with a group of professional backup dancers.

- You can make software which is analytic or expressive. You are asked to make a piece of software which interprets the actions of the human body. While some of your peers may choose to develop a character animation or interactive software mirror, you might instead elect to create “information visualization” software that presents an analysis of the body’s joints over time. Your software could present comparisons of different people making similar movements, or could track the accelerations of movements by a violinist.

- You may use sound. Feel free to play back sound which is synchronized with your motion capture files. This might be the performer’s speech, or music to which they are dancing, etc. (Check out the Processing Sound Library to play simple sounds, or the PositionalAudio class in three.js, which has the ability to play sounds using 3D-spatialization.)
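For the analytic option mentioned in the list above (tracking the accelerations of a performer's movements), the arithmetic can be sketched roughly as follows. This is only an illustrative sketch in plain Python, not code from the provided templates: the wrist positions, the units, and the 30 fps frame time are all made-up assumptions, and the helper names `finite_difference` and `magnitude` are hypothetical. The same differences could be computed in Processing or three.js from the joint positions of consecutive frames.

```python
import math

def finite_difference(samples, dt):
    """Return forward differences of a list of (x, y, z) samples.

    Each result is (next - current) / dt, so the output has one
    fewer entry than the input.
    """
    return [
        tuple((b[i] - a[i]) / dt for i in range(3))
        for a, b in zip(samples, samples[1:])
    ]

def magnitude(v):
    """Euclidean length of a 3D vector."""
    return math.sqrt(sum(c * c for c in v))

# Hypothetical wrist positions (assumed to be in cm), one per frame.
frame_time = 1.0 / 30.0  # assumed seconds per frame
wrist_positions = [
    (0.0, 100.0, 0.0),
    (1.0, 100.0, 0.0),
    (3.0, 100.0, 0.0),
    (6.0, 100.0, 0.0),
]

# First difference of position approximates velocity (cm/s);
# first difference of velocity approximates acceleration (cm/s^2).
velocities = finite_difference(wrist_positions, frame_time)
accelerations = finite_difference(velocities, frame_time)

speeds = [magnitude(v) for v in velocities]
accel_magnitudes = [magnitude(a) for a in accelerations]

print("speeds:", speeds)
print("acceleration magnitudes:", accel_magnitudes)
```

Real sensor data is noisy, and differencing amplifies that noise, so you may want to smooth the positions (for example, by averaging a few neighboring frames) before taking differences.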

Technical Options & Resources

Code templates for loading and displaying motion capture files in the BVH format have been provided for you in Processing (Java), openFrameworks (C++), and three.js (a JavaScript library for high-quality WebGL 3D graphics in the browser). You can find these templates here: https://github.com/CreativeInquiry/BVH-Examples.

As an alternative to the above, you are permitted to use Maya (with its internal Python scripting language), or Unity3D for this project. Kindly note, however, that the professor and TA cannot support these alternative environments. If you use them, you should be prepared to work independently. For Python in Maya, please see this tutorial, this tutorial, and this video.

For this project, it is assumed that you will record or reuse a motion capture file in the BVH format. (If you are working in Maya or Unity, you may prefer to use the FBX format.) We have purchased a copy of Brekel Pro Body v2 for you to use to record motion capture files, and we have installed it on a PC in the STUDIO; it can record Kinect v2 data into these various mocap formats.
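If you are curious what is inside a BVH file, it is plain text: a HIERARCHY section that names the joints (ROOT, JOINT, OFFSET, CHANNELS, End Site), followed by a MOTION section that gives the frame count, the frame time, and one line of channel values per frame. The sketch below reads only that summary information. It is an illustrative assumption-laden example, not how the provided templates parse files: the function name `read_bvh_summary` is hypothetical, and the tiny two-joint sample file is invented for demonstration.

```python
def read_bvh_summary(text):
    """Collect joint names, frame count, and frame time from BVH text."""
    joints = []
    frame_count = None
    frame_time = None
    for raw_line in text.splitlines():
        tokens = raw_line.split()
        if not tokens:
            continue
        if tokens[0] in ("ROOT", "JOINT") and len(tokens) > 1:
            joints.append(tokens[1])
        elif tokens[0] == "Frames:" and len(tokens) > 1:
            frame_count = int(tokens[1])
        elif tokens[:2] == ["Frame", "Time:"] and len(tokens) > 2:
            frame_time = float(tokens[2])
    return joints, frame_count, frame_time

# An invented, minimal BVH file: 6 root channels + 3 spine channels
# means each motion line carries 9 numbers.
SAMPLE_BVH = """\
HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 10.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 10.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.0333333
0.0 90.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0
0.0 90.5 0.0 0.0 0.0 0.0 0.0 5.0 0.0
"""

joints, frame_count, frame_time = read_bvh_summary(SAMPLE_BVH)
print(joints, frame_count, frame_time)
```

A quick check like this can be handy for confirming that a recording has the joints and frame rate you expect before you load it into one of the templates.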

Our Three.js demo (included in BVH example code):

Our Processing demo (included in BVH example code):

Summary of Deliverables

Here’s what’s expected for this assignment.

- Review some of the treatments of motion-capture data which people have developed, whether for realtime interactions or for offline animations, in our lecture notes from Friday 11/4.

- Sketch first! Draw some ideas.

- Make or find a motion capture recording. Be sure to record a couple of takes. Keep in mind that you may wish to re-record your performance later, once your software is finished.

- Develop a program that creatively interprets, or responds to, the changing performance of a body as recorded in your motion-capture data. (If you feel like trying three.js, check out their demos and examples.)

- Create a blog post on this site to hold the media below.

- Title your blog post, nickname-mocap, and give your blog post the WordPress Category, Mocap.

- Write a narrative of 150-200 words describing your development process, and evaluating your results. Include some information about your inspirations, if any.

- Embed a screengrabbed video of your software running (if it is designed to run in realtime). If your software runs “offline” (non-realtime), as in an animation, render out a video and embed that.

- Upload an animated GIF of your software. It can be brief (3-5 seconds).

- Upload a still image of your software.

- Upload some photos or scans of your notebook sketches.

- Embed your code (using the WP-Syntax WordPress plugin to format your JavaScript and/or other code), and include a link to your code on Github.

- Test your blog post to make sure that all of the above embedded media appear correctly. If you’re having a problem, ask for help.

Good luck!
