Jaqaur – Last Project

Motion Tracer

For my last project (no more projects–it’s so sad to think about), I decided to combine aspects from two previous ones: the motion capture project and the plotter project. For my plotter project, I had used a paintbrush with the Axidraw instead of a pen, and I really liked the result, but the biggest criticism I got was that the content itself (binary trees) was not very compelling. So, for this project, I chose to paint more interesting material: motion over time.

I came up with the idea to trace the paths of various body parts pretty early, but it wasn’t until I recorded BVH data and wrote some sample code that I could determine how many and which body parts to trace. Originally, I had thought that tracing the hands, feet, elbows, knees, and mid-back would make for a good, somewhat “legible” image, but as Golan and literally everyone else I talked to told me: less is more. So, I ultimately decided to trace only the hands and the feet. This makes the images a bit harder to decipher (as far as figuring out what the movement was), but they look better, and I guess that’s the point.

One more change I made from my old project was the addition of multiple colors. Golan advised me against this, but I elected to completely ignore him, and I really like how the multi-colored images turned out. I mixed different watercolors (my first time using watercolors since middle school art class) in a tray, and put the coordinates of each color in the tray into my code. I added instructions between each line of color for the Axidraw to go dip the brush in water, wipe it off on a paper towel, and dip it in a new color. I think that the different colored lines make the images a little easier to understand, and give them a bit more depth.
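
The color-change routine can be sketched roughly like this. This is only an illustration, not my actual code: the command tuples (`"move"`, `"pen_down"`, `"pen_up"`), the function names, and the station coordinates are all made up for the example, and it assumes the very first line only needs a dip in paint, with no rinse.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Command = tuple  # e.g. ("move", (x, y)), ("pen_down",), ("pen_up",)


def dip(point: Point) -> List[Command]:
    """Move to a station, lower the brush into it, and lift it back out."""
    return [("move", point), ("pen_down",), ("pen_up",)]


def plan_painting(strokes: List[Tuple[str, List[Point]]],
                  stations: Dict[str, Point]) -> List[Command]:
    """Build a command list that rinses, wipes, and re-dips between colored lines.

    strokes:  (color name, path) pairs, one per traced body part.
    stations: hypothetical positions of "water", "towel", and each color.
    """
    commands: List[Command] = []
    for i, (color, path) in enumerate(strokes):
        if i > 0:
            # Between lines: rinse in water, then wipe on the paper towel.
            commands += dip(stations["water"])
            commands += dip(stations["towel"])
        # Load the brush with this line's color.
        commands += dip(stations[color])
        # Paint the line: travel to the start, lower the brush, follow the path.
        commands.append(("move", path[0]))
        commands.append(("pen_down",))
        commands += [("move", p) for p in path[1:]]
        commands.append(("pen_up",))
    return commands
```

Keeping the stations in a dictionary means a new color only needs one more entry with its position in the tray.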

I tried to record a wide variety of motion capture data for this project (thanks to several volunteers far more talented than me) including ballet, other dance, gymnastics, parkour, martial arts, and me tripping over things. Unfortunately, I had some technical difficulties the first night of MoCap recording, so most of that data ended up unusable (extremely low frame rate). The next night, I got much better data, but I discovered later that Breckle really is not good with upside down (or even somewhat contorted) people. This made a lot of my parkour/martial arts data come out a bit weird, and I had to select only the best ones to print. If I were to do this project again, I would like to record the motion capture data in Hunt Library perhaps, or just with a slightly better system than the one I used for this project. I think I would get somewhat nicer pictures that way.

One more aspect of my code that I want to point out is a little routine that maps the data to an appropriate size for the paper. It runs at the beginning, and finds the maximum and minimum x and y values reached by any body part. Then, it scales that data to be as large as possible (without messing up its original proportions) while still fitting inside the paper’s margins. This means that a really tall motion will be scaled down to be the right height, and then have its width shrunk accordingly, and a really wide motion will be scaled by its width, and then have its height shrunk accordingly. I think that this was an important feature.
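
Here is a minimal sketch of that kind of fit-to-paper scaling. It is not my actual code: the function name `fit_to_page`, its parameters, and the dictionary-of-paths layout are made up for the example, and centering the drawing inside the margins is an extra assumption on top of what I described above.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def fit_to_page(paths: Dict[str, List[Point]],
                page_w: float, page_h: float,
                margin: float) -> Dict[str, List[Point]]:
    """Scale every path by one shared factor so the whole motion fits the page.

    paths maps a body part name (e.g. "left_hand") to its traced (x, y) points.
    """
    xs = [x for path in paths.values() for x, _ in path]
    ys = [y for path in paths.values() for _, y in path]
    min_x, max_x = min(xs), max(xs)
    min_y, max_y = min(ys), max(ys)
    span_x, span_y = max_x - min_x, max_y - min_y

    avail_w = page_w - 2 * margin
    avail_h = page_h - 2 * margin

    # One scale factor for both axes keeps the original proportions.
    # The tighter axis (tall motion -> height, wide motion -> width) wins.
    candidates = []
    if span_x > 0:
        candidates.append(avail_w / span_x)
    if span_y > 0:
        candidates.append(avail_h / span_y)
    scale = min(candidates) if candidates else 1.0

    # Assumption: center the scaled drawing in the space inside the margins.
    off_x = margin + (avail_w - span_x * scale) / 2
    off_y = margin + (avail_h - span_y * scale) / 2

    return {
        name: [((x - min_x) * scale + off_x, (y - min_y) * scale + off_y)
               for x, y in path]
        for name, path in paths.items()
    }
```

For example, a motion that spans 10 units across and 40 units up, on a 100 by 100 page with a margin of 10, gets a scale factor of 2: the height fills the available 80 units, and the width shrinks to 20.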

Here are some of the images generated by my code:

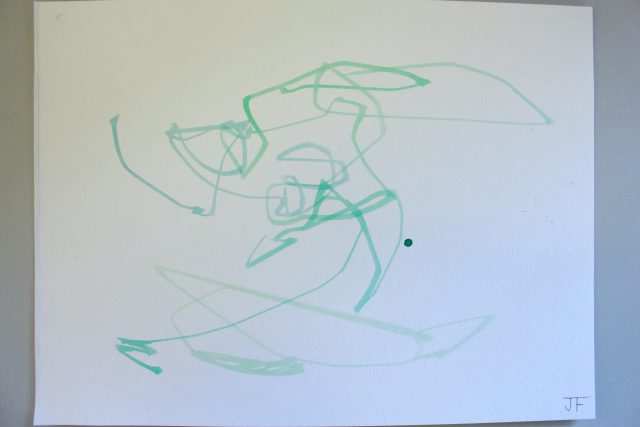

Above are three pictures of the same motion capture data: a pirouette. It was the first motion I painted, and it took me a few tries to get the paper’s size coordinates right, and to mix the paint dark enough.

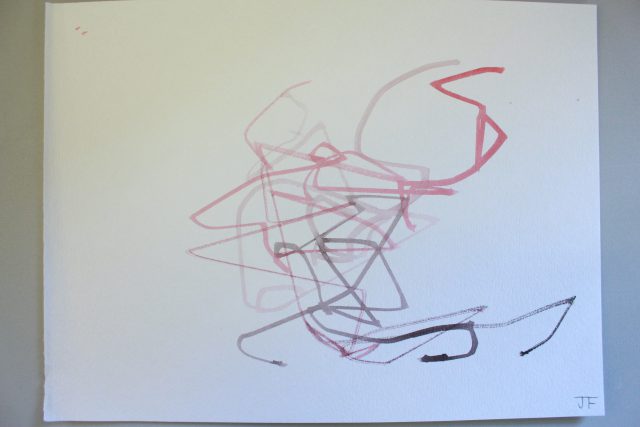

That’s an image generated by a series of martial arts movements, mostly punches. Note the dark spot where some paint dripped on the paper; I think little “mistakes” like that give these works character, as if they weren’t painted by a robot.

This one was generated by a somersault. I think when he went upside down, the data got a bit... messed up, but I like the end result nonetheless.

Here is a REALLY messed up image that was supposed to be a front walkover. You can see her hands and feet on the right side, but I think when she went upside down, Breckle didn’t know what to do, and put her body parts all over the place. I don’t really consider this one part of my final series, and since I knew the data was messy, I wasn’t going to paint it, but I had paint/paper left over so I figured, why not? It’s interesting anyway.

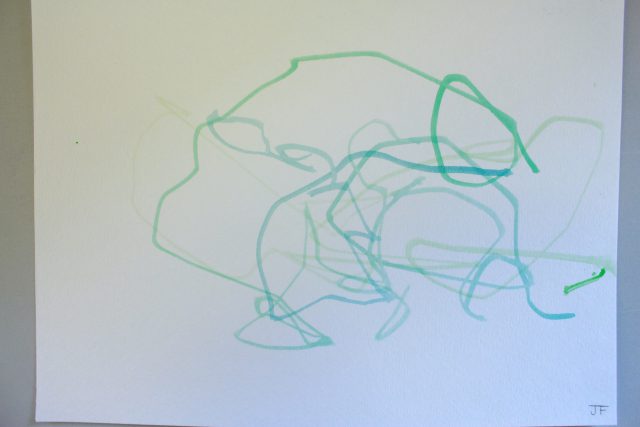

I really like these. The bottom two are paintings of the same data, just with different paint, but all four are paintings of the same dance move: a “Pas de Chat.” I got three separate BVH recordings of the dancer doing the same move, and painted all of them. I think it’s really interesting to note the similarities between them, especially the top two.

All in all, I am super happy with how this project turned out. I would have liked to get a little more variety in (usable) motion capture data, because I love trying to trace where every limb goes during a movement (you can see some of this in my documentation video above). I also think that a more advanced motion capture system would have been helpful, but what can you do?

Thanks for a great semester, Golan.

Here is a link to my code on GitHub: https://github.com/JacquiwithaQ/Interactivity-and-Computation/tree/master/Motion_Tracer