“Think about an interactive artwork…which you find inspirational”

Wow. Choice paradox much? How does one choose? And what does it mean for something to be inspirational to me? There are many different kinds of inspiration, and I feel the need to break that down.

Some interactive work I find inspirational because it has an aspect I want to grow towards, something to aspire to (whether a high level of craft, narrative, or execution). That doesn’t mean I find the project as a whole to be inspired.

There are pieces I admire for being outstanding and brilliant as “last word” art, but I don’t know if they have as much power to move the collective intellect or emotional landscape, which I might argue matters more for “inspired” work. (Why might I say that? Well, I’m personally very bored by modern photorealistic paintings. My first impression of them is that they simply showcase the artists’ “outstanding” technical skill at observation and representational replication. Most of the audience’s initial feeling of ‘awe’ feels cheaply won because it isn’t unique to the piece… it’s simply a byproduct of admiration for that general skill set… and it’s skill in a technique that isn’t novel or niche anymore.)

And then I wonder, how does my choice here reflect on what I prioritize?

Lol. I hope no one reads too deeply into it. So here is me showing that I’ve reflected on the assignment… and then discarding my musings, because it’s just the first Looking Outwards assignment and I should take a chill pill and save my words for the actual juice. So here goes:

I love their craft. This is the level of work I aspire to.

INORI (Prayer) from Nobumichi Asai on Vimeo.

I find it inspirational because, well, yes, a lot of the initial value might be the wow factor of the super-fast tracking and projection (latency in the milliseconds!). But it takes advantage of the technology to create a dance with great rhythm (visual and otherwise) and surrealism. Mixing the dancer’s physical features with the virtual projections is a design choice that does well to augment the ‘surrealism’ that comes with an emerging, or fairly novel, technology.

This is how it was made:

INORI (Prayer) / Making from Nobumichi Asai on Vimeo.

Does it move me emotionally? Sure, as much as any well-executed dance or film piece might. But intellectually? …Perhaps, but it would be subtle, and I’d have to really take the time to reflect on why this piece appealed to me to figure that out. It certainly isn’t nearly as obvious an answer as, say…

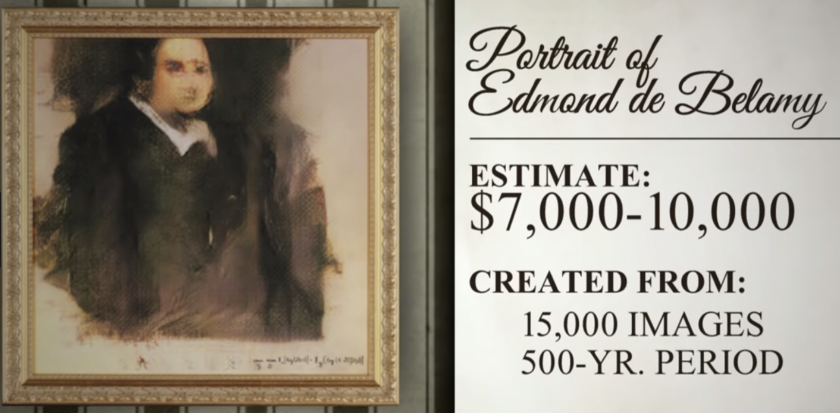

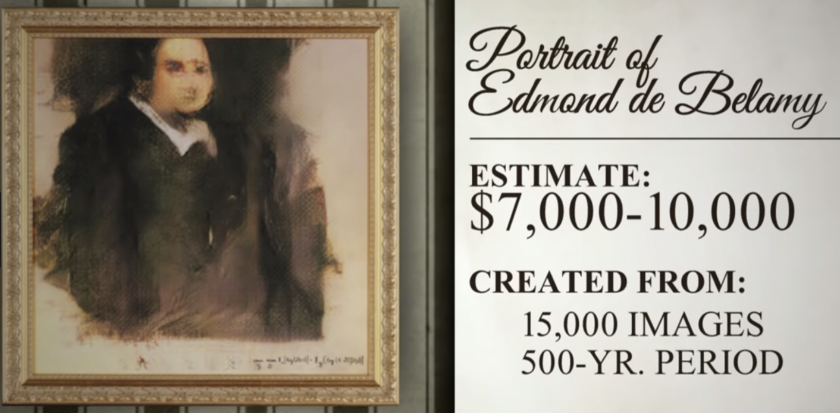

…this “AI artwork” that sold for nearly half a million dollars at a Christie’s auction:

A collaboration between two artists, one human, one a machine


Instead of the painter’s signature, the corner bears an innocuous math formula.

This piece raises a lot of questions that I don’t immediately have answers to. The French artist collective “Obvious” reaped the monetary acknowledgement of ‘authorship’, but they didn’t write the code that made the AI. Much of it is built on Google’s research and on an American developer’s code from GitHub (which they also didn’t credit).

Not to mention, they most definitely aren’t the first of their kind, as they seem to claim.

Okay, so if not authorship, what should we call the time and effort they put into this? I wouldn’t give the artistic or creative credit to the AI, because there was no initiative behind it without a person driving its efforts. Someone put thought into the dataset it was trained on (old master paintings). The exact results weren’t things the artists might have thought of explicitly, but they aren’t exactly unexpected either, are they? That raises an interesting point… were the artists simply curators? By that logic, they didn’t author this. They censored a generator and were rewarded for having some degree of taste. Having trained the AI, are they simply the biological bootloaders for the actual creator, the AI? I wouldn’t necessarily say that either. Regardless of authorship or creative drive, I feel comfortable saying that they are responsible for this instance of an image modeled like an old master’s painting and ‘signed’ with a formula in Christie’s gallery.

Perhaps it’s the context that makes it art? Someone took this instance, with its unique process, out of the niche corners of Twitter, where plenty of creators in this medium (e.g. Mario Klingemann) have arguably better technique and more novel work. They then framed it as “last word” art in one of the art world’s most prestigious auctions.

From that perspective… there are, at least on the surface, strong parallels with the readymades of the Dada artists.

Hmm… welp. Things to think about. Either way, I’m glad this piece did what it did, even if the artists felt more like hype marketers. It’s interesting to think about, and sometimes it really is just about asking the interesting questions and getting others to ask them too.

…it’s certainly done more to move people than any of my pieces so far.

….

Yeah, so please enjoy this, and come talk to me about your thoughts on this topic! Maybe this whole thing is just semantics, but semantics are important to finesse too!