A compilation of some of our experiments from throughout the week. This includes playing with the sequencer, placing two oscillators one after another, and our generative melodies.

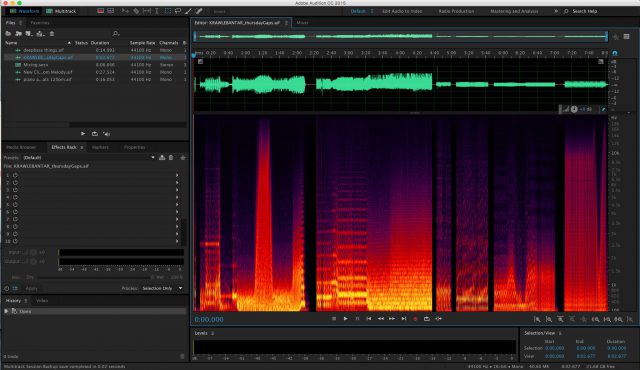

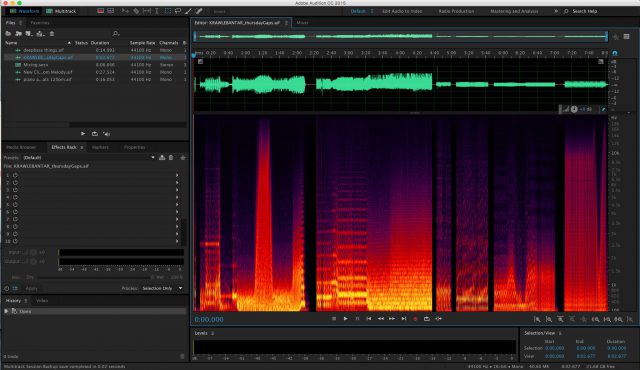

This is the view in Audition while we were mixing our SoundCloud track together.

This is the visualizer that we used to help our debugging process. The top bar shows the melody, and the bottom bar shows the bass line.

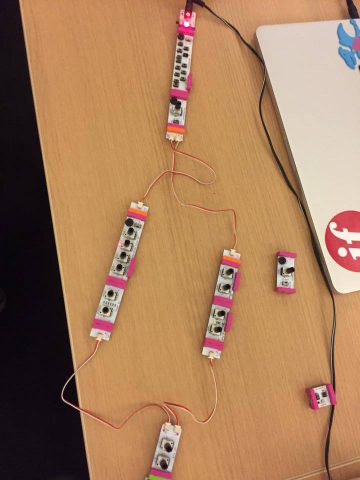

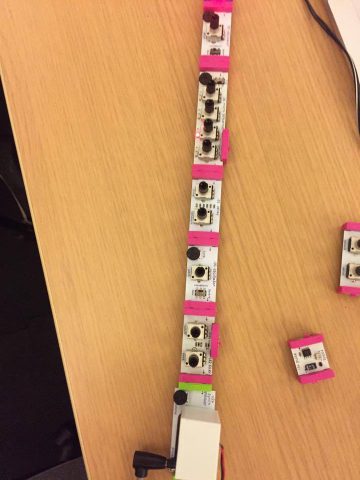

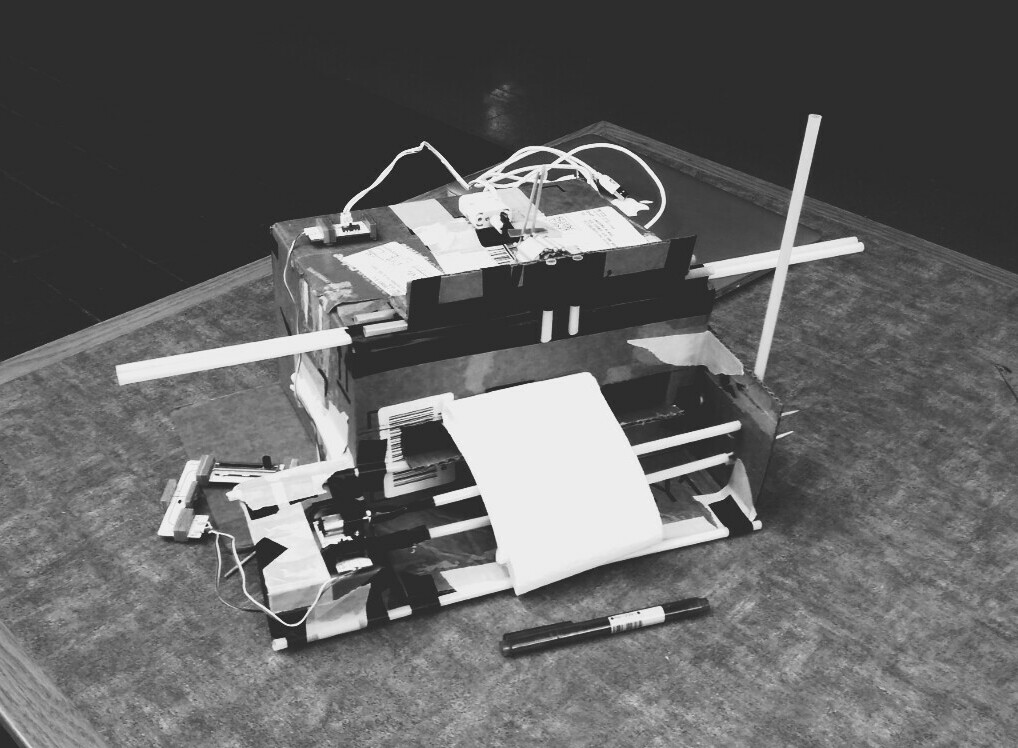

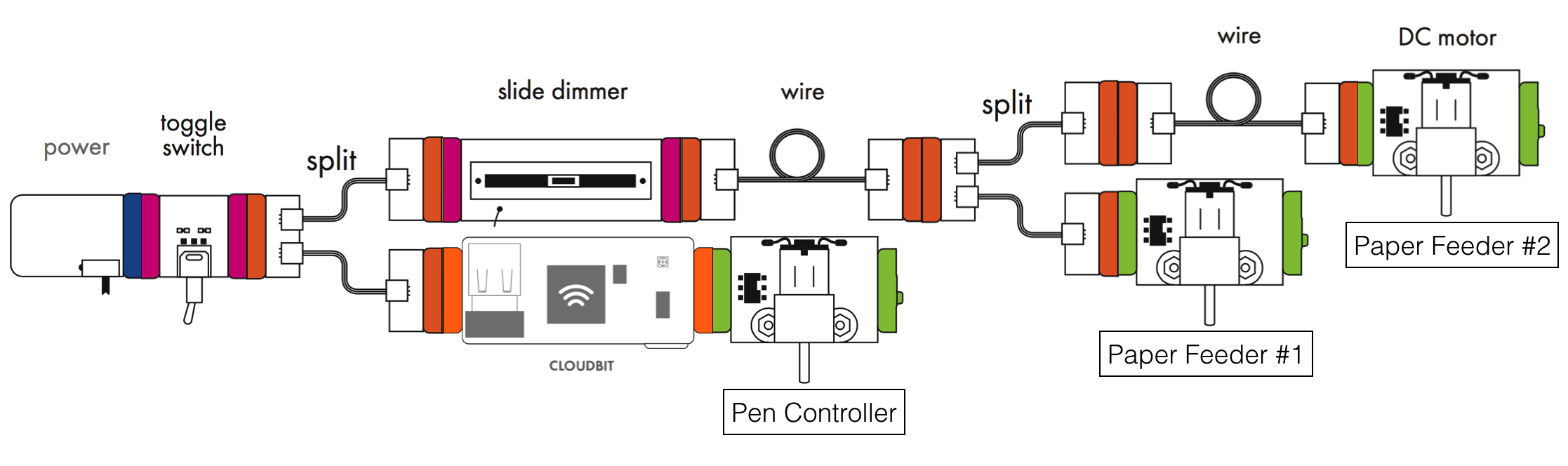

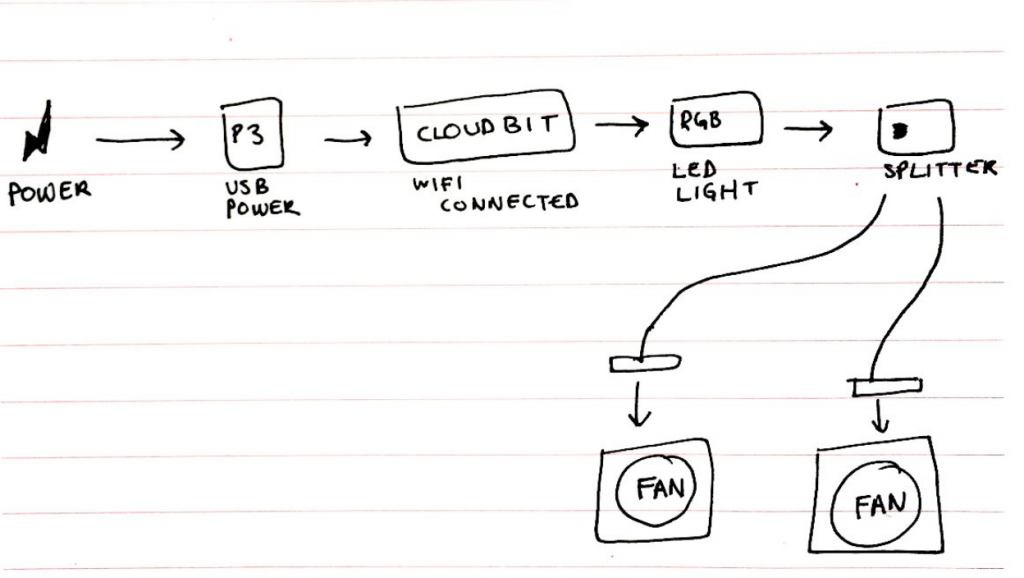

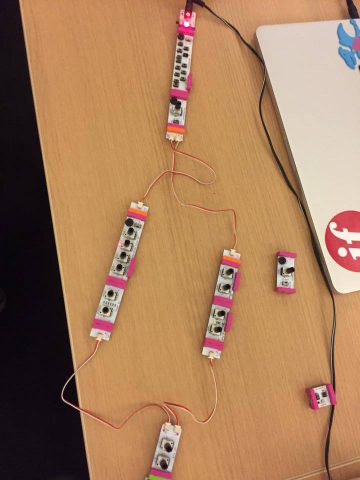

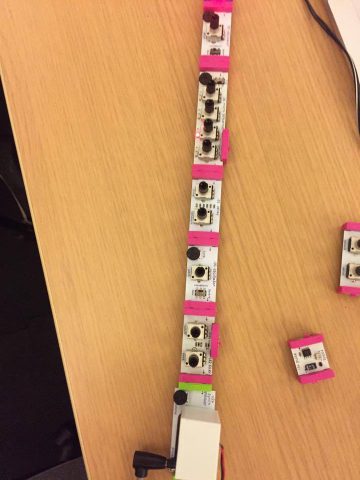

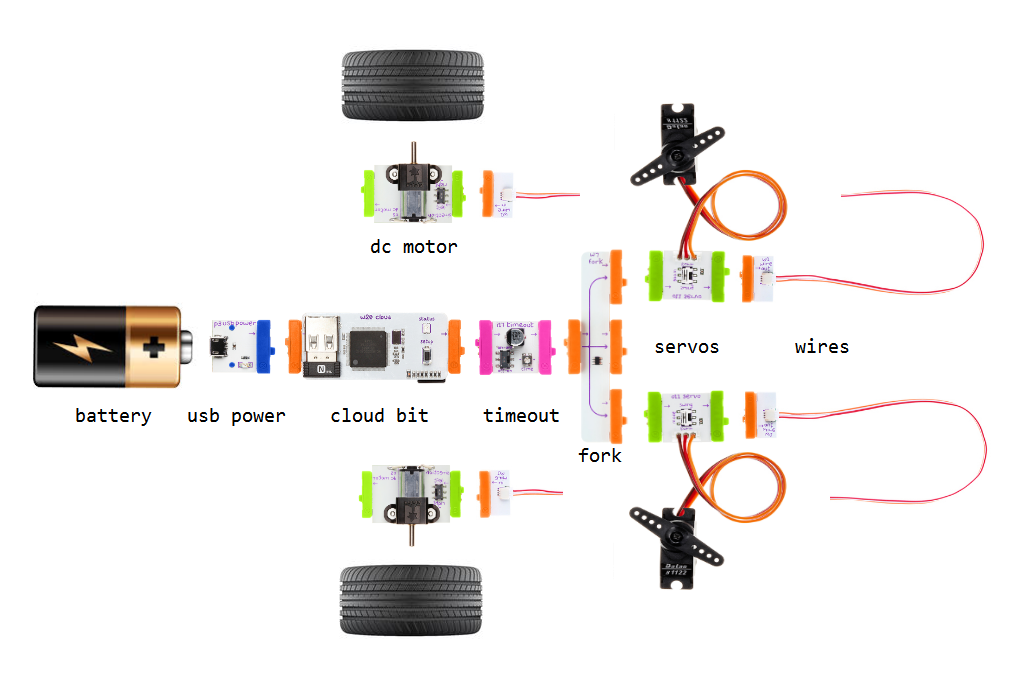

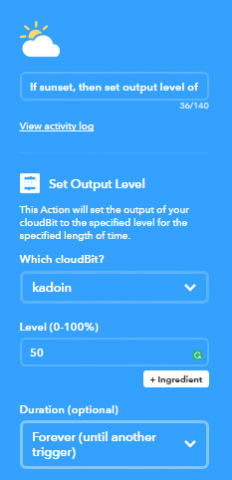

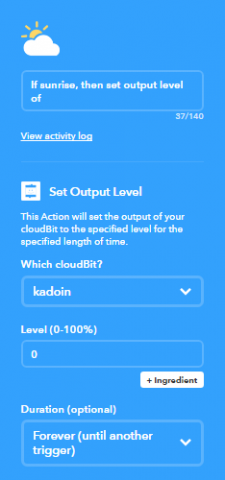

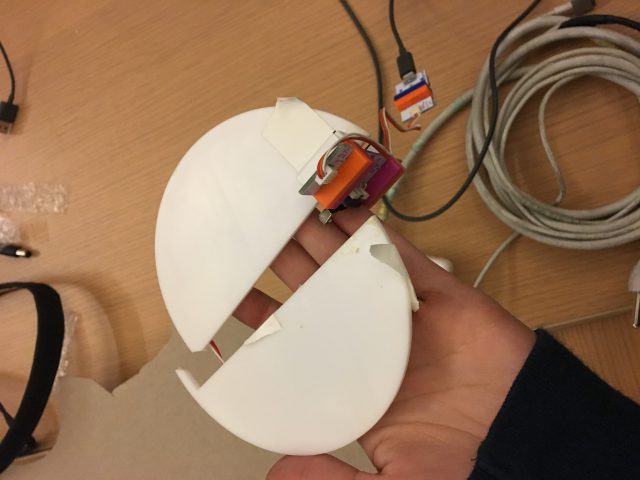

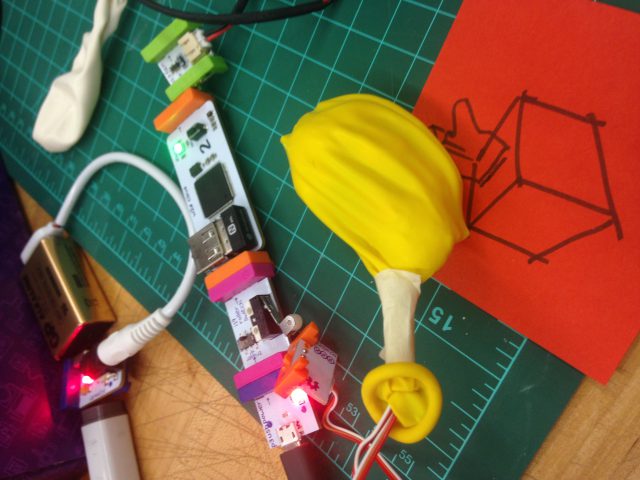

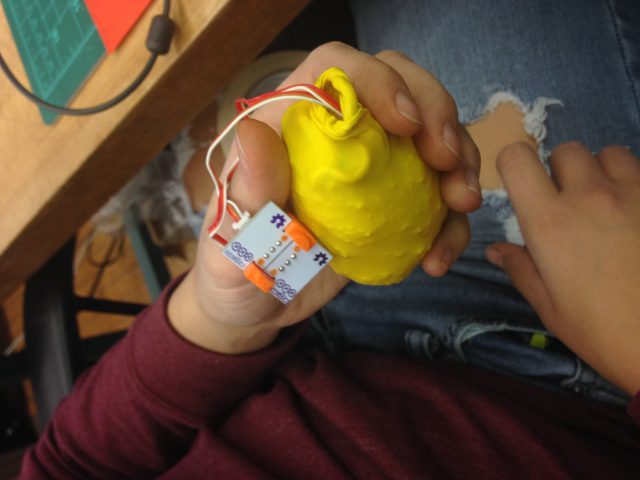

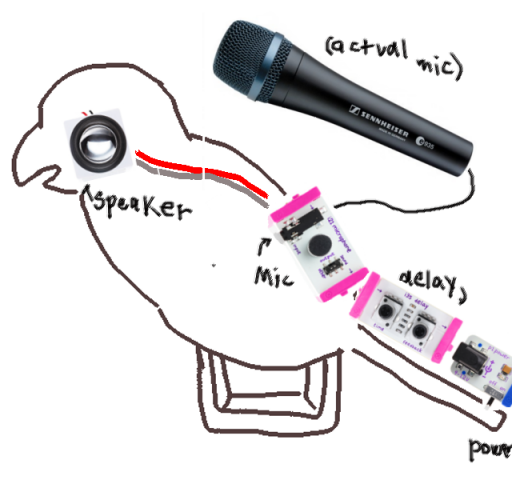

Here are some of the Little Bits arrangements we used.

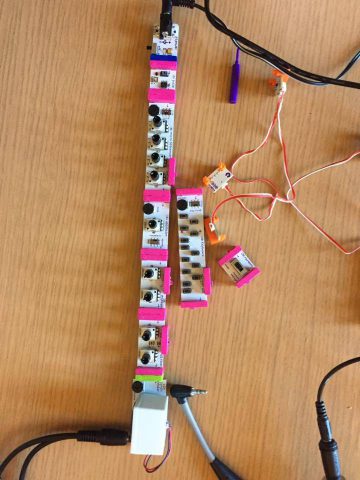

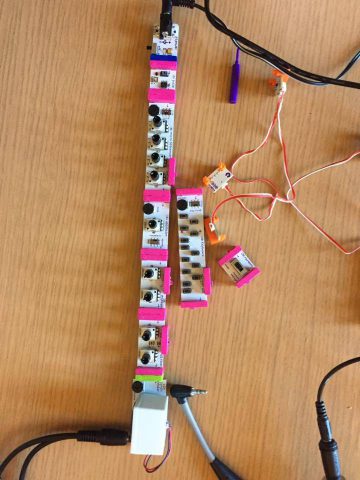

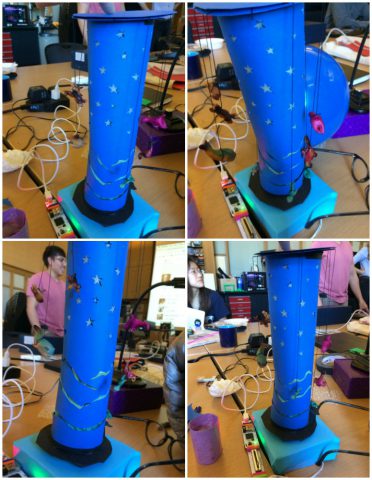

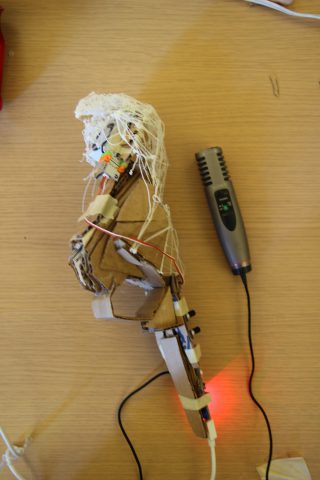

This is our computer, which is connected to the mp3 piece. The music is then shaped by a filter and a delay and played out of the speaker, which is connected to a splitter. One branch of the splitter goes to the headphones, and the other to the microphone, which is also attached to the computer. The tracks were first recorded with Audacity, then mixed with Audition.

Little Bits pieces

- power

- oscillator

- mp3 out

- midi in/out

- synth speaker

- filter

- delay

- envelope

- keyboard

- pulse

- noise

- sequencer

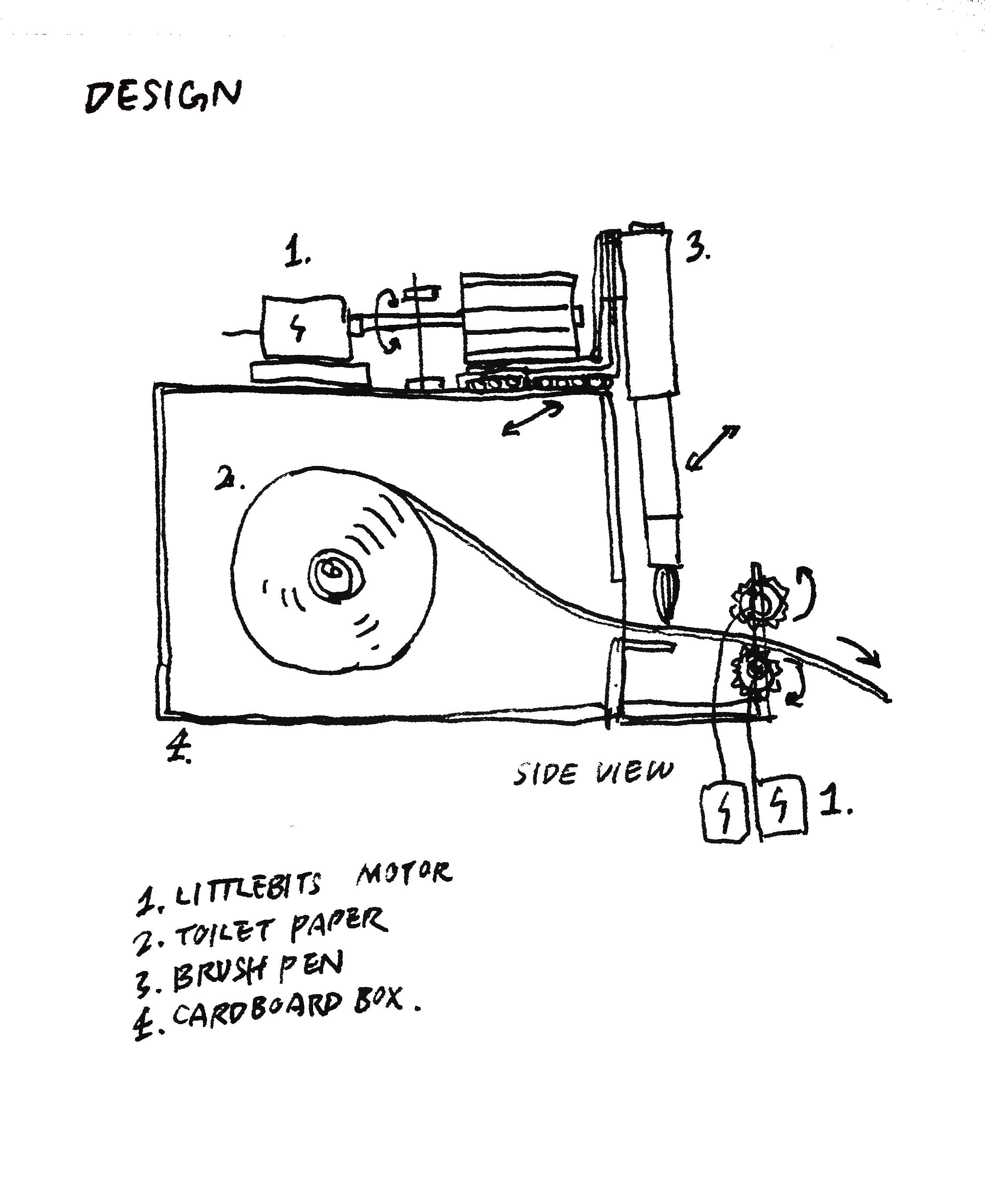

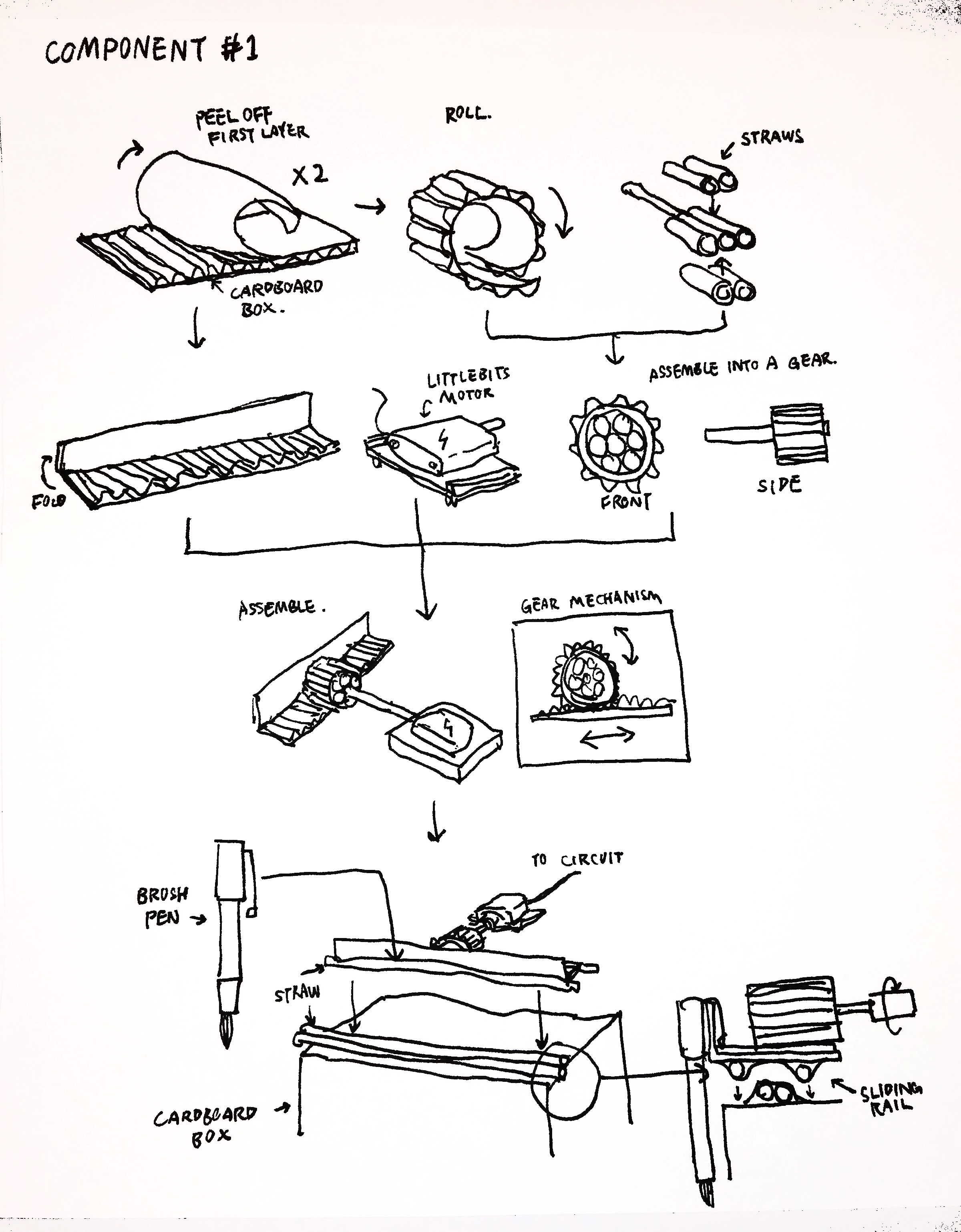

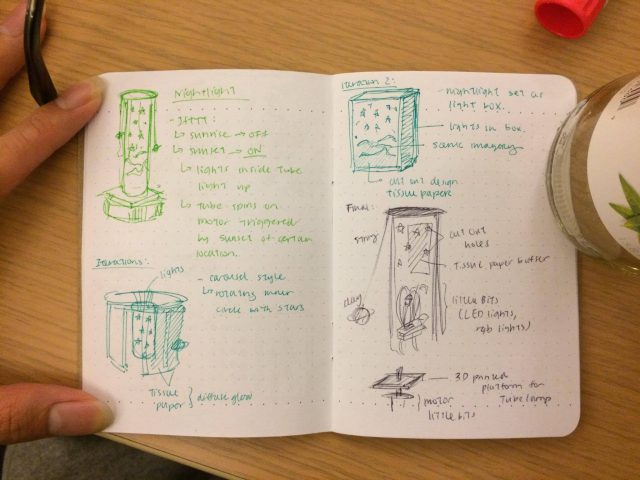

Initial Concept

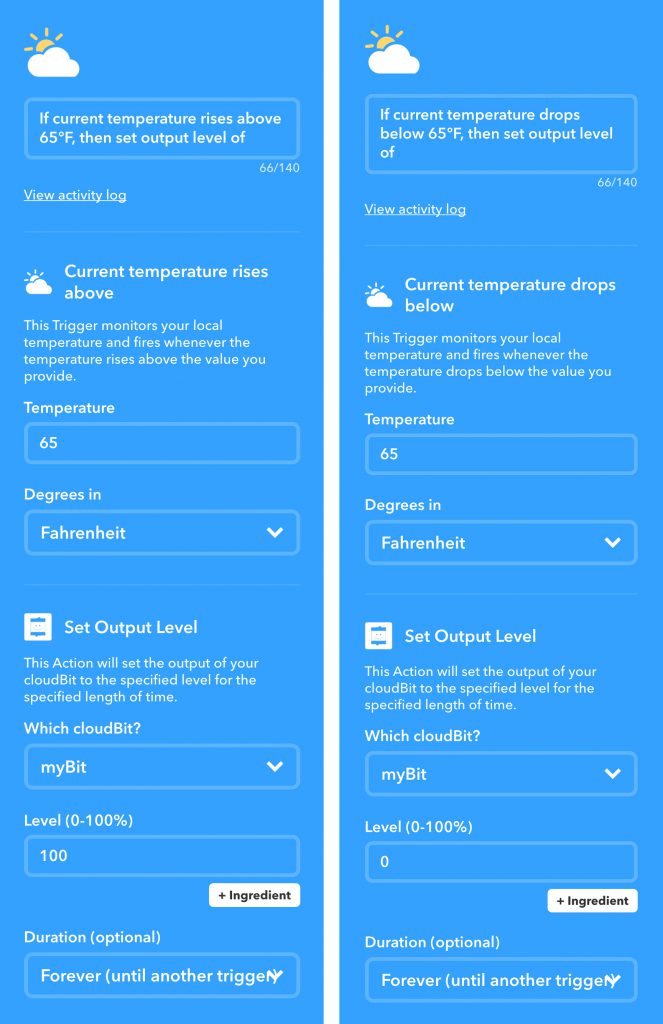

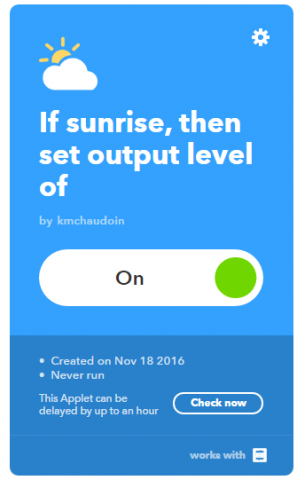

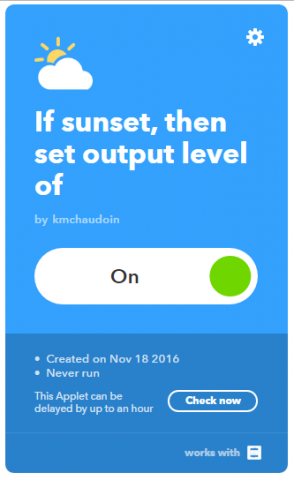

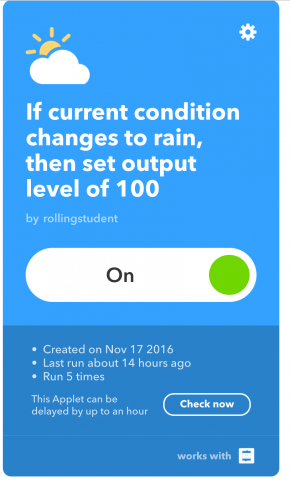

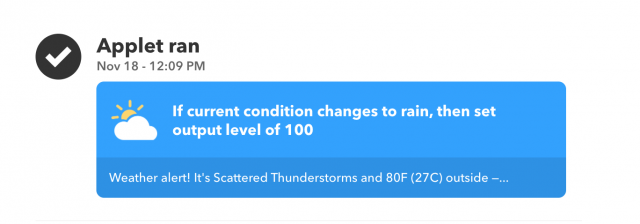

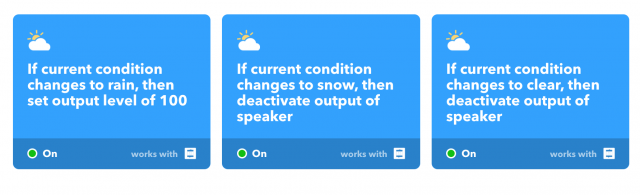

We both immediately got excited about the Korg synth Little Bits pieces and knew that we wanted to see how far we could push the affordances of the hardware. At the beginning we thought only of creating music collaboratively: one of us on the keyboard piece, and the other manipulating the sound by playing with cutoffs, feedback loops, and attacks/delays. Our first instinct was to combine a Makey Makey with IFTTT. The general idea was to have a jam session and, when we fell into a rhythm that we liked, hit the Makey Makey to begin recording, then hit it again to stop recording and upload the track to SoundCloud. However, after some investigation, we realized the limitations of IFTTT and decided we would rather spend our time learning new ways to create music than dwell on uploading issues.

2nd Iteration

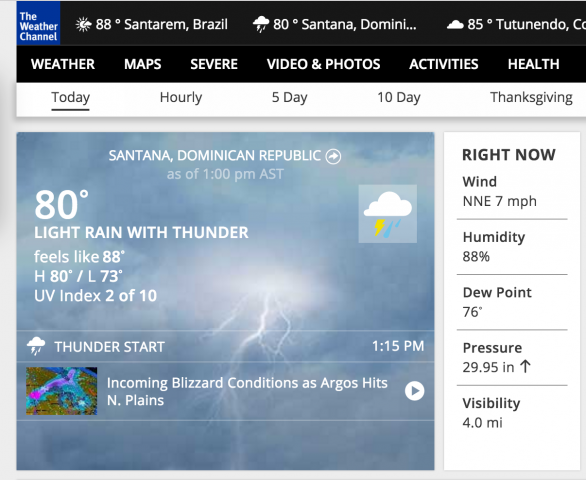

Deciding to focus on the music, we discovered the mp3 Little Bits piece. This piece allowed us to bring music generated in p5.js into the Little Bits circuit, where we could modify and manipulate it the same way we had been manipulating the sound from the Little Bits pieces. Our concept was to have a stream of melodies generated by our program, and a collaborative jam session between the coded music and our own improvisations on the Little Bits pieces. After some brainstorming, we came up with the idea of translating strings, possibly scraped from places like Twitter or the NYTimes, into melodies, but in a very meta way. The program would translate any letter from A to G into its corresponding note; if a character was not one of those letters, it would simply hold the previous note, or rest. Given a string such as “a ce melody”, our program would translate it into AAAACEEEEEEDD. This also required us to learn about the p5.sound library and to create our own scale using an array of MIDI note numbers.
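The character-to-note rule above can be sketched in plain JavaScript, the language behind p5.js. This is a minimal illustration under stated assumptions, not our original sketch: the scale (natural notes in octave 4, with C4 = 60 and A4 = 69), the `-` symbol for a rest, and the helper names `textToMelody`, `midiToHz`, and `melodyToFrequencies` are all hypothetical. Each character yields exactly one step; the letters A to G in either case set the note, and any other character holds the previous note, or rests if no note has sounded yet.

```javascript
// Hypothetical scale: natural notes in octave 4 as MIDI note numbers (C4 = 60, A4 = 69).
const NOTE_TO_MIDI = { C: 60, D: 62, E: 64, F: 65, G: 67, A: 69, B: 71 };
const REST = "-";

// One step per character: A-G (any case) sets the note; any other
// character holds the previous note, or rests if nothing has played yet.
function textToMelody(text) {
  const steps = [];
  let current = REST;
  for (const ch of text) {
    const upper = ch.toUpperCase();
    if (/^[A-G]$/.test(upper)) {
      current = upper;
    }
    steps.push(current);
  }
  return steps;
}

// Equal-temperament conversion from MIDI note number to Hz (A4 = 440 Hz).
function midiToHz(midi) {
  return 440 * Math.pow(2, (midi - 69) / 12);
}

// Map each step to a frequency; rests become null.
function melodyToFrequencies(steps) {
  return steps.map((step) => (step === REST ? null : midiToHz(NOTE_TO_MIDI[step])));
}

const steps = textToMelody("a ce melody");
console.log(steps.join(""));
console.log(melodyToFrequencies(steps));
```

In a p5 sketch, each frequency could be passed to a `p5.Oscillator` through its `freq()` method; p5.sound also provides `midiToFreq()`, which performs the same conversion as the hypothetical `midiToHz` here.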

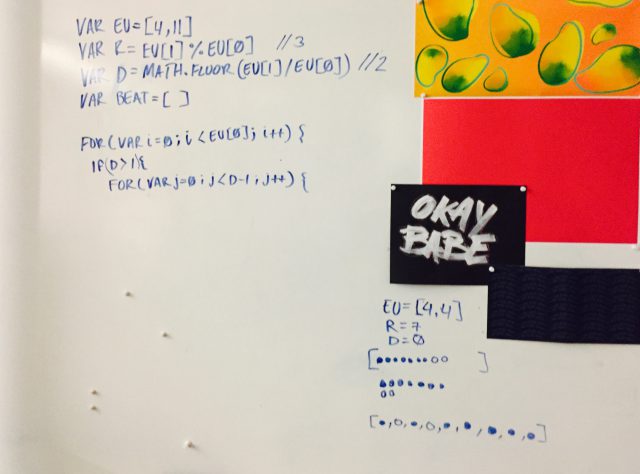

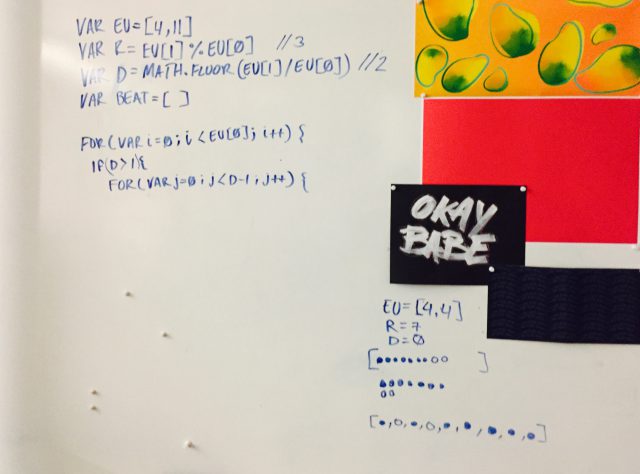

We wanted our music to have some depth, so we figured we could also generate a bass line. If we were using the content of the article or the tweet as the melody, then the username or author’s name could be used for the bass line. This was when we had the pleasure of learning about Euclidean rhythms (Godfried Toussaint). Given two numbers, the algorithm creates a beat by distributing the smaller number of onsets as evenly as possible across the larger number of steps. Some of these patterns can be found in traditional music, such as E(3,8): “In Cuba it goes by the name of the tresillo and in the USA is often called the Habanera rhythm” [Toussaint]. We would then use the mp3 Little Bit to bring in the melody and the MIDI Little Bit for the bass line, which would let us manipulate the two parts independently.
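For illustration, E(k, n) can be computed in a few lines of JavaScript. This is a hedged sketch, not the code we wrote: rather than Bjorklund's algorithm, which Toussaint's paper builds on, it swaps in a simpler Bresenham-style test that marks step i as an onset whenever i·k crosses a new multiple of n. That produces the same maximally even patterns up to rotation, and for E(3,8) it reproduces the tresillo directly. The function names `euclid` and `show` are hypothetical.

```javascript
// Euclidean rhythm E(k, n): spread k onsets as evenly as possible over n steps.
// Bresenham-style construction, not Bjorklund's algorithm; patterns agree
// with Toussaint's up to rotation.
function euclid(k, n) {
  if (!Number.isInteger(k) || !Number.isInteger(n) || n <= 0 || k < 0 || k > n) {
    throw new RangeError("euclid expects integers with n > 0 and 0 <= k <= n");
  }
  // Step i is an onset when floor(i * k / n) increases, which is
  // equivalent to (i * k) % n < k.
  return Array.from({ length: n }, (_, i) => (i * k) % n < k);
}

// Render a pattern as "x" for an onset and "." for a rest.
function show(pattern) {
  return pattern.map((hit) => (hit ? "x" : ".")).join("");
}

console.log(show(euclid(3, 8))); // x..x..x.
```

Because the result is only defined up to rotation, shifting the array changes where the pattern starts without changing its spacing; E(5,8) here comes out as `x.x.xx.x`, a rotation of the cinquillo.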

Unfortunately, because the melody followed patterns within the string, it didn’t follow any regular rhythmic time at all. This made it difficult to align with any bass line. Though we thoroughly enjoyed coding and learning about Euclidean rhythms, we ended up not using this algorithm.

3rd Iteration

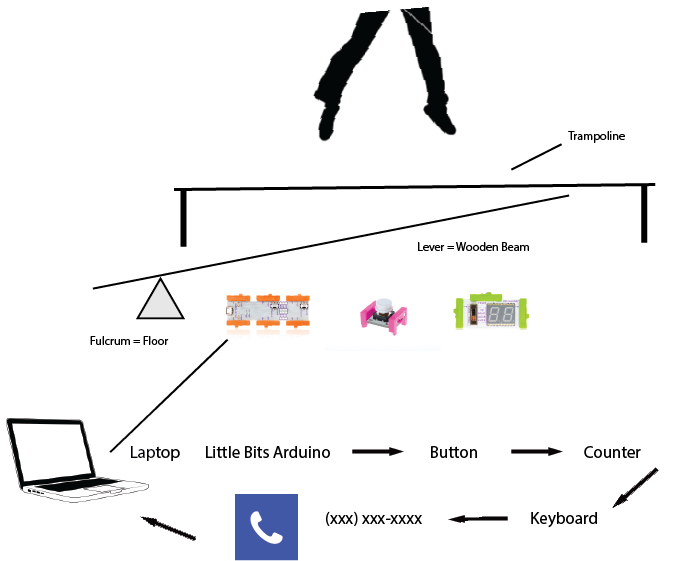

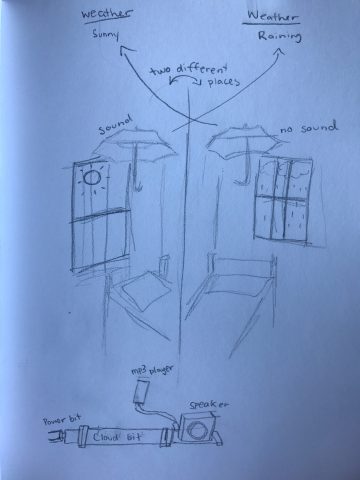

Once we had finalized our melody code, we began to set up all of our pieces. We connected Krawleb’s computer to the mp3 Little Bits piece with an aux cord to play our coded melodies into the circuit. We then attached a filter and a delay after the mp3 piece and connected them to the speaker. We connected a splitter to the speaker, with one branch going to a microphone that recorded into Antar’s computer and the other branch going to headphones. We were really excited to start manipulating the melodies and adding our own improvised music, but we quickly discovered the limitations of the Little Bits. The audio would produce sharp clipping that sounded like the speaker was blowing out. At first we thought one of the bits was broken, but after testing each piece individually, we realized that the problem was not a single piece but the number of pieces: whenever we added a 10th piece to our chain, no matter which piece it was, the clipping appeared. Unfortunately, any sort of collaboration with our generative melody would have required at least 10 connected pieces, so we decided instead to record our manipulated melody and then, separately, record ourselves creating our own music.

Throughout the project we ran into other limitations of the Little Bits, such as overheating, weak power, sound compression, and a fatality (a delay blew out). We spent a ton of time making really interesting music but failed to record most of it. In retrospect, we should have begun recording earlier and much more frequently, so as to have a larger library to mix with. We should have also incorporated a simple networked component.

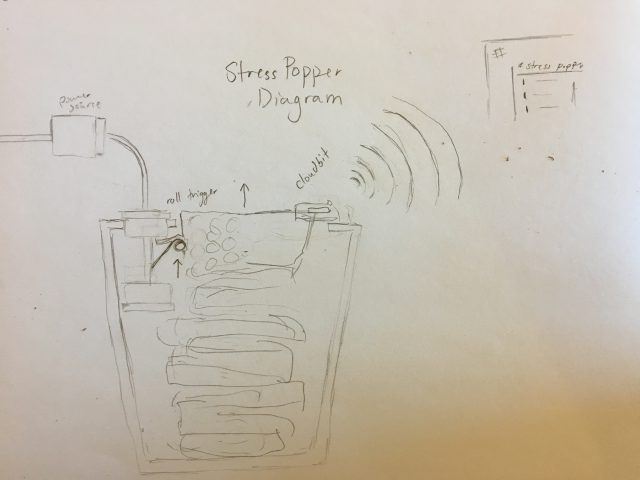

Diagram of the StressPopper. The power source is connected to a roll trigger, which is triggered every time a piece of bubble wrap is pulled out. The trigger is connected to a CloudBit, which activates the IFTTT Applet that posts to the #stresspopper Slack channel.
