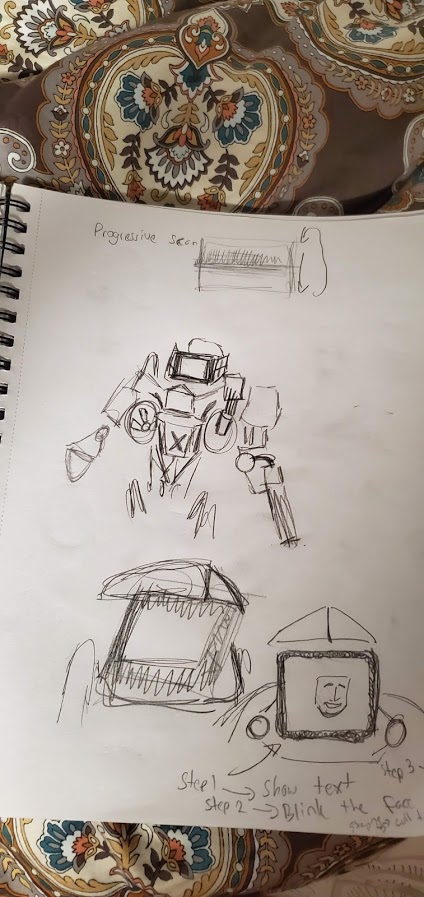

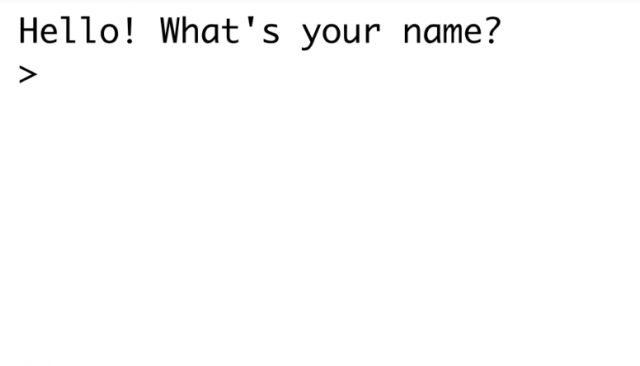

I made an annoying robot that withholds a cute picture from you for as long as it can. It's terminal-based.

I wanted it to be a little unclear how much this robot knows. At one point it asks you to do something and then doesn't check whether you've done it; it just moves on regardless. I also wanted to see how much work you can get out of somebody by slowly adding more and more tasks. It's like the sunk-cost fallacy: they've come this far, so they can't stop now. It's just a prototype, and I'd like to polish it and make it longer, but I think it captures the idea.

Here are some videos of people playing it:

Here's the link to the project: https://editor.p5js.org/gray/sketches/XQDS1U6gE