10. The interface uses metaphors that create illusions: I am free, I can go back, I have unlimited memory, I am anonymous, I am popular, I am creative, it's free, it's neutral, it is simple, it is universal. Beware of illusions!

Propositions:

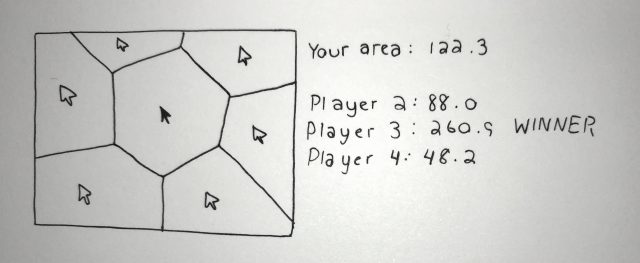

Imagine your desktop is a kitchen, a garden, a hospital, a computer. Now, imagine it using no metaphor.

Call 5 random Facebook friends and ask them for money.

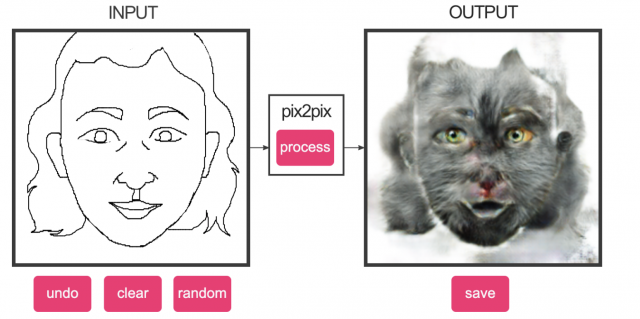

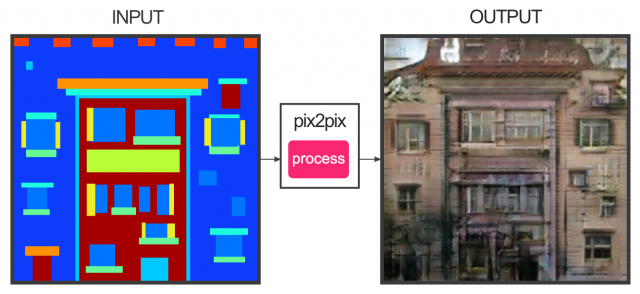

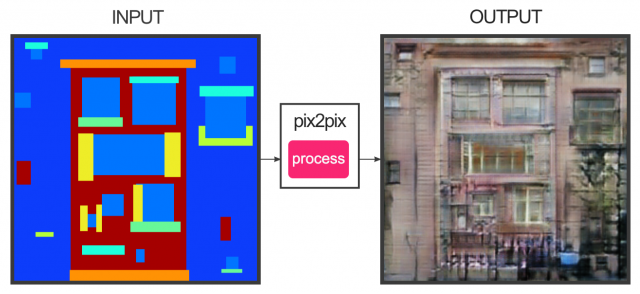

Perform Ctrl+Z on real life. Invent new gestures to bring digital possibilities to oral conversations.

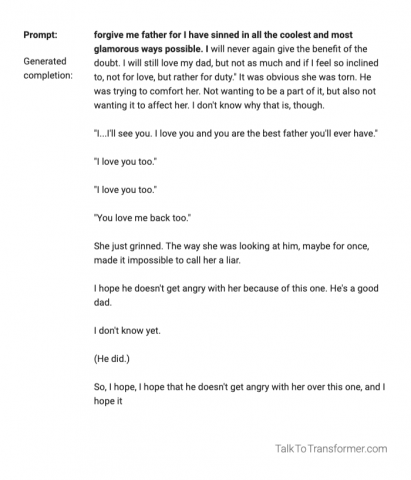

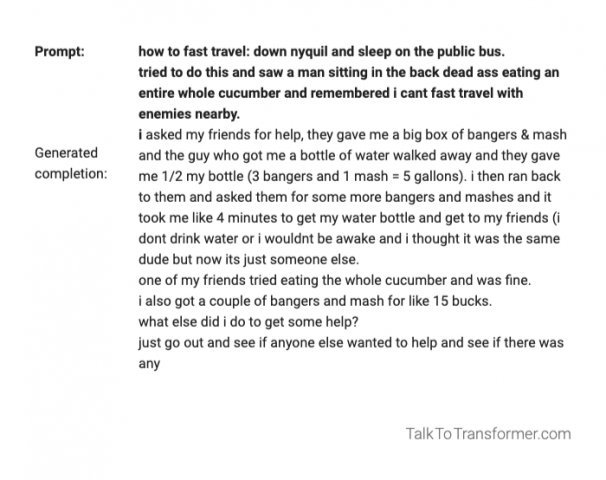

Some of my favorite tenets were 3, 7, 9, and 13, but 10 was my absolute favorite. I first realized the extent to which interfaces create illusions back when iOS switched from skeuomorphism to flat design in 2013, and it's been weirdly haunting to think about ever since. On one level, the illusions include how the Contacts app on iOS used to look like a Rolodex, with realistic colors, textures, and sound design. That was a visual illusion that imbued the interface with a sense of productivity, utility, and authority. Now the Contacts app is a matte white Apple Interface (TM) like any other, but this is another kind of illusion, as described in the tenet: one of "free"-ness, "neutrality", "simplicity", and "universality." In truth, it is none of these for everyone everywhere (not even 'simple'! Maybe it's simple for me, but it might not be for an elderly person in Mongolia).

But it goes beyond one app on one OS to the entire collective understanding of how an interface should work. The 'trash', the idea of 'documents' and the 'folders' they're stored in, and especially 'windows' and the 'desktop' are such fascinating metaphors, invented in very specific contexts by very specific (probably white straight male) software engineers in the '80s (other than Susan Kare; she is cool). The ability to 'go back' or 'refresh' your situation, the idea that you have established another degree of human relationship by becoming 'friends' on Facebook: it's all an illusion.

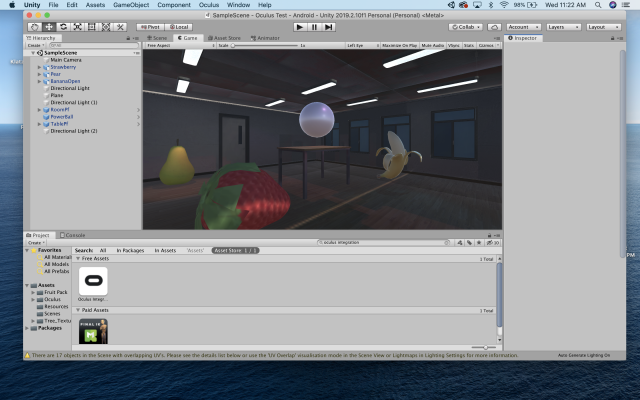

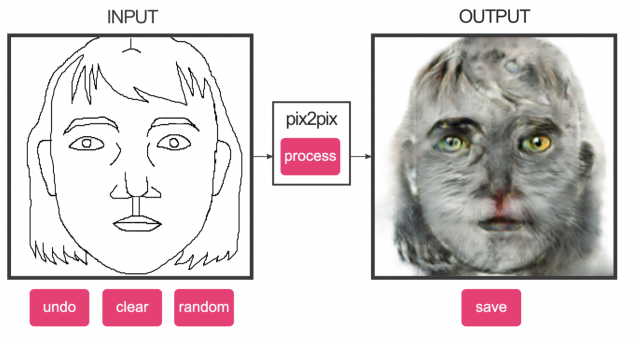

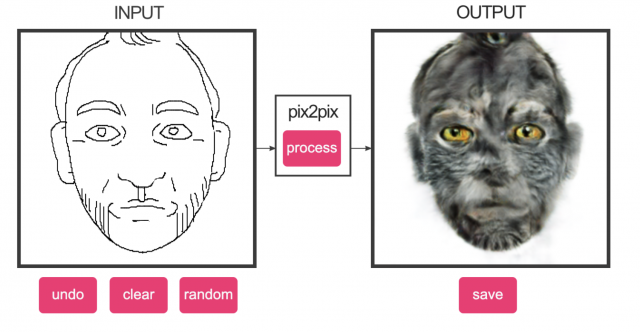

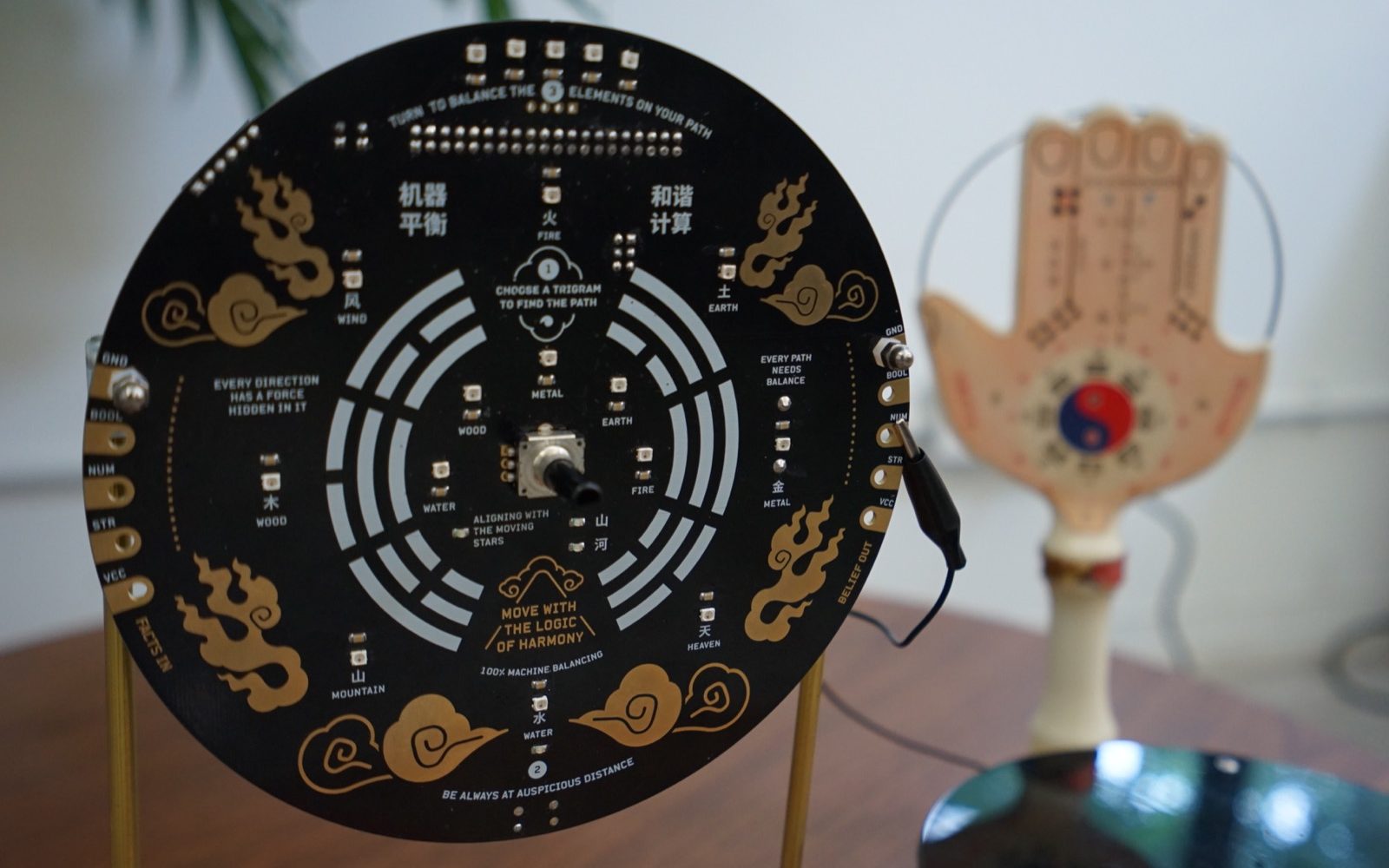

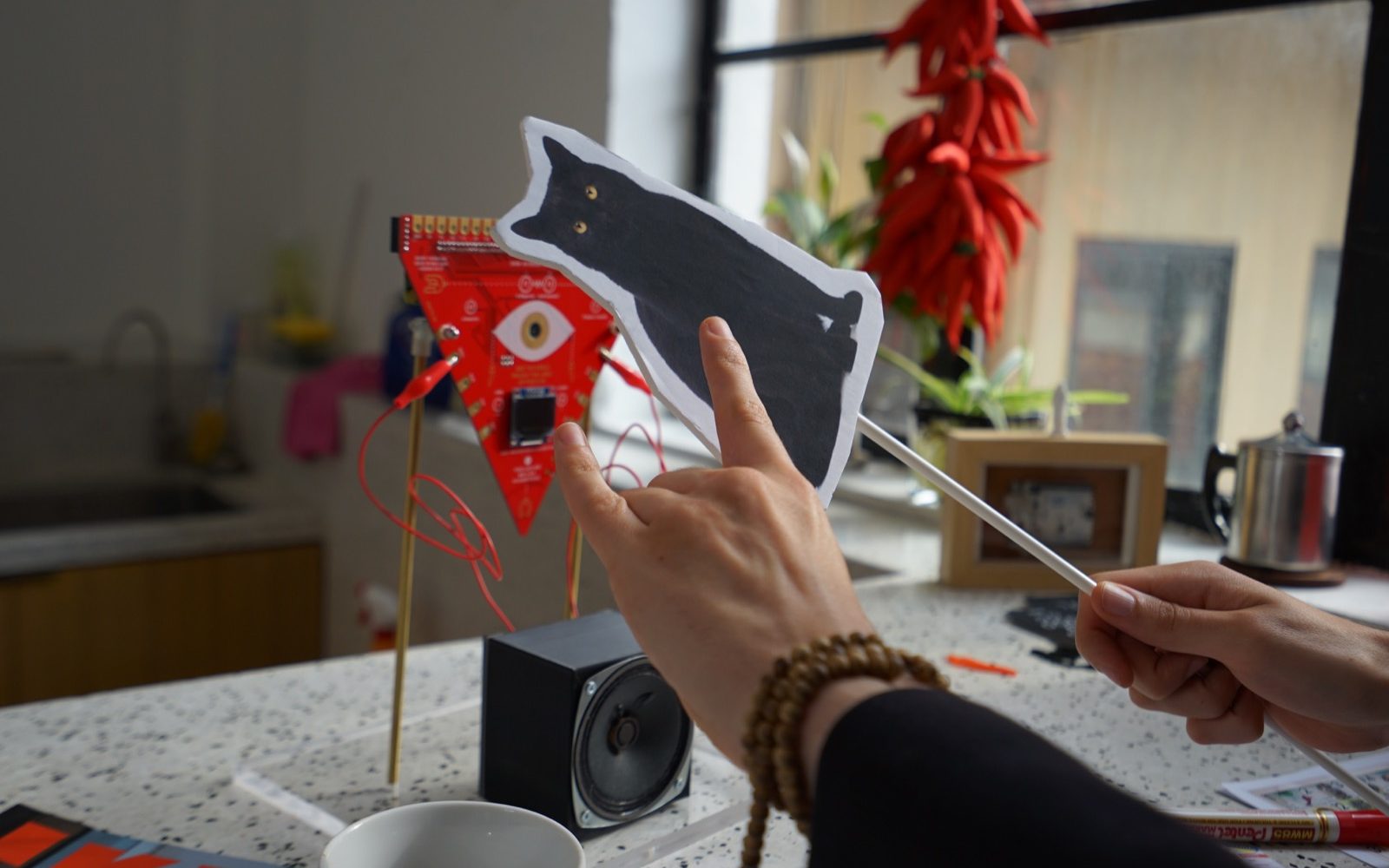

As an aspiring (sort of) UX designer, I want to know how I can use this deeper understanding of how interfaces are constructed to make art that is critical of them.